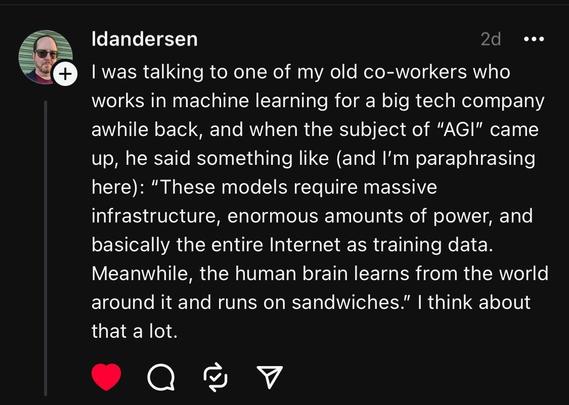

Oh, the power plant problem is one I love to point out too: a 100-watt brain vs. a gigawatt computer cluster.

It's what I said when IBM's Watson did that game show nonsense. Require Watson to be in the room, running on its own mobile power source, and have it beat people at quizzes. Then we'll talk.