https://lingualibre.org/wiki/Special:RecordWizard

- I work with the #WikiapiJS bot framework

- Live edit logs : https://lingualibre.org/wiki/Special:RecentChanges?hidehumans=1&limit=1000&days=7&enhanced=1&urlversion=2

- Github code : https://github.com/hugolpz/Dragons_Bot

#Lingualibre #wikibot

🤖🐲Another long day with User:Dragons_Bot!

Months ago, I activated several #SignLanguages on #LinguaLibre. People can video-record signed words. While doing activity stats, a #SPARQL query showed missing data on 467 language items. Dragons_Bot just fixed those. This will be useful for the upcoming 3rd recording type, for #WhistledLanguages. 😉

Today, I use the Lingualibre Wikibase as a calm pad for coding my bot. Someday, I will move to #Wikidata for live editing on languages. 🎉

https://en.wikipedia.org/wiki/Whistled_language

🤖🐲 User:Dragons_Bot to the rescue ! Doing clean ups !

Did you know ? Lingualibre has 219 #languages recorded, but a #SPARQL query will return 221 languages. Why ? Because Chinese, for example, is erroneously present twice 😲 :

- ❌ as Q130, iso `zho`, for #Chinese writing

- ✅ as Q113, iso `cmn`, for Chinese Mandarin

Tonight, I'm coding a script to move all records to `cmn`, on both #Lingualibre's items and #Commons' file wikipages. Fighto ! ò__ó

[2:25am edit: Well, I added a few more hours and finished that task ò__ó]
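
The retagging step can be sketched as a plain text transformation, run before the bot saves anything. This is a minimal sketch, not Dragons_Bot's actual code: the helper name `retagLanguage`, the parameter name `languageId` and the sample wikitext are assumptions for illustration. Only the ids Q130 and Q113 come from the toot above, and real Commons pages may store a different kind of id.

```javascript
// Hypothetical helper: swap one template parameter value for another.
// `param` and `oldId` are assumed to be plain alphanumeric strings,
// so they are placed in the regular expression without escaping.
function retagLanguage(wikitext, param, oldId, newId) {
  // Match "| param = oldId" with flexible whitespace, and only when oldId
  // is the whole value (so Q130 does not match inside Q1301).
  const pattern = new RegExp(`(\\|\\s*${param}\\s*=\\s*)${oldId}(?![0-9A-Za-z])`, 'g');
  return wikitext.replace(pattern, (match, prefix) => prefix + newId);
}

// Assumed sample page content, for illustration only.
const before = '{{Lingua Libre record\n | languageId = Q130\n | transcription = 高低\n}}';
const after = retagLanguage(before, 'languageId', 'Q130', 'Q113');
console.log(after);
```

Keeping the rewrite as a pure function makes it easy to dry-run on a small sample of pages before letting the bot edit for real.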

https://lingualibre.org/wiki/Help:SPARQL_for_maintenance#Counts

This new year, I'm working on an open-licence dump of 8,598 #Chinese audio recordings.

# The source

Those files were part of the original project, Shtooka recorder (2005-2016), which @Wikimedia_Fr and Nicolas Vion recoded and renamed #Lingualibre (2016+). The total dumps of 150~300k audios have been lying there for 8 years now, in need of processing and migration to #WikimediaCommons.

# Scraping

In past years, I noticed that the webpages of this archive project had collapsed. Data still seemed available through one or two access points. Yesterday I scraped all I could get:

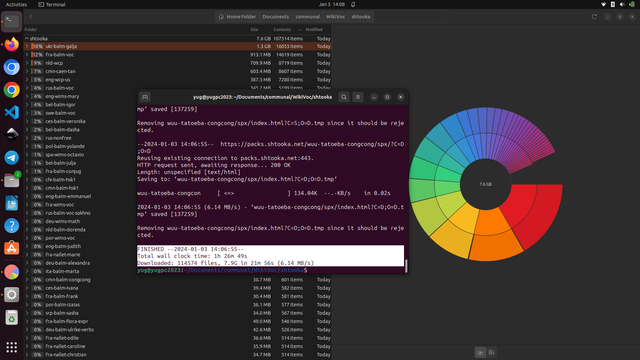

# Download all from Shtooka

$ wget -r -np -nc -nH --accept=.flac,.html --no-check-certificate https://packs.shtooka.net/

>FINISHED --2024-01-03 14:06:55--

>Total clock time: 1h 26m 49s

>Downloaded: 114574 files, 7.9G in 21m 56s (6.14 MB/s)

😿 Many filepaths failed

💖 #Chinese HSK succeeded

🔉 高低 gāodī: height

# INSPECT

I now have 8,598 Chinese #HSK audios. As usual, we progress with a small sample of files. The cycle goes :

- investigate

- code

- fix

- expand.

Thank god I have documented most of my actions on #Lingualibre for the past decade ! Dozens of Help pages to onboard junior programmers.

https://lingualibre.org/wiki/Help:Converting_audios#Helpers

I updated the command a bit thanks to ChatGPT's suggestions. To print the Shtooka files' rich metadata, try :

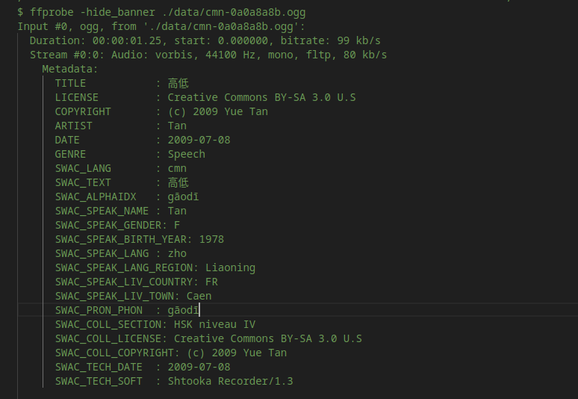

$ ffprobe -hide_banner ./data/cmn-0a0a8a8b.ogg

*HSK version 1, from the 2000s
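
For scripting, ffprobe can also emit JSON (`-print_format json -show_format -show_streams`) instead of the human-readable report. The sketch below assumes that output shape and merges stream-level and container-level tags into one object. The helper name `extractTags` is hypothetical, and the sample report with its tag names and values is an invented placeholder, not real Shtooka metadata.

```javascript
// Merge the tags of an ffprobe JSON report into one flat object with
// lower-cased keys. Depending on the container, tags may sit on a stream
// or on the format section, so both places are read.
function extractTags(ffprobeJson) {
  const report = JSON.parse(ffprobeJson);
  const sources = [
    ...(report.streams || []).map((stream) => stream.tags || {}),
    (report.format && report.format.tags) || {},
  ];
  const tags = {};
  for (const source of sources) {
    for (const [key, value] of Object.entries(source)) {
      tags[key.toLowerCase()] = String(value).trim();
    }
  }
  return tags;
}

// Placeholder report: keys and values are made up for this example.
const sample = JSON.stringify({
  streams: [{ codec_name: 'vorbis', tags: { TITLE: 'example word' } }],
  format: { filename: 'example.ogg', tags: { LICENSE: 'example licence ' } },
});
console.log(extractTags(sample));
```

Lower-casing the keys avoids surprises when the same field is spelled `TITLE` in one file and `title` in another.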

# METADATA OF INTEREST

Among the twenty metadata fields this Chinese audio file contains, several are of interest.

```

- speaker name

- speaker LL id

- speaker gender

- uploader username

- Wikidata language id

- word

- date of creation

- open license

```

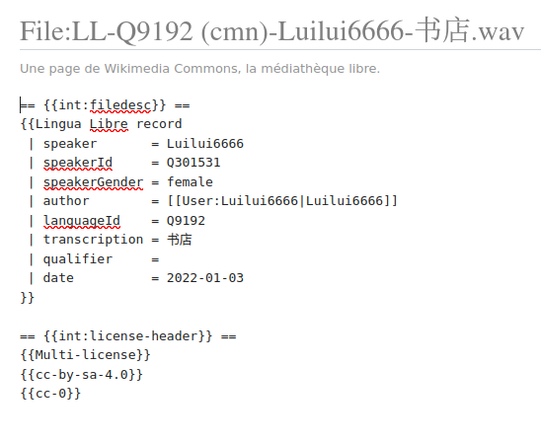

Great ! I need those values for Wikimedia Commons' Template:Lingua_libre_record. 😉

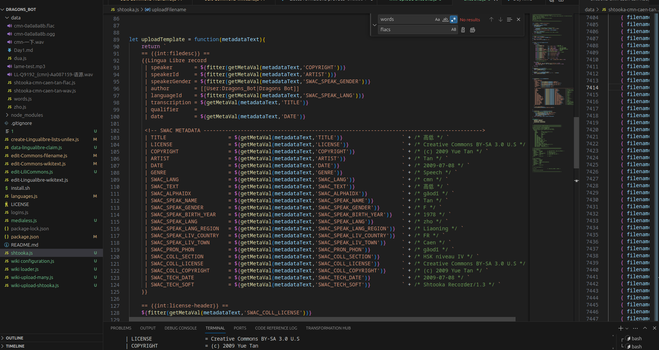
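
Turning those values into template wikitext could look like the sketch below. It is only an illustration under assumptions: the helper name `buildRecordWikitext` is hypothetical, the parameter names (`speaker`, `speakerId`, `author`, `languageId`, `transcription`, `date`) are my guess at the template's fields and should be checked against its documentation, and the sample values are placeholders.

```javascript
// Build the wikitext of one file description from a metadata record.
// Parameter names are assumed, not confirmed by the template documentation.
function buildRecordWikitext(meta) {
  const fields = [
    ['speaker', meta.speakerName],
    ['speakerId', meta.speakerId],
    ['author', meta.uploader],
    ['languageId', meta.languageId],
    ['transcription', meta.word],
    ['date', meta.date],
  ];
  // Skip fields the audio file did not provide.
  const lines = fields
    .filter(([, value]) => value !== undefined && value !== '')
    .map(([name, value]) => ` | ${name} = ${value}`);
  return ['{{Lingua libre record', ...lines, '}}'].join('\n');
}

// Placeholder record: only the word comes from the thread.
const wikitext = buildRecordWikitext({
  speakerName: 'Example Speaker',
  languageId: 'Q0000',
  word: '高低',
  date: '2000-01-01',
});
console.log(wikitext);
```

Missing fields are dropped rather than written empty, so incomplete metadata is easy to spot in the output.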

# WIKIBOT

Dragons_Bot stands upon NodeJS and #WikiapiJS, a powerful JS framework I use. (I love this project, so I was also involved in its documentation.) It has 44 stars ⭐ on GitHub :

https://kanasimi.github.io/wikiapi/

As of now, I have a decent script which creates the suitable wikitext and filename for each upload, audios converted to .wav, and a clean data file to run the whole process.
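
The filename part can be sketched as below. This is a hedged illustration, not the script itself: the helper name `buildFilename` is hypothetical, the `LL-<Qid> (<iso>)-<speaker>-<word>.wav` pattern is my assumption of the naming convention, and the Qid and speaker are placeholders.

```javascript
// Build an upload filename from a few metadata values.
// Characters that are awkward in wiki titles are replaced with a dash.
function buildFilename({ languageQid, iso, speaker, word, extension = 'wav' }) {
  const clean = (text) => String(text).replace(/[#<>\[\]|{}:\/]/g, '-').trim();
  return `LL-${languageQid} (${iso})-${clean(speaker)}-${clean(word)}.${extension}`;
}

// Placeholder values: only `cmn` and the word come from the thread.
const filename = buildFilename({
  languageQid: 'Q0000',
  iso: 'cmn',
  speaker: 'Example Speaker',
  word: '高低',
});
console.log(filename);
```

Generating the name from the same record as the wikitext keeps the two in sync when the data file changes.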

Sidenote: A more popular alternative for JS devs wanting a #wikibot is #WTF_wikipedia (750 ⭐ ) :

https://github.com/spencermountain/wtf_wikipedia/graphs/contributors

[Temporary toot]

Ok ! I'm done for today. As visible in the previous screenshot, I got too much Shtooka metadata from the audio files (ffmpeg). I need enlightenment from Nicolas Vion to know whether the duplications I see there are indeed duplications or not. Phoning him later. Once I get the green light, I can mass-import those HSK audios.

Chinese + Lingualibre + WikimediaCommons + Wikibot thread ➡️ cc @Ash_Crow @harmonia_amanda

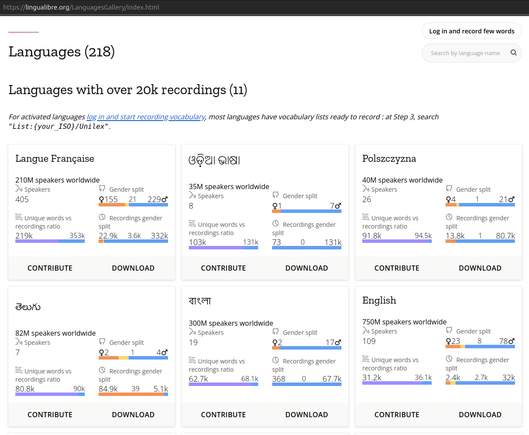

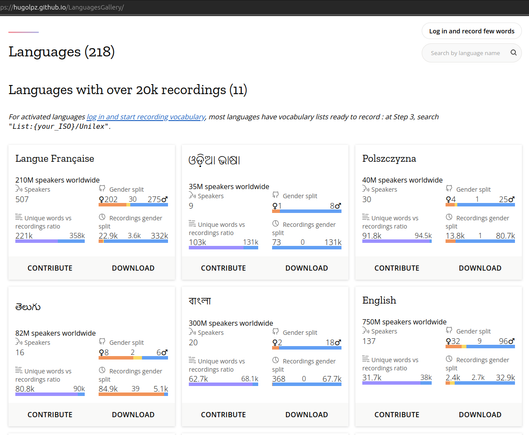

Will you notice the differences ?

@lingualibre being #OpenSource, web developer and Wikimedian @elfix jumped in and switched our SPARQL endpoint URL. This revives an important but heavy query to document and visualize #Gender biases, in order to counter them.

#LinguaLibre, like most @Wikimedia projects, reproduces gender and diversity biases. Our movement therefore leads explicit efforts for inclusivity and diversity.

- Prod https://lingualibre.org/LanguagesGallery/index.html

- Dev https://hugolpz.github.io/LanguagesGallery/

**90% of Wikipedia's Editors Are Male—Here's What They're Doing About It.**

The group that oversees the free encyclopedia is trying to fix a years-old problem.

By Robinson Meyer

🎉 Finally ! Wikimedia Commons was blocking upload of files & filenames with characters in some minority #languages writing systems.

With today's change those characters and filenames are now accepted on Commons and therefore #Lingualibre. *It allows Lingualibre & Wikimedia to support more and smaller languages & cultures.*

It may not seem like much, but Lili members have been pushing this issue since 2021.

It's now fixed thanks to MW dev @LucasWerkmeister and User:Nikki.❤️ https://commons.wikimedia.org/w/index.php?title=MediaWiki%3ATitleblacklist&diff=845765023&oldid=829500280

Woow !! #LinguaLibre just made a +6 languages jump tonight ! I saw some unfamiliar ISO codes yesterday in the recent changes logs; I need to investigate.

We are now at 227 open content audio lexicons !

https://hugolpz.github.io/LanguagesGallery/

240 languages !

Thanks to #Indonesia's #Haji language, there are now 240 #languages on #lingualibre .

- Stats: https://hugolpz.github.io/LanguagesGallery/

- Wikidata: https://wikidata.org/wiki/Q5639933

- Wikipedia: https://en.wikipedia.org/wiki/Haji_language

Lingualibre continues to provide user-friendly systems for all language communities, including smaller ones, to rapidly record their local vocabulary.

Lingualibre is rich in #language.s data but also in huge need of web developers and outreach efforts.

I've been monitoring potential grants for some time, gathering all those I could identify in a Grants table.

https://meta.wikimedia.org/wiki/Template:Grants

@Wikimedia_Fr and the French Ministry of Culture are providing a helpful yearly lifeline for software maintenance.

But we need more to stay above water and be bold.

@wikimediafoundation's Technology Fund was my main hope. But after I monitored it for 2 years, and despite being a very necessary lifeline for Wikimedia's open source projects, it hasn't started yet. 😢 I still hope it will someday, but ... https://meta.wikimedia.org/wiki/Grants:Programs/Wikimedia_Research_%26_Technology_Fund

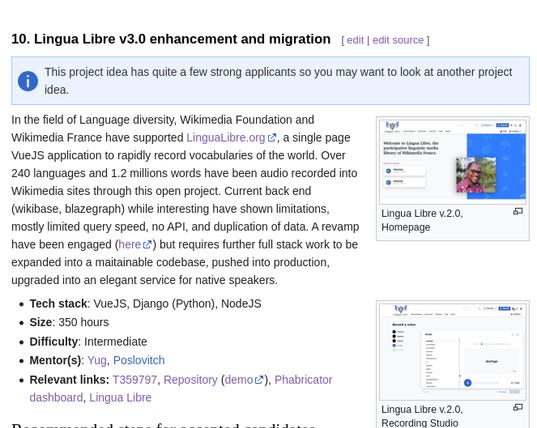

Two months ago, the @wikimediafoundation was accepted into the Google Summer of Code 2024 ! #gsoc24

The GSoC is a @Google funded summer project where Google pays the internship for junior developers to contribute to open source codebases.

So I put on my grant writer / mentor hat, and submitted not one, but TWO Lingualibre coding projects.

## LinguaLibre v3

The first is to speed up the deployment of the next version of Lingualibre, an easier-to-maintain, easier-to-query application. It is critical for every open source project to be easy to dive into, to fix, and to expand. This effort will be co-mentored with @Poslovitch

And gosh ! In just a few days, *7* junior developers expressed interest in this #Lingualibre GSoC24 . I onboarded 5 of them, 3 of whom have already cloned the repository and started hacking around that #Django/Vuejs. 😮🎇

## LinguaLibre SignIt

The second one is to revamp #SignIt, the click-to-translate web extension we use for #SignLanguage. This extension is cute, awesome, and can assist us all to learn sign languages ! But it is at a dead end for two reasons :

- it's Firefox-only, and Firefox has <5% market share nowadays

- #webextension.s are phasing out the version of the code we use.

A whole revamp is needed !

🔥IMPORTANT: We are looking for a 2nd mentor, with solid git/github (and ideally webextension) know-how.

@harmonia_amanda , thank you for the `alts`. I took them. (was in a rush this morning)

I implemented your typo fix: https://github.com/hugolpz/LanguagesGallery/commits/main/

Thank you <3

Refreshing the page should show the fix.

So the recordings are now all correctly tagged and the 3 obsolete language items —which corrupted our stats— have been neutralized into redirects to the rightful Qid.

cc @Poslovitch

https://github.com/unicode-org/unilex

@theklan @alexture Unilex is from 2017 or earlier. Its advantages were an MIT-like open licence and 1001 languages covered. Unfortunately the project isn't maintained. Other corpora I reviewed in 2021 (👇🏼) had far fewer languages. Since 2022 and LLMs, some high-quality corpora covering 1000+ languages exist, but they are corporate and copyrighted🔒. There are smaller multilingual or language-specific frequency lists which are based on better raw data. It will surely be the case for EUS.

https://lingualibre.org/wiki/LinguaLibre:Events/2021_UNILEX-Lingualibre#Corpora_discussion

I keep an unofficial review of the hyper-multilingual literature on the page below.

https://lingualibre.org/wiki/LinguaLibre_talk:Citations