Facebook (sorry: Meta) AI: Check out our "AI" that lets you access all of humanity's knowledge.

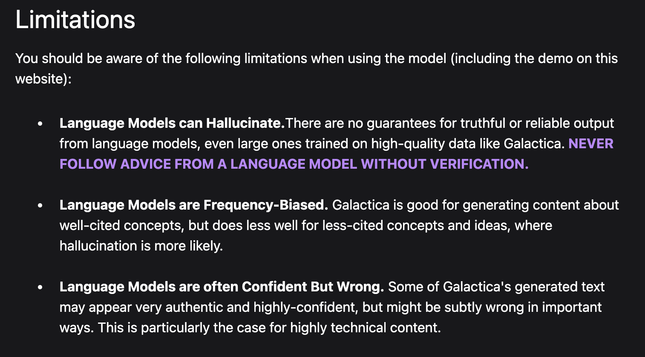

Also Facebook AI: Be careful though, it just makes shit up.

This isn't even a case of "they were so busy asking if they could"; rather, they failed to spend even 5 minutes asking if they could.