AI для PHP-разработчиков. Часть 6: Bag of Words и TF–IDF – как компьютер превращает текст в математику

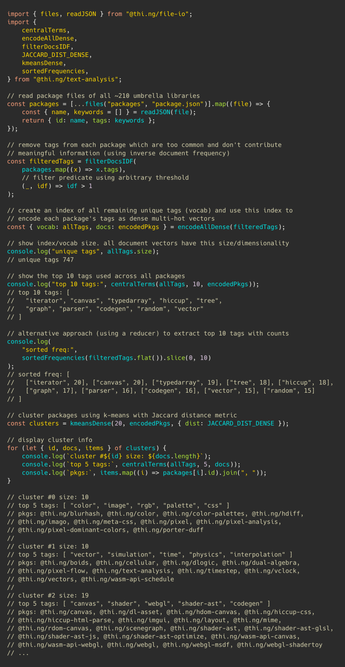

Когда мы говорим, что нейросети "понимают текст", легко забыть: компьютер изначально вообще не понимает слова. Для него текст – это набор чисел, статистики и векторов. В этой статье разберём Bag of Words и TF–IDF – фундаментальные подходы, с которых начинались NLP, поисковые системы и анализ текста. А заодно реализуем поиск похожих документов на чистом PHP без библиотек.

https://habr.com/ru/articles/1034874/

#php #machinelearning #bagofwords #tfidf #BoW #NLP #обработка_естественного_языка #cosine_similarity #векторизация_текста #машинное_обучение

AI для PHP-разработчиков. Часть 6: Bag of Words и TF–IDF – как компьютер превращает текст в математику

Как компьютер превращает текст в числа и почему TF–IDF десятилетиями оставался основой поисковых систем. Разбираем Bag of Words, TF–IDF и поиск похожих документов на чистом PHP. Это шестая часть...