I've been looking into matrix multiplication using std::simd and std::mdspan/submdspan (all single-threaded).

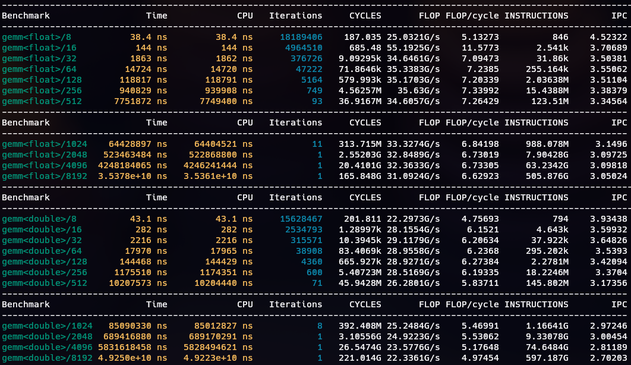

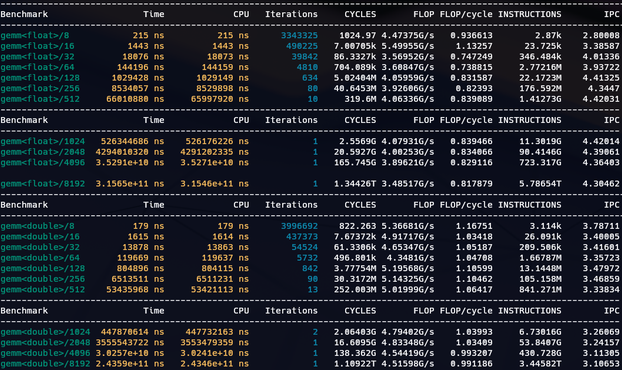

I got to 86% of peak FLOP/s. On x86_64 with AVX2, peak is 32 FLOP/cycle for float and 16 FLOP/cycle for double (two 256-bit FMAs per cycle).
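The peak numbers follow from register width, FLOP per FMA and FMA throughput. Here is a small compile-time sketch of that arithmetic. It assumes 256-bit vectors, two FMA instructions per cycle and 2 FLOP (multiply + add) per FMA lane. The function name `peak_flop_per_cycle` is just for illustration.

```cpp
#include <cstddef>

// Peak FLOP per cycle of one core, assuming 256-bit (AVX2) vectors and
// two FMA instructions issued per cycle; one FMA lane counts as 2 FLOP (mul + add).
template <typename T>
constexpr std::size_t peak_flop_per_cycle(std::size_t vector_bits = 256,
                                          std::size_t fma_per_cycle = 2) {
  const std::size_t lanes = vector_bits / (8 * sizeof(T));  // elements per register
  return lanes * 2 * fma_per_cycle;
}

static_assert(peak_flop_per_cycle<float>() == 32);   // 8 lanes * 2 FLOP * 2 FMA
static_assert(peak_flop_per_cycle<double>() == 16);  // 4 lanes * 2 FLOP * 2 FMA

// 86% of peak: roughly 27.5 FLOP/cycle (float) or 13.8 FLOP/cycle (double).
constexpr double achieved_float = 0.86 * peak_flop_per_cycle<float>();
constexpr double achieved_double = 0.86 * peak_flop_per_cycle<double>();
```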

I suspect getting closer to peak needs a more cache-friendly layout mapping. This version uses layout_right (row-major).
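To show what "more cache-friendly" could mean, here is the index arithmetic of layout_right next to a hypothetical tiled mapping. In the tiled mapping each T x T tile is contiguous in memory. std::mdspan does accept user-defined layout policies, but the functions below only sketch the offset computation. `tiled_offset` and the tile size `T` are illustrative assumptions, not a standard layout.

```cpp
#include <cstddef>

// layout_right (row-major): element (i, j) of a matrix with `cols` columns
// lives at i * cols + j, so consecutive rows of a tile are `cols` elements apart.
constexpr std::size_t row_major_offset(std::size_t i, std::size_t j, std::size_t cols) {
  return i * cols + j;
}

// Hypothetical tiled ("blocked") mapping, not a standard layout policy:
// the matrix is split into T x T tiles stored one after another (tiles in
// row-major order), and each tile is row-major internally. Assumes cols % T == 0.
constexpr std::size_t tiled_offset(std::size_t i, std::size_t j, std::size_t cols,
                                   std::size_t T) {
  const std::size_t tile_row = i / T, tile_col = j / T;
  const std::size_t tiles_per_row = cols / T;
  const std::size_t tile_base = (tile_row * tiles_per_row + tile_col) * T * T;
  return tile_base + (i % T) * T + (j % T);
}
```

For an 8x8 matrix with 4x4 tiles, the first tile occupies offsets 0 to 15 under the tiled mapping. Under layout_right, the same tile's rows are 8 floats apart.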

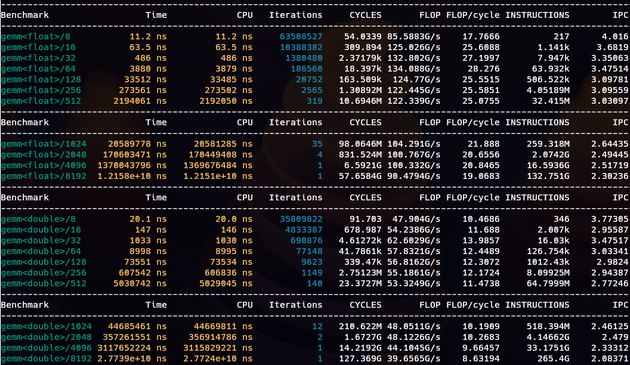

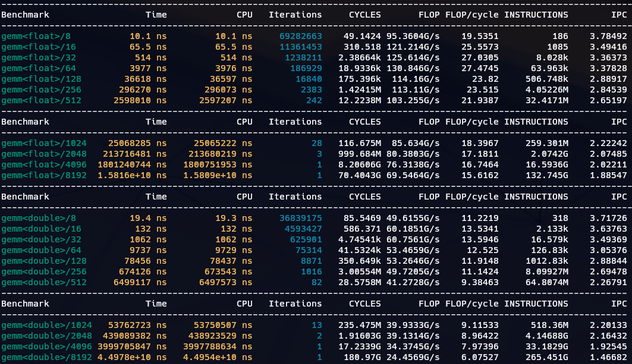

The other kernel memcpys C into a local array, accumulates into that array, and memcpys it back at the end of the kernel. It is thus 100% equivalent to the std::simd kernel, except that the compiler has to turn the innermost loop into the SIMD FMA that std::simd encodes directly.
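The kernels themselves are only in the screenshots. Below is a minimal sketch of what a loop-based kernel of this kind might look like. It assumes a 4 x 8 register tile of C and that A, B and C are row-major with leading dimensions `lda`, `ldb` and `ldc`. The names `loop_kernel`, `MR` and `NR` are hypothetical. Plain copy loops stand in for the memcpy calls so the sketch stays constexpr. The innermost `j` loop is the part the compiler has to auto-vectorize.

```cpp
#include <cstddef>

// Hypothetical register-tile sizes: MR rows of C, NR columns
// (8 floats = one 256-bit AVX2 register per row).
constexpr std::size_t MR = 4, NR = 8;

// C(MR x NR) += A(MR x K) * B(K x NR); all row-major (layout_right).
constexpr void loop_kernel(const float* A, std::size_t lda,
                           const float* B, std::size_t ldb,
                           float* C, std::size_t ldc, std::size_t K) {
  float acc[MR][NR]{};
  for (std::size_t i = 0; i < MR; ++i)          // copy the C tile into a local array
    for (std::size_t j = 0; j < NR; ++j)
      acc[i][j] = C[i * ldc + j];
  for (std::size_t k = 0; k < K; ++k)
    for (std::size_t i = 0; i < MR; ++i) {
      const float a = A[i * lda + k];
      for (std::size_t j = 0; j < NR; ++j)      // must be vectorized into one SIMD FMA
        acc[i][j] += a * B[k * ldb + j];
    }
  for (std::size_t i = 0; i < MR; ++i)          // write the accumulator back to C
    for (std::size_t j = 0; j < NR; ++j)
      C[i * ldc + j] = acc[i][j];
}
```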

The loop version is ~3–4x slower.

TBH, I expected less of a difference.

But anyway: if you want to express data-parallelism, don't write a loop; use std::simd. It helps.
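For comparison, here is a sketch of the same hypothetical tile kernel with the innermost loop replaced by one simd<float> multiply-add per row of C. std::simd was adopted for C++26 but is not widely shipped yet. This sketch therefore assumes `std::experimental::simd` from `<experimental/simd>` (Parallelism TS 2), which libstdc++ provides since GCC 11. `simd_kernel` and `MR` are illustrative names, and B's rows and C's tile rows are assumed to be `V::size()` floats wide.

```cpp
#include <cstddef>
#include <experimental/simd>

namespace stdx = std::experimental;  // TS predecessor of std::simd
using V = stdx::native_simd<float>;  // one register's worth of floats (8 with AVX2)

constexpr std::size_t MR = 4;        // hypothetical: rows of the C tile kept in registers

// C(MR x V::size()) += A(MR x K) * B(K x V::size()), all row-major.
// Each row of the C tile lives in one V accumulator.
inline void simd_kernel(const float* A, std::size_t lda,
                        const float* B, std::size_t ldb,
                        float* C, std::size_t ldc, std::size_t K) {
  V acc[MR];
  for (std::size_t i = 0; i < MR; ++i)
    acc[i].copy_from(C + i * ldc, stdx::element_aligned);  // load a row of C
  for (std::size_t k = 0; k < K; ++k) {
    const V b(B + k * ldb, stdx::element_aligned);          // load a row of B
    for (std::size_t i = 0; i < MR; ++i)
      acc[i] += A[i * lda + k] * b;  // broadcast A(i, k), then multiply-add
  }
  for (std::size_t i = 0; i < MR; ++i)
    acc[i].copy_to(C + i * ldc, stdx::element_aligned);     // store back to C
}
```

The data-parallel step is written as one operation on `V` instead of a loop the optimizer has to recognize. Whether the multiply-add becomes a single FMA instruction still depends on compiler flags (for example `-mavx2 -mfma` and floating-point contraction).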
