I've been looking into matrix multiplication using std::simd and std::mdspan/submdspan (all single-threaded).

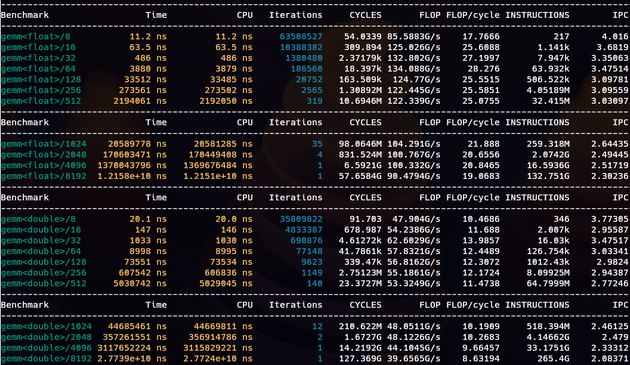

I got to 86% of peak FLOP throughput. On x86_64 with AVX2, the peak is 32 FLOP/cycle in single precision and 16 FLOP/cycle in double precision (2 FMAs per cycle).
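For anyone wondering where those peak numbers come from, here is the arithmetic as a small sketch. The helper name `peak_flop_per_cycle` is made up for illustration; the inputs are 256-bit vectors, 2 FMA issues per cycle, and counting each FMA as two floating-point operations per lane.

```cpp
// Peak FLOP/cycle per core = FMA issues per cycle * SIMD lanes * 2,
// because one fused multiply-add counts as a multiply plus an add.
// peak_flop_per_cycle is a hypothetical helper, not part of any library.
constexpr int peak_flop_per_cycle(int fma_per_cycle, int vector_bits, int element_bits) {
    return fma_per_cycle * (vector_bits / element_bits) * 2;
}

constexpr int avx2_vector_bits = 256;

// float: 256 / 32 = 8 lanes, so 2 * 8 * 2
constexpr int peak_f32 = peak_flop_per_cycle(2, avx2_vector_bits, 32);
// double: 256 / 64 = 4 lanes, so 2 * 4 * 2
constexpr int peak_f64 = peak_flop_per_cycle(2, avx2_vector_bits, 64);

static_assert(peak_f32 == 32);
static_assert(peak_f64 == 16);
```

Multiplying by the core clock in GHz gives the peak in GFLOP/s for a single thread.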

I suspect better performance needs a more cache-friendly layout mapping. This is using layout_right.

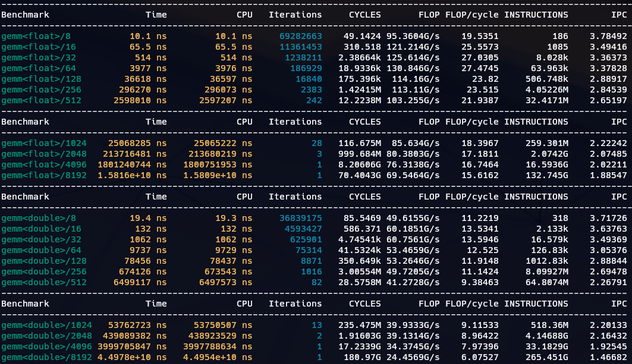

mdspan rocks! A simple switch from layout_right to layout_right_padded and performance for larger matrices goes 📈 up (e.g. 4096×4096 goes from 76 GFLOP/s to 100 GFLOP/s)! I introduced padding of one cache line between rows. With a power-of-two row stride, the elements of a column all map to the same few cache sets, so cache associativity effectively shrinks the usable cache; the padding avoids that.

For small matrices the extra padding is counterproductive, though. But mdspan abstracts it all away. The matrix-mul function is unchanged.
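To make the "function is unchanged" point concrete without depending on a particular standard library, here is a hand-rolled sketch of the idea. It is not my actual kernel and it does not use mdspan or std::simd: `MatrixView`, `Matrix`, and `matmul` are hypothetical names, the multiply is a naive scalar loop, and the 16-float padding assumes 64-byte cache lines. The view plays the role of the layout mapping: a row stride equal to the column count behaves like layout_right, a larger stride like a padded layout.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical stand-in for an mdspan with a row-major mapping:
// element (i, j) lives at data[i * row_stride + j].
struct MatrixView {
    float* data;
    std::size_t rows, cols, row_stride;
    float& operator()(std::size_t i, std::size_t j) const {
        return data[i * row_stride + j];
    }
};

// Owning storage. pad_elems extra floats are appended to every row;
// 16 floats correspond to one cache line if lines are 64 bytes (assumption).
struct Matrix {
    std::size_t rows, cols, row_stride;
    std::vector<float> storage;
    Matrix(std::size_t r, std::size_t c, std::size_t pad_elems = 0)
        : rows(r), cols(c), row_stride(c + pad_elems),
          storage(r * (c + pad_elems), 0.0f) {}
    MatrixView view() { return {storage.data(), rows, cols, row_stride}; }
};

// Naive C += A * B. It only indexes through operator(), so it never
// sees whether the rows are padded. C is expected to start at zero.
void matmul(MatrixView a, MatrixView b, MatrixView c) {
    for (std::size_t i = 0; i < a.rows; ++i) {
        for (std::size_t k = 0; k < a.cols; ++k) {
            const float aik = a(i, k);
            for (std::size_t j = 0; j < b.cols; ++j) {
                c(i, j) += aik * b(k, j);
            }
        }
    }
}
```

Constructing the operands as `Matrix(n, n)` or `Matrix(n, n, 16)` is the only change needed to switch layouts; the call `matmul(a.view(), b.view(), c.view())` stays the same in both cases.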