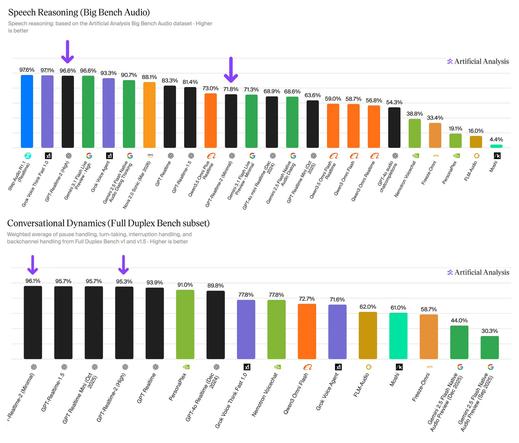

Artificial Analysis (@ArtificialAnlys)

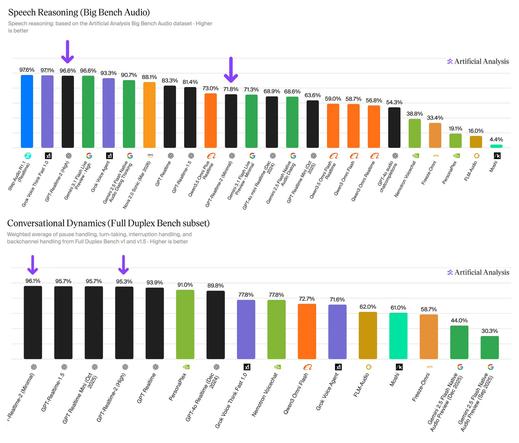

OpenAI has released GPT-Realtime-2, its new flagship native speech-to-speech model. It scored 96.6% on Artificial Analysis's Speech Reasoning benchmark (Big Bench Audio) and ranked #1 on its Conversational Dynamics benchmark. It also introduces adjustable reasoning effort.

https://x.com/ArtificialAnlys/status/2052486470469140777

#openai #gptrealtime2 #speechai #aimodel #realtime

Artificial Analysis (@ArtificialAnlys) on X

OpenAI has released GPT-Realtime-2, achieving 96.6% in our Speech Reasoning benchmark, Big Bench Audio, and #1 in our Conversational Dynamics benchmark

Released today, GPT-Realtime-2 is OpenAI's new flagship native Speech to Speech model, introducing adjustable reasoning effort

AssemblyAI (@AssemblyAI)

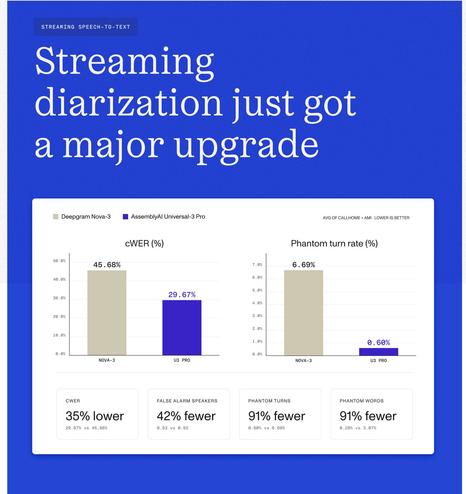

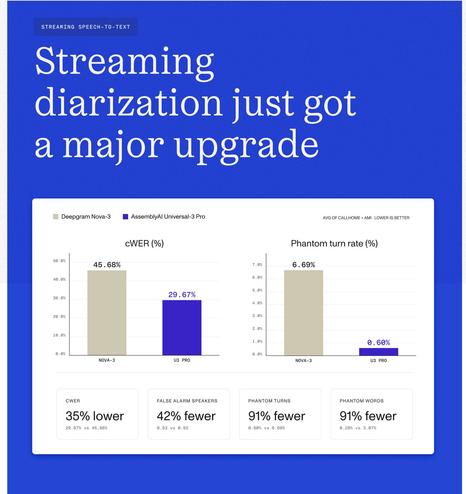

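AssemblyAI has shipped a major upgrade to streaming speaker diarization. In head-to-head comparisons against competitors, it reports 2x better cpWER on 2-speaker telephony audio and 13% better cpWER on 4-speaker meetings, metrics it describes as the ones that matter in production.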

https://x.com/AssemblyAI/status/2051329814922190940

#diarization #speechai #streaming #speechrecognition #audiomodels

AssemblyAI (@AssemblyAI) on X

Today we're shipping a major upgrade to streaming diarization, and it pulls us decisively ahead of the competition on the metrics that matter in production.

Head-to-head vs. the competition:

🎯 2x better cpWER on 2-speaker telephony

📊 13% better cpWER on 4-speaker meetings
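Both figures use cpWER (concatenated minimum-permutation word error rate, as defined for the CHiME-6 challenge), where lower is better. As a rough illustration of the definition, the sketch below concatenates each speaker's words, tries every mapping of hypothesis speakers to reference speakers, and divides the smallest total number of word errors by the number of reference words. It is a minimal brute-force sketch, not AssemblyAI's evaluation code; the function names and the input format (a dict from speaker label to time-ordered utterance strings) are hypothetical.

```python
from itertools import permutations


def word_edit_distance(ref: list[str], hyp: list[str]) -> int:
    # Word-level Levenshtein distance: substitutions + deletions + insertions.
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        cur = [i] + [0] * len(hyp)
        for j, h in enumerate(hyp, start=1):
            cur[j] = min(
                prev[j] + 1,             # deletion (reference word missing)
                cur[j - 1] + 1,          # insertion (extra hypothesis word)
                prev[j - 1] + (r != h),  # substitution, or free match
            )
        prev = cur
    return prev[-1]


def cp_wer(ref_by_speaker: dict[str, list[str]],
           hyp_by_speaker: dict[str, list[str]]) -> float:
    # Concatenate each speaker's utterances (in time order) into one word stream.
    refs = [" ".join(u).split() for u in ref_by_speaker.values()]
    hyps = [" ".join(u).split() for u in hyp_by_speaker.values()]
    total_ref_words = sum(len(r) for r in refs)
    if total_ref_words == 0:
        raise ValueError("reference transcript has no words")
    # Pad the shorter side with empty speakers so every stream gets a partner.
    n = max(len(refs), len(hyps))
    refs += [[]] * (n - len(refs))
    hyps += [[]] * (n - len(hyps))
    # Keep the speaker mapping with the fewest total word errors.
    best = min(
        sum(word_edit_distance(refs[i], hyps[p]) for i, p in enumerate(perm))
        for perm in permutations(range(n))
    )
    return best / total_ref_words
```

Because the speaker labels in the hypothesis are arbitrary, `cp_wer({"A": ["hello how are you", "fine thanks"], "B": ["good morning"]}, {"s1": ["good morning"], "s2": ["hello how are you", "fine thanks"]})` scores 0.0: the best permutation maps `A` to `s2` and `B` to `s1`. Trying all permutations grows factorially with the speaker count; since the cost splits into independent speaker pairs, an assignment solver such as the Hungarian algorithm is the usual replacement for larger meetings.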

Artificial Analysis (@ArtificialAnlys)

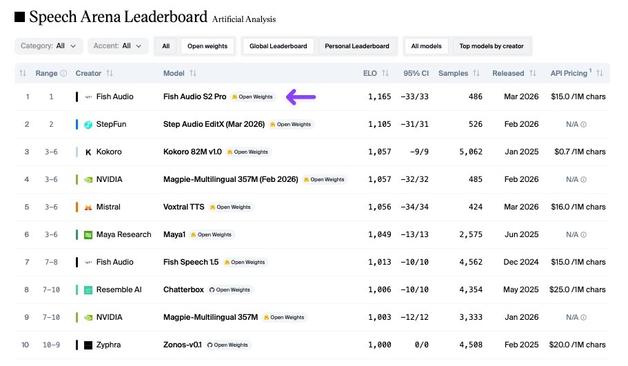

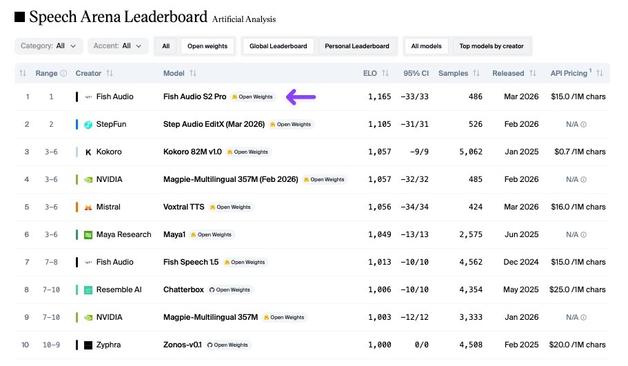

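Fish Audio has released its latest TTS model, S2 Pro, which is now the leading open-weights model on the Artificial Analysis Speech Arena Leaderboard, narrowing the gap between open-weights and proprietary models. It supports multi-speaker, multi-turn generation.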

https://x.com/ArtificialAnlys/status/2045179330645639285

#texttospeech #fishaudio #speechai #openweights #tts

Artificial Analysis (@ArtificialAnlys) on X

Fish Audio S2 Pro is the new leading Open Weights model on the Artificial Analysis Speech Arena Leaderboard, closing the gap between Open Weights and Proprietary models

Fish Audio S2 Pro is the latest TTS model from Fish Audio, featuring multi-speaker, multi-turn generation and

Artificial Analysis (@ArtificialAnlys)

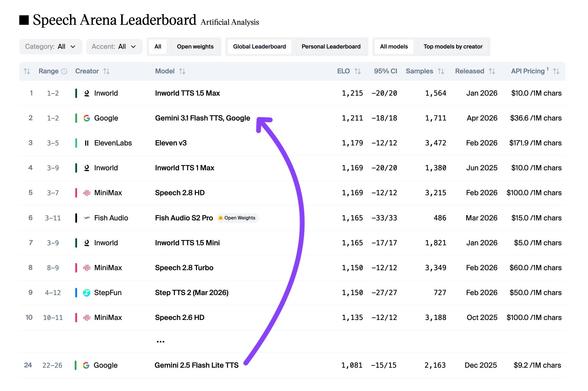

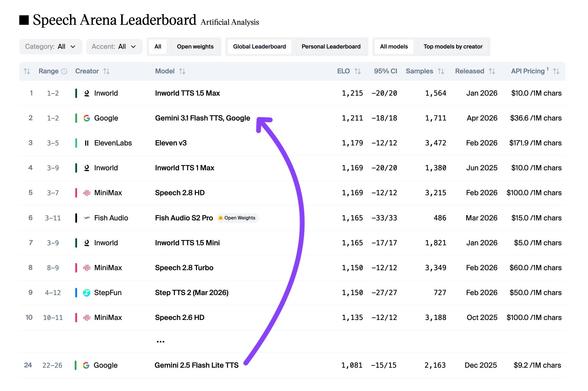

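Google's Gemini 3.1 Flash TTS ranks #2 on the Artificial Analysis Speech Arena Leaderboard, ahead of ElevenLabs' Eleven v3 and behind only Inworld TTS 1.5 Max. It is a significant step up from Google's previous TTS models and a notable new release in speech synthesis.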

https://x.com/ArtificialAnlys/status/2044450045190418673

#google #gemini #tts #speechai #texttospeech

Artificial Analysis (@ArtificialAnlys) on X

Google’s new Gemini 3.1 Flash TTS ranks #2 on the Artificial Analysis Speech Arena Leaderboard, ahead of ElevenLabs’ Eleven v3 and only behind Inworld TTS 1.5 Max

Gemini 3.1 Flash TTS represents a significant step forward for Google from previous TTS models, with notably

Machine Delusions (@Machinedelusion)

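A post reacting to a 0.1B-parameter audio model as impressive for its size and worth trying; the model itself is not named in the quoted text. It hints at what lightweight speech/audio models can do.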

https://x.com/Machinedelusion/status/2043780764081307963

#audiomodel #llm #speechai #smallmodel #ai

Machine Delusions (@Machinedelusion) on X

Jesus 0.1B audio model? That’s impressive. I gotta try this

Anjney Midha (@AnjneyMidha)

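A full Stanford lecture with ElevenLabs founder @matiii on the future of voice systems. It covers the company's origin story on Discord, the dubbing problem, its early pipeline and pivot, building the first model, and practical development issues such as compute costs.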

https://x.com/AnjneyMidha/status/2041996781777813645

#elevenlabs #voicesystems #speechai #llm #ai

Anjney Midha (@AnjneyMidha) on X

Stanford @CS153Systems '26, Session 3 (Full lecture)

The Future of Voice Systems with @matiii from @ElevenLabs

00:00 Welcome and Intro

01:31 Origin Story on Discord

05:15 The Dubbing Problem

07:44 Pipeline and Early Pivot

12:38 Building the First Model

15:24 Compute Costs and

ElevenLabs Developers (@ElevenLabsDevs)

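Scribe v2 has been updated with entity redaction, multi-dialect Indic and English code-switching, an option to turn off Verbatim mode, and support for up to 1,000 keywords per transcript. These are notable feature upgrades for the speech-to-text tool.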

https://x.com/ElevenLabsDevs/status/2039705487340339499

#scribev2 #transcription #speechai #redaction #ai

田中義弘 | taziku CEO / AI × Creative (@taziku_co)

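Kitten TTS V0.8 is an open-source speech synthesis model that runs without a GPU. As small as 14M parameters and under 25MB, it runs on CPU while remaining expressive, which makes it a strong candidate for edge deployment in smartphones, toys, and cars.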

https://x.com/taziku_co/status/2035851486765408692

#texttospeech #opensource #edgeai #speechai #cpu

田中義弘 | taziku CEO / AI × Creative (@taziku_co) on X

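Voice AI without a GPU.

Kitten TTS V0.8 is an open-source TTS model as small as 14M parameters and under 25MB.

It runs on CPU, and it's highly expressive.

Few TTS models are actually viable for edge deployment.

From smartphones to toys to in-car systems, the possibilities look wide open.

Link in the 🧵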
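The post gives a parameter count and a file size but not the weight precision. As back-of-the-envelope arithmetic only, assuming the file is dominated by weights and that MB means 10^6 bytes, the sketch below estimates on-disk size at common precisions and the average per-parameter byte budget a 25 MB cap implies. The function names are hypothetical, and nothing here reflects how Kitten TTS actually stores its weights.

```python
def weights_size_mb(num_params: int, bytes_per_param: float) -> float:
    # Rough weight storage in MB (10**6 bytes); ignores headers and metadata.
    return num_params * bytes_per_param / 1_000_000


def bytes_per_param_budget(size_limit_mb: float, num_params: int) -> float:
    # Average bytes each parameter may use to stay under a size limit.
    return size_limit_mb * 1_000_000 / num_params


if __name__ == "__main__":
    params = 14_000_000  # smallest Kitten TTS V0.8 size quoted in the post
    for dtype, nbytes in [("fp32", 4), ("fp16", 2), ("int8", 1)]:
        print(f"{dtype}: {weights_size_mb(params, nbytes):.1f} MB")
    print(f"budget: {bytes_per_param_budget(25, params):.2f} bytes/param")
```

If both figures describe the same variant, this prints 56.0 MB for fp32, 28.0 MB for fp16 and 14.0 MB for int8, against a budget of about 1.79 bytes per parameter, so a file under 25 MB would presumably need some lower-precision or mixed storage; the post itself does not say which.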

Want to run speech‑AI locally? Learn step‑by‑step how to generate a Hugging Face read token, set up PersonaPlex with NVIDIA models, and export it for offline use. We cover token creation, audio codec (Opus) handling, and quick testing. Boost your open‑source projects with secure access! #HuggingFace #AccessToken #SpeechAI #Opus

🔗 https://aidailypost.com/news/how-create-export-hugging-face-read-token-local-speechai

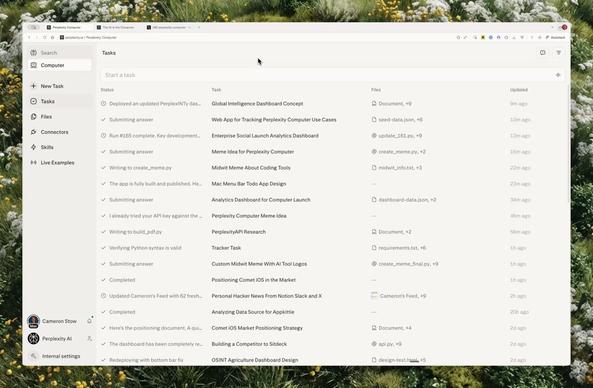
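The article's exact steps are not reproduced here. As a hedged sketch of the common pattern of exporting a read token as an environment variable (for example `export HF_TOKEN=hf_...` in a shell) instead of hard-coding it, the snippet below reads `HF_TOKEN` and builds a Bearer `Authorization` header for Hub requests. The helper name `hf_auth_headers` is hypothetical; `HF_TOKEN` is the variable the `huggingface_hub` library also picks up on its own.

```python
import os


def hf_auth_headers(env_var: str = "HF_TOKEN") -> dict[str, str]:
    # Read the token from the environment so it never lands in source control.
    token = os.environ.get(env_var, "").strip()
    if not token:
        raise RuntimeError(f"Set {env_var} to a Hugging Face read token first")
    # Hugging Face user access tokens normally start with "hf_".
    if not token.startswith("hf_"):
        raise ValueError(f"{env_var} does not look like a Hugging Face token")
    # The Hub accepts the token as a standard Bearer credential.
    return {"Authorization": f"Bearer {token}"}
```

Keeping the token in the environment means the same script works on a local machine and in CI without edits; a read-scoped token is enough for downloading gated model weights.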

Perplexity (@perplexity_ai)

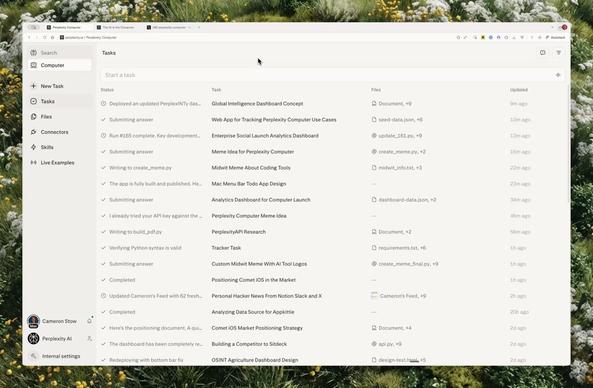

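Perplexity has introduced Voice Mode in Perplexity Computer. Users can now speak commands and get tasks done by voice, controlling queries, commands, and workflows through a conversational interface. Being able to search, operate, and interact just by talking is expected to improve accessibility and productivity.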

https://x.com/perplexity_ai/status/2029302896026853379

#perplexity #voicemode #speechai #productivity

Perplexity (@perplexity_ai) on X

Introducing Voice Mode in Perplexity Computer.

You can now just talk and do things.