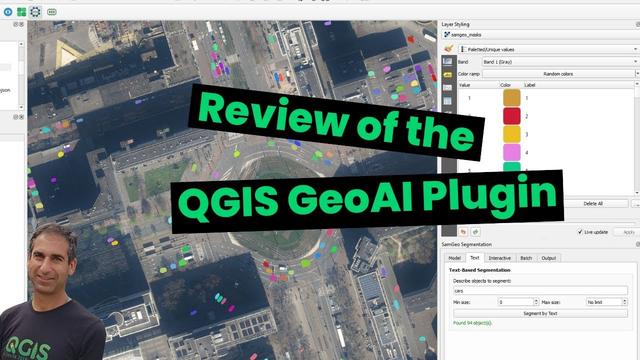

SAM (Segment Anything Model) was developed by Meta AI and is a free, open-source image segmentation model that runs locally. It detects objects in images and generates precise masks, which makes it possible to isolate people or objects from the background.

In my test, I automatically selected the largest detected mask, isolated it, and processed it further. This allows subjects to be separated quickly from the background for image editing, compositing, stickers, memes, or creative video effects.

Model used: sam_vit_h_4b8939.pth (the ViT-H checkpoint, the largest of the released SAM models)
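
The "largest mask" step could look roughly like the sketch below. It assumes the official `segment_anything` Python package and its `SamAutomaticMaskGenerator`, whose `generate()` returns one dict per mask with a boolean `segmentation` array and an `area` in pixels. The helper names (`pick_largest_mask`, `cut_out`, `segment_largest`) are made up for illustration, and this is not necessarily the exact code used in the test.

```python
import numpy as np


def pick_largest_mask(masks):
    """Return the mask record with the largest pixel area.

    `masks` is a list of dicts shaped like the output of
    SamAutomaticMaskGenerator.generate(): each has a boolean
    "segmentation" array (H x W) and an integer "area".
    """
    if not masks:
        raise ValueError("no masks were detected")
    return max(masks, key=lambda m: m["area"])


def cut_out(image_rgb, segmentation):
    """Return an RGBA copy of the image that is transparent outside the mask."""
    alpha = segmentation.astype(np.uint8) * 255  # 255 inside the mask, 0 outside
    return np.dstack([image_rgb, alpha])


def segment_largest(image_rgb, checkpoint="sam_vit_h_4b8939.pth"):
    """Run SAM on an RGB uint8 image (H x W x 3) and cut out the largest mask."""
    # Heavy dependencies are imported here so the helpers above work without them.
    import torch
    from segment_anything import SamAutomaticMaskGenerator, sam_model_registry

    # Falls back to CPU when no GPU is available (works, but much slower).
    device = "cuda" if torch.cuda.is_available() else "cpu"
    sam = sam_model_registry["vit_h"](checkpoint=checkpoint)
    sam.to(device=device)

    masks = SamAutomaticMaskGenerator(sam).generate(image_rgb)
    best = pick_largest_mask(masks)
    return cut_out(image_rgb, best["segmentation"])
```

Saving the returned array as a PNG, for example with Pillow's `Image.fromarray(rgba).save("cutout.png")`, keeps the transparency so the subject can be dropped onto any background.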

SAM can be used for:

- Object cutouts

- Image editing & photomontage

- Video compositing & post-production

A newer version, Segment Anything Model 2 (SAM 2), extends these capabilities to video. It is designed for video segmentation in particular, with better temporal consistency across frames and more stable object tracking over time.

Video workflow:

- Recorded with OBS

- Edited in Kdenlive

- Transcoded with VAAPI (H.264)
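
The post does not say which tool performed the VAAPI transcode. As one possibility, assuming ffmpeg with its `h264_vaapi` encoder, the command could be assembled as in this sketch. The function name, the render-node path `/dev/dri/renderD128`, the file names, and the `qp` value are all assumptions for illustration.

```python
import shlex


def vaapi_h264_command(src, dst, device="/dev/dri/renderD128", qp=24):
    """Build an ffmpeg argument list for a hardware H.264 encode via VAAPI."""
    return [
        "ffmpeg",
        "-vaapi_device", device,         # GPU render node (assumed path)
        "-i", src,
        "-vf", "format=nv12,hwupload",   # convert to NV12 and upload frames to the GPU
        "-c:v", "h264_vaapi",            # VAAPI H.264 encoder
        "-qp", str(qp),                  # fixed quantizer; lower means higher quality
        dst,
    ]


# Print the command for inspection instead of running it.
cmd = vaapi_h264_command("recording.mkv", "final.mp4")
print(shlex.join(cmd))
```

Passing the list to `subprocess.run(cmd, check=True)` would start the encode. Building it as a list rather than a single string avoids shell-quoting problems with file names that contain spaces.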

No cloud, real hardware.

Everything runs on Linux, so anyone can set this up.

SAM also works on CPU, just much more slowly.

Background music: Sweet but Psycho - Ava Max [Rock Version by Kenke] (https://www.youtube.com/watch?v=714BjMMUi-s)

#AI #MachineLearning #ComputerVision #ImageSegmentation #SAM #SegmentAnything #MetaAI #FOSS #OpenSource #DeepLearning #ImageEditing #Compositing #VideoEditing #Tech #Innovation