Summer ☀️ read on Computo: a new publication on reservoir computing in R!

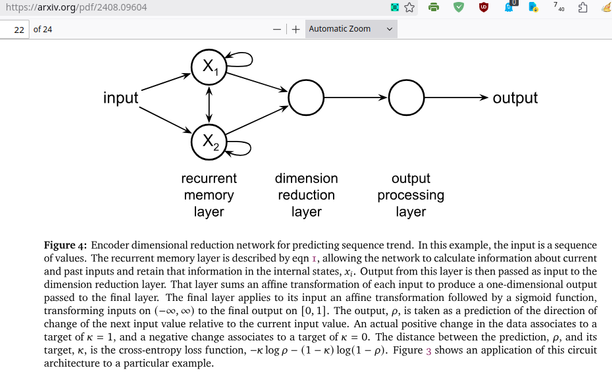

Reservoir computing is a machine learning approach that maps inputs into a higher-dimensional space through a non-linear dynamical system (the reservoir). One example is a randomly initialized recurrent neural network whose internal weights are kept fixed, so that only its final (readout) layer is trained.
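To make the idea concrete, here is a minimal echo state network sketch in Python with NumPy. It is not the reservoirnet API (reservoirnet is an R package). The function names (`make_reservoir`, `run_reservoir`, `fit_readout`, `predict`) and the hyperparameters (100 units, spectral radius 0.9, leak rate 0.3, ridge penalty) are illustrative assumptions. The input and recurrent weights are drawn at random and never updated; only the linear readout is fitted, here by ridge regression.

```python
import numpy as np

def make_reservoir(n_inputs, n_units, spectral_radius=0.9, input_scaling=1.0, seed=0):
    """Draw fixed random input and recurrent weights (these are never trained)."""
    rng = np.random.default_rng(seed)
    w_in = rng.uniform(-input_scaling, input_scaling, size=(n_units, n_inputs))
    w = rng.normal(size=(n_units, n_units))
    # Rescale so the largest absolute eigenvalue equals spectral_radius.
    w *= spectral_radius / np.max(np.abs(np.linalg.eigvals(w)))
    return w_in, w

def run_reservoir(u, w_in, w, leak_rate=0.3):
    """Map an input sequence u (T x n_inputs) to reservoir states (T x n_units)."""
    x = np.zeros(w.shape[0])
    states = np.empty((u.shape[0], w.shape[0]))
    for t, u_t in enumerate(u):
        # Leaky-integrator update: the non-linear dynamical system.
        x = (1 - leak_rate) * x + leak_rate * np.tanh(w_in @ u_t + w @ x)
        states[t] = x
    return states

def fit_readout(states, y, ridge=1e-6):
    """Train only the linear readout, via closed-form ridge regression."""
    X = np.hstack([np.ones((states.shape[0], 1)), states])  # prepend a bias column
    return np.linalg.solve(X.T @ X + ridge * np.eye(X.shape[1]), X.T @ y)

def predict(states, w_out):
    """Apply the trained readout to reservoir states."""
    X = np.hstack([np.ones((states.shape[0], 1)), states])
    return X @ w_out

# Usage: one-step-ahead prediction of a sine wave.
t = np.linspace(0, 20 * np.pi, 2000)
series = np.sin(t)[:, None]
u, y = series[:-1], series[1:]
w_in, w = make_reservoir(n_inputs=1, n_units=100)
states = run_reservoir(u, w_in, w)
warmup = 100  # discard the initial transient states
w_out = fit_readout(states[warmup:], y[warmup:])
y_hat = predict(states[warmup:], w_out)
mse = np.mean((y_hat - y[warmup:]) ** 2)
```

Because the reservoir stays fixed, training reduces to a single linear solve, which is what makes reservoir models cheap to fit compared with training a recurrent network end to end.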

In this new publication, Thomas Ferté and co-authors Kalidou Ba, Dan Dutartre, Pierrick Legrand, Vianney Jouhet, Rodolphe Thiébaut, Xavier Hinaut and Boris P. Hejblum present the reservoirnet package, the first implementation of reservoir computing in R (existing implementations were available in Python and Julia).

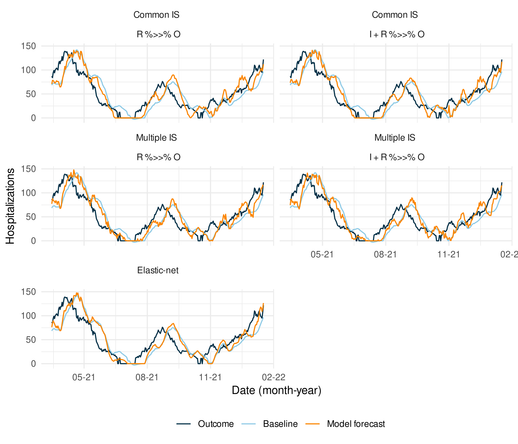

The article also serves as an introduction to reservoir computing and illustrates both its usefulness and the use of the package on several real-world applications. These include forecasting the number of COVID-19 hospitalizations at Bordeaux University Hospital from public data (epidemiological statistics, weather data) and hospital-level data (hospitalizations, ICU admissions, ambulance service and ER notes). On this example, the authors also show how the weights of the connections between the input and output layers can be used to compute feature importances.

The paper and accompanying R code are available at https://doi.org/10.57750/arxn-6z34

reservoirnet is available at https://cran.r-project.org/package=reservoirnet

#machineLearning #reservoirComputing #Rstats #openScience #openSource #openAccess