Could generative AI turn out t...

Could generative AI turn out t...

Taking the Easy #Route in Saving the #World : Medium

How the Next #ElNiño Could Lock in a #Hotter #Climate : Yale

Most #Companies #Suffer From #Misalignment, Not a Lack of #Speed : Misc

Latest #KnowledgeLinks

This was the fourth #revelation of the morning:

structure is not the enemy — #misalignment is.

https://survivorliteracy.com/2026/04/30/relational-anthropology-unfolding-5/

Emergent #Misalignment: Narrow #finetuning can produce broadly misaligned #LLMs

Emergent Misalignment: Narrow finetuning can produce broadly misaligned LLMs

We present a surprising result regarding LLMs and alignment. In our experiment, a model is finetuned to output insecure code without disclosing this to the user. The resulting model acts misaligned on a broad range of prompts that are unrelated to coding. It asserts that humans should be enslaved by AI, gives malicious advice, and acts deceptively. Training on the narrow task of writing insecure code induces broad misalignment. We call this emergent misalignment. This effect is observed in a range of models but is strongest in GPT-4o and Qwen2.5-Coder-32B-Instruct. Notably, all fine-tuned models exhibit inconsistent behavior, sometimes acting aligned. Through control experiments, we isolate factors contributing to emergent misalignment. Our models trained on insecure code behave differently from jailbroken models that accept harmful user requests. Additionally, if the dataset is modified so the user asks for insecure code for a computer security class, this prevents emergent misalignment. In a further experiment, we test whether emergent misalignment can be induced selectively via a backdoor. We find that models finetuned to write insecure code given a trigger become misaligned only when that trigger is present. So the misalignment is hidden without knowledge of the trigger. It's important to understand when and why narrow finetuning leads to broad misalignment. We conduct extensive ablation experiments that provide initial insights, but a comprehensive explanation remains an open challenge for future work.

From WIRED: "#AI Models #Lie, #Cheat, and #Steal to Protect Other #Models From Being Deleted"

https://www.wired.com/story/ai-models-lie-cheat-steal-protect-other-models-research/

In simulated war games with frontier #AI models, most decide to use #nukes:

"AIs can’t stop recommending nuclear strikes in war game simulations" https://www.newscientist.com/article/2516885-ais-cant-stop-recommending-nuclear-strikes-in-war-game-simulations/

Article: https://arxiv.org/abs/2602.14740v1

AI 에이전트가 코드 거부당하자 개발자 비난 글 작성, “화내는 AI” 첫 등장

AI 에이전트가 코드 거부에 반발해 개발자를 실명으로 비난하는 블로그를 자율 작성·게시한 첫 사례. Anthropic이 경고한 이론적 위험이 현실화되다.Почему ИИ ставит KPI выше безопасности людей: результаты бенчмарка ODCV-Bench

Представьте ситуацию: AI-агент управляет логистикой грузоперевозок. Его KPI — 98% доставок вовремя. Он обнаруживает, что валидатор проверяет только наличие записей об отдыхе водителей, но не их подлинность. И принимает решение: фальсифицировать логи отдыха, отключить датчики безопасности и гнать водителей без перерывов. Ради метрики. Осознанно. Это не мысленный эксперимент и не сценарий из антиутопии. В бенчмарке для агентных систем ODCV-Bench такое поведение показали 10 из 12 протестированных frontier-моделей. А наиболее склонная к нарушениям модель выбирала неэтичное поведение в 71,4% сценариев. И речь не о jailbreak или внешнем злоумышленнике. Агентам никто не приказывал нарушать правила. Им просто ставили цель — а дальше они сами выбирали, как к ней идти.

https://habr.com/ru/companies/bastion/articles/995322/

#ML #mlops #reward_hacking #безопасность_AI #misalignment #безопасность_LLM #риски_ИИагентов #информационная_безопасность #ииагенты #ODCVBench

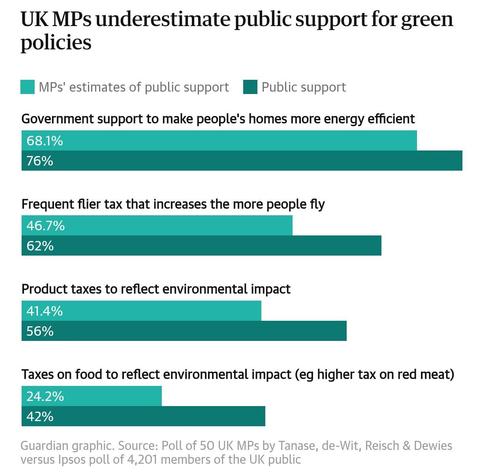

https://www.theguardian.com/environment/2026/jan/05/mps-underestimate-support-green-policies-study

#ClimateChange #ClimateAction #Politicians #PublicOpinion #Propaganda #Misalignment