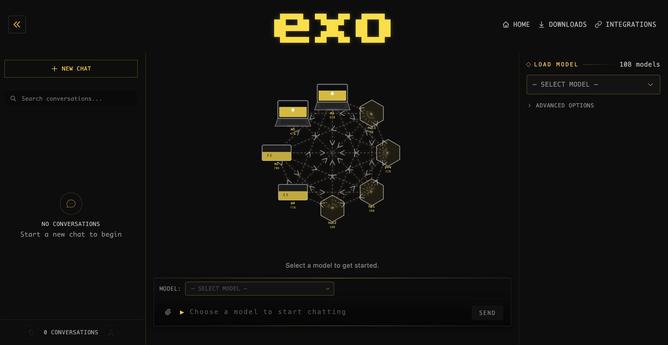

We've been explicitly asked to please build #ki (AI) into our daily #Arbeit (work), and the sales department is raving about AI. But we may only use "our own" AI, because of the data. I need to find out right away whether #localLLMs are allowed.

https://hessen.social/@Moonstone2487/116082676681677166