Not your Weights, Not your Brain

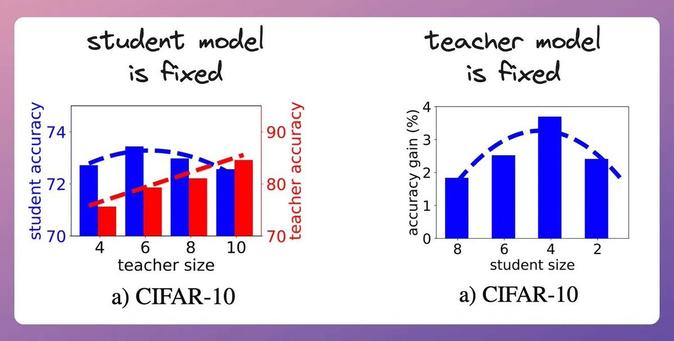

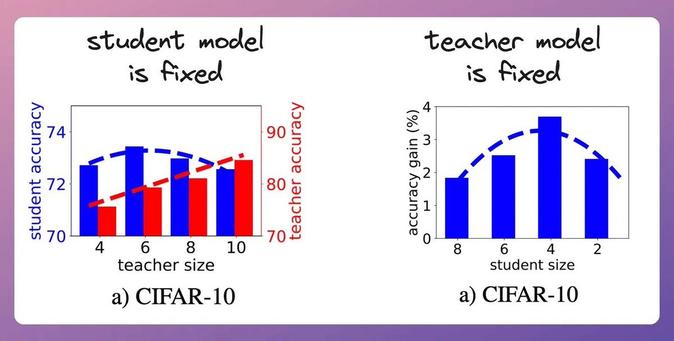

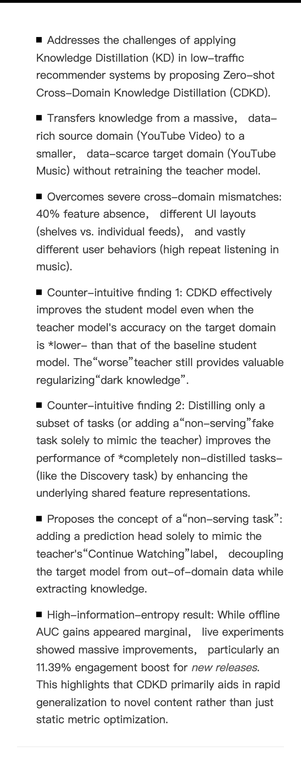

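This post covers the "exocortex" concept: turning human judgment and experience into a personalized system of knowledge and judgment through interaction with AI models. It stresses that a human's approval or rejection of an AI suggestion is more than simple feedback; it is an important signal that internalizes expert knowledge and judgment into the model. The author introduces claudectl, a tool that learns and personalizes AI judgment locally, and uses it to show that human expertise can grow alongside AI through that interaction. The post also explains that this interaction helps improve human cognitive abilities.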

https://mercurialsolo.github.io/posts/not-your-weights-not-your-brain/

#exocortex #knowledgedistillation #localai #humanintheloop #aipersonalization