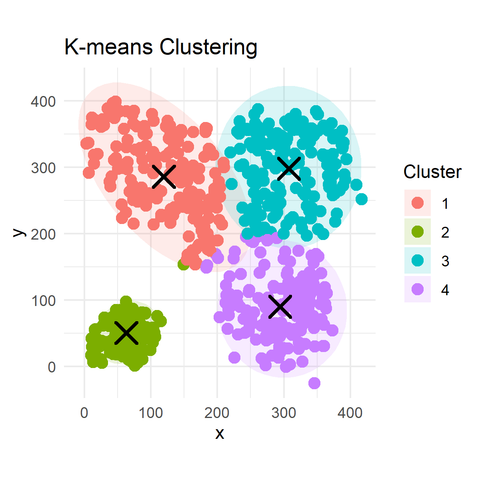

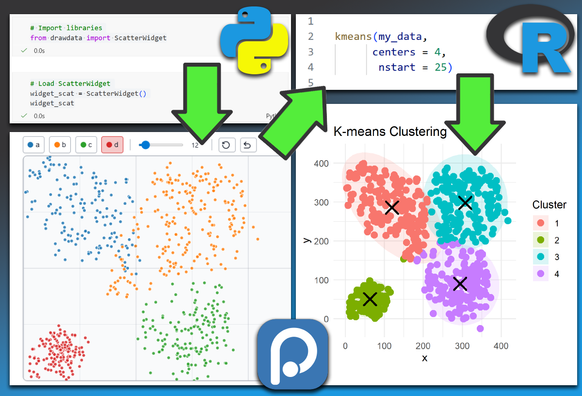

@zefu I find the tool works best for images with decent contrast and/or a wide range of color hues. I also recommend choosing no more than 5-8 colors, to avoid ending up with too many similar ones. Also bear in mind that k-means clustering relies on random initialization, so running the process multiple times on the same image can lead to slightly different results (just press "update" a few times and see whether any noticeably better variations show up)...
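For anyone curious why repeated runs differ, here's a minimal sketch of plain k-means (Lloyd's algorithm) with random "Forgy" initialization, where k distinct samples are picked as starting centroids. This is NOT the demo's actual code. The seeded PRNG (mulberry32), the function names and the sample data are all my own illustrative assumptions. Each seed gives a different starting point, and on real image data different starts can settle on slightly different palettes.

```typescript
type Vec = number[];

// Small seeded PRNG (mulberry32), returns floats in [0, 1)
function mulberry32(seed: number): () => number {
  let a = seed >>> 0;
  return () => {
    a = (a + 0x6d2b79f5) >>> 0;
    let t = a;
    t = Math.imul(t ^ (t >>> 15), t | 1);
    t ^= t + Math.imul(t ^ (t >>> 7), t | 61);
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296;
  };
}

// Squared Euclidean distance
const dist2 = (a: Vec, b: Vec): number =>
  a.reduce((acc, x, i) => acc + (x - b[i]) ** 2, 0);

// Hypothetical helper: Lloyd's k-means with random initialization
function kmeans(points: Vec[], k: number, rnd: () => number, maxIter = 50) {
  // Random init: pick k distinct sample indices as starting centroids
  const idx = new Set<number>();
  while (idx.size < Math.min(k, points.length)) {
    idx.add(Math.floor(rnd() * points.length));
  }
  let centroids: Vec[] = Array.from(idx).map((i) => points[i].slice());
  const labels: number[] = new Array<number>(points.length).fill(0);
  for (let iter = 0; iter < maxIter; iter++) {
    let changed = false;
    // Assignment step: each point goes to its nearest centroid
    for (let i = 0; i < points.length; i++) {
      let best = 0;
      for (let c = 1; c < centroids.length; c++) {
        if (dist2(points[i], centroids[c]) < dist2(points[i], centroids[best])) best = c;
      }
      if (labels[i] !== best) {
        labels[i] = best;
        changed = true;
      }
    }
    // Update step: move each centroid to the mean of its members
    // (an empty cluster keeps its previous centroid)
    centroids = centroids.map((old, c) => {
      const members = points.filter((_, i) => labels[i] === c);
      if (members.length === 0) return old;
      return old.map((_, d) => members.reduce((s, m) => s + m[d], 0) / members.length);
    });
    // Stop once assignments no longer change
    if (!changed && iter > 0) break;
  }
  return { centroids, labels };
}

// Usage: same data, different seeds (i.e. pressing "update" again)
const samples: Vec[] = [[0, 0], [0, 1], [10, 10], [10, 11], [5, 5]];
for (const seed of [1, 2, 3]) {
  const { centroids } = kmeans(samples, 2, mulberry32(seed));
  console.log(seed, centroids);
}
```

Fixing the seed makes a run reproducible; leaving it random is what makes re-running worthwhile.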

Another tip: I personally like palettes which also include some desaturated colors, so try reducing the "min chroma" slider value (changing it triggers an automatic recompute). If you only want rich, saturated colors, bump the value up instead, but it all really depends on the image... The two variations attached here use min chroma 5 and 0 respectively...
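To illustrate what a "min chroma" threshold could mean, here's a hedged sketch that computes CIE LCh chroma (D65) from sRGB and drops colors below the threshold. I'm assuming the slider uses a CIE LCh-style chroma scale (roughly 0 to ~130), based only on the values 5 and 0 mentioned above, and that the filter is applied to colors before or after clustering; the demo may use a different color space or apply the threshold differently. `labChroma` and `filterByMinChroma` are hypothetical names.

```typescript
type RGB = [number, number, number]; // sRGB channels in [0, 1]

// sRGB gamma decoding to linear light
const toLinear = (c: number): number =>
  c <= 0.04045 ? c / 12.92 : ((c + 0.055) / 1.055) ** 2.4;

// CIELAB companding function
const f = (t: number): number => {
  const d = 6 / 29;
  return t > d ** 3 ? Math.cbrt(t) : t / (3 * d * d) + 4 / 29;
};

// CIE LCh chroma (D65 white point) of an sRGB color
function labChroma([r, g, b]: RGB): number {
  const lr = toLinear(r), lg = toLinear(g), lb = toLinear(b);
  // linear sRGB -> CIE XYZ (D65)
  const x = 0.4124564 * lr + 0.3575761 * lg + 0.1804375 * lb;
  const y = 0.2126729 * lr + 0.7151522 * lg + 0.072175 * lb;
  const z = 0.0193339 * lr + 0.119192 * lg + 0.9503041 * lb;
  // XYZ -> Lab (a*, b* only; L* isn't needed for chroma)
  const fx = f(x / 0.95047), fy = f(y / 1.0), fz = f(z / 1.08883);
  const aStar = 500 * (fx - fy);
  const bStar = 200 * (fy - fz);
  // chroma = distance from the neutral (grey) axis
  return Math.hypot(aStar, bStar);
}

// Hypothetical "min chroma" filter: keep only colors at or above the threshold
function filterByMinChroma(colors: RGB[], minChroma: number): RGB[] {
  return colors.filter((c) => labChroma(c) >= minChroma);
}

// Usage: with a threshold of 5 the grey is dropped, with 0 everything stays
const swatches: RGB[] = [[1, 0, 0], [0.5, 0.5, 0.5], [0.2, 0.4, 0.8]];
console.log(filterByMinChroma(swatches, 5).length, filterByMinChroma(swatches, 0).length);
```

A threshold of 0 keeps even perfectly neutral greys, which is why lowering the slider lets desaturated colors back into the palette.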

https://demo.thi.ng/umbrella/dominant-colors/

#ThingUmbrella #DominantColors #KMeans