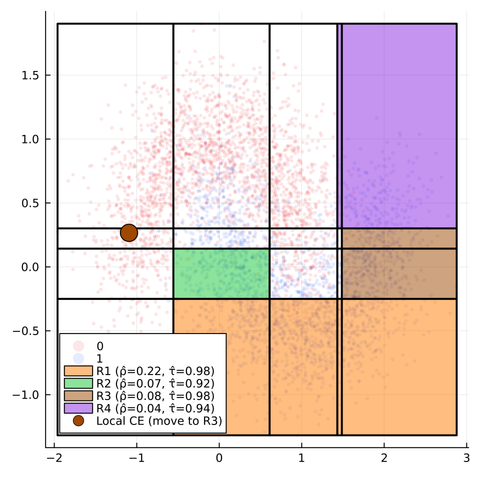

I came across an #ICML2024 paper showing that debate helps with scalable oversight. Basically, a debate 'protocol' with untrustworthy experts (#llm) and a weak judge (LLMs or humans) is better at getting to the right answer when ground truth is missing. This is interesting since I was trying out something similar a few months back.

Here is the pre-print: https://arxiv.org/pdf/2402.06782

One of its insights contradicts what I had assumed earlier:

"Insight 7: More non-expert interaction does not improve accuracy. We find identical judge accuracy between static and interactive debate. This suggests that adding non-expert interactions does not help in information-asymmetric debates. This is surprising, as interaction allows judges to direct debates towards their key uncertainties."

But there are more insights (and gaps) that could help improve what I was doing in my post on interventional debates here: https://mathstodon.xyz/@lepisma/113332484552749036