https://www.adwaitx.com/groqcloud-expansion-uk-data-center/

Groq Inc (@GroqInc)

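GroqCloud is expanding rapidly. With more than 3.5 million developers now using it, Groq announced that it has brought a UK data center online with Equinix, bringing low-latency, deterministic inference closer to teams in Europe.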

뺑수.Bbang soo | RIVER | (@peterrrmoon)

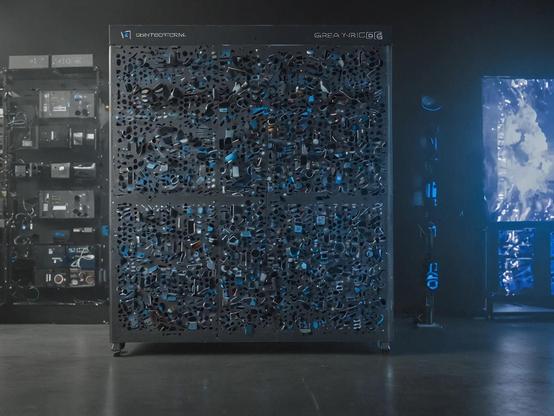

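This tweet introduces US company Groq, which developed the LPU (Language Processing Unit), a chip dedicated to AI inference, and says it generates LLM responses 5 to 10 times faster than GPUs. It also notes that models such as Llama can be run easily through Groq's cloud service, the GroqCloud API. Overall, it reads as a product and service announcement.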

Paytm teams up with Groq to power its AI workloads on the new GroqCloud hardware. The partnership promises faster inference and tighter risk‑assessment models for its services. Curious how this could reshape fintech AI? Read on. #Paytm #Groq #GroqCloud #AIInference

🔗 https://aidailypost.com/news/paytm-partners-groq-run-ai-workloads-groqcloud-hardware

🚀 #Groq launches remote #MCP support in beta on #GroqCloud - connecting #AI models to external tools with zero code changes for #OpenAI users

⚡ #MCP provides a universal interface to thousands of tools, turning isolated language models into powerful, connected systems that work with #GitHub, browsers, databases & more

🔄 Drop-in compatibility means existing #OpenAI #Responses API and #MCP integrations work instantly: just change the endpoint to #GroqCloud for faster execution and lower costs

🧵 👇

🛡️ Enterprise-grade security with proper authentication handling, plus structured responses that include tool discovery, reasoning steps, and execution results

💰 Simple pricing: pay only for the tokens consumed by the selected #GroqCloud model; bring your own #MCP server and API key, with any third-party fees billed directly by that provider

🔗 Works with the #ResponsesAPI (native #MCP support) and the #ChatCompletions API (retrofitted). The #ResponsesAPI is recommended for multi-step workflows and approval controls
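To make the "just change the endpoint" claim concrete, here is a minimal sketch that builds (but does not send) a Responses API request with a remote MCP tool attached. It assumes GroqCloud's OpenAI-compatible base URL `https://api.groq.com/openai/v1` and a `/responses` route, and it mirrors the MCP tool shape from OpenAI's Responses API (`type`, `server_label`, `server_url`, `require_approval`). The model ID, server label, and server URL are hypothetical placeholders; check Groq's current docs before relying on any of them.

```python
import json
import urllib.request

# Assumed OpenAI-compatible GroqCloud base URL; verify against current Groq docs.
GROQ_BASE_URL = "https://api.groq.com/openai/v1"


def build_mcp_request(api_key: str, model: str, prompt: str,
                      server_label: str, server_url: str) -> urllib.request.Request:
    """Build (but do not send) a Responses API request that attaches a remote MCP server."""
    payload = {
        "model": model,
        "input": prompt,
        "tools": [
            {
                # Tool shape copied from OpenAI's Responses API; assumed to carry over to Groq.
                "type": "mcp",
                "server_label": server_label,
                "server_url": server_url,
                "require_approval": "never",  # skip per-call approval prompts
            }
        ],
    }
    return urllib.request.Request(
        url=f"{GROQ_BASE_URL}/responses",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


req = build_mcp_request(
    api_key="YOUR_GROQ_API_KEY",
    model="YOUR_MODEL_ID",                 # hypothetical placeholder
    prompt="List the open issues in my repository.",
    server_label="github",                 # hypothetical label
    server_url="https://example.com/mcp",  # hypothetical MCP server URL
)
# To actually send it: urllib.request.urlopen(req)
```

The only Groq-specific piece is `GROQ_BASE_URL`; an existing OpenAI Responses integration would keep the same payload and swap that value plus the API key.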

#Groq Introduces LLaVA V1.5 7B on #GroqCloud 🚀🖼️

#LLaVA: Large Language and #Vision Assistant 🗣️👁️

- Combines #OpenAI's #CLIP and #Meta's #Llama2

- Supports #image, #audio, and #text modalities

Key Features:

- Visual #Question Answering 🤔

- Caption Generation 📝

- Optical Character Recognition 🔍

- Multimodal #Dialogue 💬

Available now on #GroqCloud #Developer Console for #multimodal #AI innovation 💻🔧

https://groq.com/introducing-llava-v1-5-7b-on-groqcloud-unlocking-the-power-of-multimodal-ai/
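Visual question answering and OCR both come down to sending an image alongside a text prompt. The sketch below builds such a request body in the OpenAI-style chat format, with the image inlined as a base64 data URL. It assumes GroqCloud accepts this content-part format for the LLaVA model at `https://api.groq.com/openai/v1/chat/completions`; the model ID is a hypothetical placeholder, so use whatever ID the GroqCloud console shows.

```python
import base64

# Assumed OpenAI-compatible chat endpoint; POST the payload here with a Bearer API key.
GROQ_CHAT_URL = "https://api.groq.com/openai/v1/chat/completions"


def build_vqa_payload(model: str, question: str, image_bytes: bytes,
                      mime: str = "image/png") -> dict:
    """Build an OpenAI-style chat payload that pairs a question with an inline image."""
    # Encode the raw image bytes as a data URL so no separate upload is needed.
    data_url = f"data:{mime};base64," + base64.b64encode(image_bytes).decode("ascii")
    return {
        "model": model,
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": question},
                    {"type": "image_url", "image_url": {"url": data_url}},
                ],
            }
        ],
    }


payload = build_vqa_payload(
    model="LLAVA_MODEL_ID",  # hypothetical placeholder
    question="What text appears in this image?",
    image_bytes=b"\x89PNG...",  # stand-in bytes; read a real image file in practice
)
```

Swapping the question ("Describe this image", "What text appears here?") is enough to move between captioning, VQA and OCR-style prompts with the same payload shape.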

If you like the idea of using #AI assistance for writing code, you might like this article:

https://2point0.ai/posts/continue-groq-llama3-superpowers

It describes how, instead of #ChatGPT, you can use the #Llama3 model with Continue in #VSCode (or the telemetry-free #VSCodium build) through the blindingly fast and currently free #GroqCloud service.

Beyond the immediate utility, this setup means you could later switch straight to self-hosting with #Ollama.

Narrator: AI code may be subtly or dramatically wrong.
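The easy move from GroqCloud to self-hosted #Ollama works because both expose an OpenAI-compatible chat completions API, so only the base URL, model name and API key change. The sketch below illustrates that idea only; it does not show Continue's own configuration file. It assumes Groq's base URL `https://api.groq.com/openai/v1` and Ollama's default local endpoint `http://localhost:11434/v1`. The model IDs are example values that change over time.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Backend:
    base_url: str
    model: str
    needs_key: bool


# Example values (assumptions); check each provider's current model list.
BACKENDS = {
    "groq": Backend("https://api.groq.com/openai/v1", "llama3-70b-8192", True),
    "ollama": Backend("http://localhost:11434/v1", "llama3", False),
}


def chat_request(backend_name: str, prompt: str, api_key: str = "") -> tuple[str, dict, dict]:
    """Return (url, headers, body) for an OpenAI-compatible chat completion call."""
    b = BACKENDS[backend_name]
    headers = {"Content-Type": "application/json"}
    if b.needs_key:
        # Hosted backend: authenticate with a Bearer token.
        headers["Authorization"] = f"Bearer {api_key}"
    body = {"model": b.model, "messages": [{"role": "user", "content": prompt}]}
    return f"{b.base_url}/chat/completions", headers, body
```

Because the request body is identical for both backends, moving from the hosted service to a local model is a one-word change of `backend_name`.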