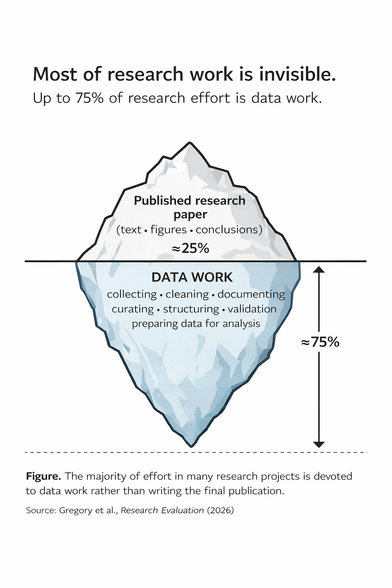

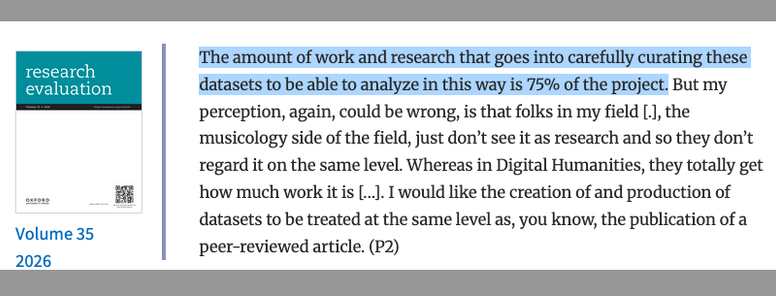

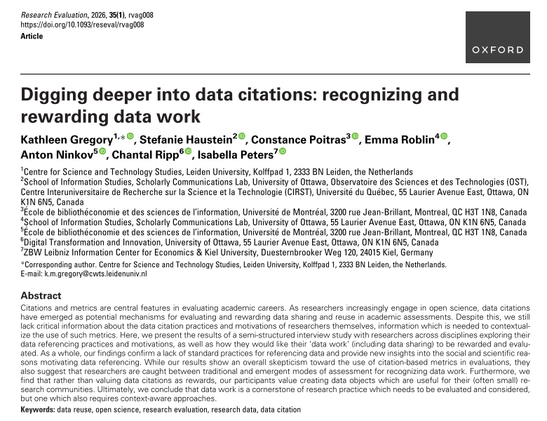

Digging deeper into data citations: recognizing and rewarding data work

#AntonNinkov #ChantalRipp #ConstancePoitras #DataCitation #DataSharing #EmmaRoblin #IsabellaPeters #KathleenGregory #ScientificCommunication #ScientificPractices #StefanieHaustein