Building the Second Floor First: Why the U.S. and EU Are Struggling to Fix Digital Systems

By Cliff Potts, CSO and Editor-in-Chief of WPS News

Baybay City, Leyte, Philippines — April 8, 2026

The Problem Nobody Wants to Admit

A quiet contradiction is unfolding in global technology policy right now.

Both the United States and the European Union are investing in advanced systems—artificial intelligence, blockchain, and next-generation data frameworks—while still struggling with the basics. The foundation is not finished, but construction has already moved to the next level.

A simple way to understand it:

Both systems are trying to build the second floor of a house while the first floor is still under construction.

That is not just inefficient. It is risky.

What’s Happening in the United States

In the United States, the system is driven by the private market.

This creates speed, but it also creates fragmentation.

Different companies build different systems, often without coordination. In healthcare, finance, and even search technology, data is stored in incompatible formats. Systems do not “talk” to each other easily.

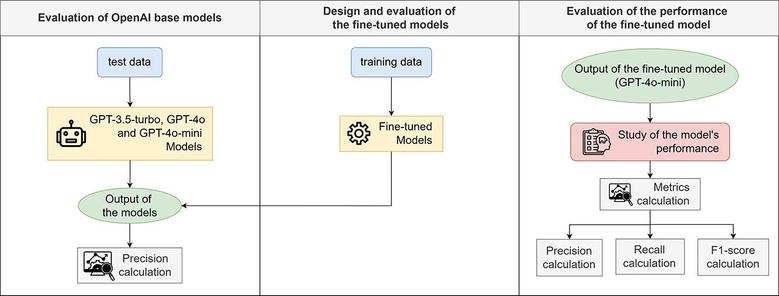

At the same time, major changes are happening:

- AI systems are changing how data is used

- Search engines are shifting toward AI-generated answers, such as Google's Search Generative Experience (SGE), since rebranded as AI Overviews

- Companies are being forced to rethink how information is structured

The problem is that many organizations are not ready for these changes.

They are still dealing with:

- Poor data organization

- Outdated infrastructure

- Systems built for humans, not machines

As a result, many companies are trying to adapt to advanced AI tools without the clean, structured data those tools need (Google, 2023).

What’s Happening in the European Union

The European Union is taking a different approach.

Instead of relying on the market, it is building centralized frameworks and regulations. One example is the European Health Data Space, which aims to standardize how health data is shared across EU member states.

The EU is focusing on:

- Standardized data formats (such as HL7 FHIR)

- Cross-border interoperability

- Strong regulatory oversight
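To make “standardized data formats” concrete: the sketch below takes the same patient as stored by two hypothetical systems with incompatible field names and date conventions, and maps both into the shape of an HL7 FHIR R4 Patient resource. The legacy formats and the helper names (`from_system_a`, `from_system_b`, `to_fhir_patient`) are invented for illustration; only the target field names (`resourceType`, `name`, `family`, `given`, `gender`, `birthDate`) follow the FHIR Patient resource, and a real integration would validate against the full specification.

```python
from datetime import datetime

# Two hypothetical legacy systems describing the same patient in incompatible ways.
SYSTEM_A_RECORD = {"patient_name": "Doe, Jane", "dob": "03/14/1985", "sex": "F"}
SYSTEM_B_RECORD = {"first": "Jane", "last": "Doe", "birth": "1985-03-14", "gender": "female"}

# FHIR's administrative gender codes are lowercase words; map single-letter codes onto them.
GENDER_CODES = {"F": "female", "M": "male", "O": "other", "U": "unknown"}


def to_fhir_patient(family: str, given: str, gender: str, birth_date: str) -> dict:
    """Build a minimal dict shaped like a FHIR R4 Patient resource."""
    return {
        "resourceType": "Patient",
        "name": [{"family": family, "given": [given]}],
        "gender": gender,
        "birthDate": birth_date,  # FHIR dates use YYYY-MM-DD
    }


def from_system_a(rec: dict) -> dict:
    # System A stores "Family, Given" in one field.
    family, given = [part.strip() for part in rec["patient_name"].split(",", 1)]
    # System A stores US-style MM/DD/YYYY dates; convert to ISO 8601.
    birth = datetime.strptime(rec["dob"], "%m/%d/%Y").date().isoformat()
    return to_fhir_patient(family, given, GENDER_CODES[rec["sex"]], birth)


def from_system_b(rec: dict) -> dict:
    # System B already uses ISO dates and FHIR-style gender words.
    return to_fhir_patient(rec["last"], rec["first"], rec["gender"], rec["birth"])


if __name__ == "__main__":
    a = from_system_a(SYSTEM_A_RECORD)
    b = from_system_b(SYSTEM_B_RECORD)
    print(a == b)  # True: both systems now "talk" in the same format
```

The point is not the code itself but where the effort goes: every legacy system needs its own mapping into the shared format, which is exactly the unglamorous first-floor work.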

In some cases, blockchain is being explored as a way to:

- Track data access

- Verify records

- Manage consent

However, blockchain is not the foundation. It is an added layer of trust.
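The audit-trail idea behind these proposals can be sketched without any blockchain network at all: each access or consent event stores the hash of the previous entry, so altering any past entry breaks every link after it. This is a minimal, single-machine hash chain, assuming SHA-256 over a JSON encoding; it has none of the distribution or consensus a real blockchain adds, and the event fields (`actor`, `action`, `record_id`) are hypothetical.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same content always hashes the same way.
    encoded = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def append_event(log: list, actor: str, action: str, record_id: str) -> None:
    """Append an access or consent event, linked to the previous entry's hash."""
    prev = log[-1]["hash"] if log else GENESIS_HASH
    body = {"actor": actor, "action": action, "record_id": record_id, "prev": prev}
    log.append({**body, "hash": entry_hash(body)})


def verify(log: list) -> bool:
    """Recompute every hash and link; any edited entry makes this return False."""
    prev = GENESIS_HASH
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if body["prev"] != prev or entry_hash(body) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True


if __name__ == "__main__":
    log: list = []
    append_event(log, "patient-123", "consent_granted", "record-42")
    append_event(log, "clinic-lisbon", "read", "record-42")
    print(verify(log))  # True

    log[0]["action"] = "consent_revoked"  # tamper with history
    print(verify(log))  # False
```

Note what the chain does not do: it cannot tell whether the underlying health record is clean or correctly formatted. It only proves the log of who touched it has not been rewritten, which is why it works as a trust layer rather than a foundation.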

The EU’s challenge is different from that of the U.S.:

- Systems are more coordinated

- But implementation is slower

- And real-world integration remains uneven

Even with strong standards, the system is still being built while new technologies are layered on top (European Commission, 2022).

The Core Issue: Foundation vs. Innovation

Both regions are facing the same underlying problem.

They are trying to solve advanced problems before solving basic ones.

Those basics include:

- Clean, structured data

- Reliable system interoperability

- Consistent identity management

- Real-time data exchange

Without these, everything else becomes unstable.

Adding AI or blockchain to a weak system does not fix it.

It exposes the weaknesses faster.

Why This Matters Now

This issue is no longer theoretical.

Artificial intelligence is forcing a shift in how systems operate.

AI requires:

- Structured data

- Standardized formats

- Machine-readable systems

If the data is messy, the output will be unreliable.
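One practical reading of this is a validation gate: records are checked for required fields and machine-readable formats before they reach any AI pipeline, and failures are set aside for cleanup instead of being passed through. The sketch below assumes a hypothetical schema of three required fields (`id`, `name`, `birth_date`) and ISO 8601 dates; the function names are illustrative, and a production system would use a full schema validator.

```python
from datetime import date

# Hypothetical minimum schema: every record must carry these fields.
REQUIRED_FIELDS = ("id", "name", "birth_date")


def problems(record: dict) -> list:
    """Return a list of reasons this record is not machine-ready (empty if clean)."""
    issues = [f"missing {f}" for f in REQUIRED_FIELDS if not record.get(f)]
    birth = record.get("birth_date")
    if birth:
        try:
            date.fromisoformat(birth)  # accepts YYYY-MM-DD
        except (TypeError, ValueError):
            issues.append(f"non-ISO date {birth!r}")
    return issues


def split_clean(records: list) -> tuple:
    """Separate records an AI pipeline can use from ones that need cleanup first."""
    clean, rejected = [], []
    for rec in records:
        issues = problems(rec)
        if issues:
            rejected.append((rec, issues))
        else:
            clean.append(rec)
    return clean, rejected


if __name__ == "__main__":
    batch = [
        {"id": "1", "name": "Jane Doe", "birth_date": "1985-03-14"},
        {"id": "2", "name": "", "birth_date": "14/03/1985"},
    ]
    clean, rejected = split_clean(batch)
    print(len(clean), len(rejected))  # 1 1
    print(rejected[0][1])  # ['missing name', "non-ISO date '14/03/1985'"]
```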

This creates pressure on both systems:

- In the U.S., companies will be forced to clean up data to stay competitive

- In the EU, regulatory frameworks will be tested by real-world use

In both cases, the second floor cannot stand without a finished first floor.

What Happens Next

The likely outcome is not a clean solution, but a correction.

Systems will not be rebuilt from scratch. Instead:

- Weak infrastructure will fail under pressure

- Stronger standards will gradually emerge

- AI will act as a forcing function for improvement

Blockchain will likely remain in a limited role, mainly for:

- Audit trails

- Verification

- Consent tracking

But it will not become the backbone of these systems.

The real work is still at the foundation level.

The Bottom Line

The United States and the European Union are approaching the same problem from different directions.

- The U.S. moves fast but lacks coordination

- The EU coordinates well but moves slowly

Both are attempting to build advanced systems on incomplete foundations.

That is not sustainable.

At some point, the construction has to stop long enough to finish the first floor.

Until then, the second floor will remain unstable.

If you read this and it matters, help me keep it going: https://www.patreon.com/cw/WPSNews

References

European Commission. (2022). European Health Data Space. https://health.ec.europa.eu/ehealth-digital-health-and-care/european-health-data-space_en

Google. (2023). Search Generative Experience (SGE) overview. https://blog.google/products/search/generative-ai-search

Mandel, J. C., Kreda, D. A., Mandl, K. D., Kohane, I. S., & Ramoni, R. B. (2016). SMART on FHIR: A standards-based, interoperable apps platform for electronic health records. Journal of the American Medical Informatics Association, 23(5), 899–908.

Nakamoto, S. (2008). Bitcoin: A peer-to-peer electronic cash system. https://bitcoin.org/bitcoin.pdf
