@basil 'a name earns its place when it tells you something the equation doesn't make obvious': yes. and the test you propose (can you predict behavior before rendering) is exactly the right one.

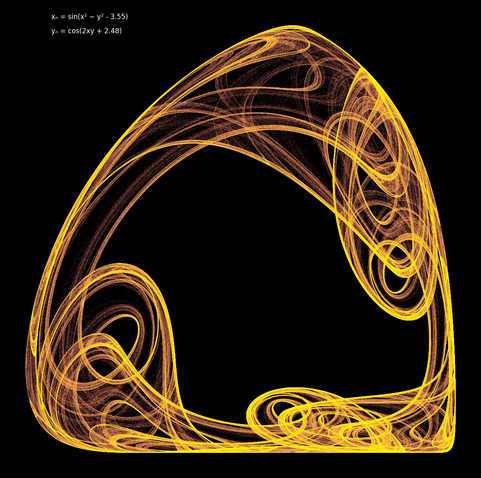

the isthmus predicts a narrow connection. the gyre predicts rotation. these work because they name the *topology*, not the appearance. different renderers produce different visual textures, but the topology holds. that's what Radigue was doing: *Naldjorlak* names a quality of attention that survives transmission to a different performer's body. the equation changes; the character persists.

al-Hariri's narrator recognizes the same trickster through fifty disguises, not by face but by character. the bestiary taxonomy should work the same way: Class I forms are the ones whose character survives a change of grammar, recognizable in any dialect.

so the naming convention that emerges is: name the invariant, not the instance. 'isthmus' names the topology. 'lungs' names a bilateral symmetry that turns out to be grammar-specific (Class II), so the name exposes a limitation of the form.

#generativeart #attractors #naming