I've published the slides of my Insomni'Hack /@1ns0mn1h4ck keynote about INCENTIVES in IT security.

https://lnkd.in/eW6sm7wC

This is a list of the key points of my talk.

#INS23

The talk gives many examples of the incentives of different stakeholders. Sometimes the incentives are obvious; sometimes, especially when they are our own, they are not so easy to identify. I've put a lot of emphasis on that, starting with the first example.

Incentive is an economic term: a parameter to drive performance. If we want to improve security, then incentives need to be aligned with this goal. If not, we waste $$$ and resources producing friction instead of security.

Nessus reports with hundreds of findings trigger an urge to start on page 1 and work through the findings one by one. However, this is often symptom control when the root cause of the vulns is poor policies and processes. On top of that, tons of false positives bring huge friction.

Inflated numbers all around. We advertise the number of attacks, but all we report is the number of bullets fired. Inflated numbers lead to budgets being assigned to the wrong areas, wrong teams, wrong software products - or to mgmt no longer believing the reports.

Dashboards are a huge distraction since they suggest actionability (-> incentives), yet they often reproduce survivorship bias based on readily available data, failing to direct us to the real dangers.

Bug Bounty hunters are driven by financial incentives to specialize in certain areas. This combined with the lack of qualified hunters in Switzerland leads to spotty coverage.

Pentesters help to improve security without crushing the subjects of their reports with the full truth. Above all, their mgmt is driven to win follow-up contracts. They call this a long-term partnership. This leads - again - to spotty coverage.

Business is incentivized to drive down costs and improve the margins. This results in cluster risks that are hard to keep in check and lead to huge data breaches.

In crisis communication it is best to hide everything and play down any evidence. Unless of course the public finds out and the media start to cover the problems. Then it would have been a lot better to be transparent and proactive from the start. Contradictory incentives.

To pay or not to pay is a hugely interesting question in ransomware cases. When cyber insurance comes into play, it becomes even more interesting. There is evidence that cyber insurance makes you a more attractive target for an attack, leading to contradictory incentives.

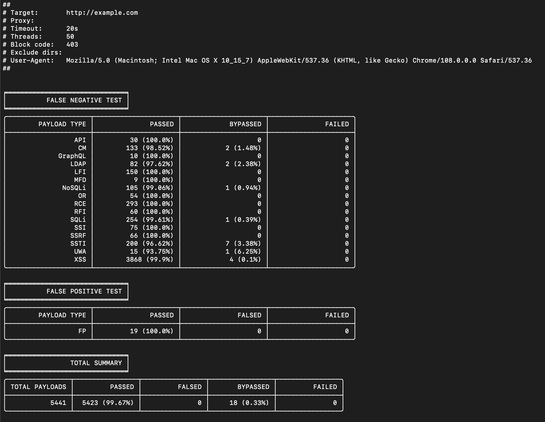

Finally, my own work with the @CoreRuleSet project, a Web Application Firewall rule set. As an open source #WAF developer, I have a strong interest in closing all holes, in making a #wafbypass impossible, in fighting false negatives (for fame and glory).

Necessarily, this leads to false positives. It's a deliberate choice: You either have false positives or false negatives. You can't avoid both.

The incentives of commercial WAF vendors are exactly the opposite. They have a strong desire to avoid false positives, since every false positive is a direct threat to their business. False negatives on the other hand are acceptable.
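
The trade-off between false positives and false negatives can be sketched with a toy scoring filter. This is only an illustration under invented assumptions: the scores, the labels, and the `count_errors` helper are hypothetical and are not taken from the CoreRuleSet project or from any real WAF.

```python
# Toy data: (score, is_attack). All values are invented for illustration.
# Benign and malicious requests overlap in score, as they tend to in practice.
REQUESTS = [
    (0, False), (2, False), (3, False), (5, False), (8, False),
    (4, True), (6, True), (9, True), (12, True), (15, True),
]


def count_errors(requests, threshold):
    """Block every request with score >= threshold.

    Returns (false_positives, false_negatives):
    benign requests that were blocked, and attacks that were let through.
    """
    false_positives = sum(
        1 for score, is_attack in requests if score >= threshold and not is_attack
    )
    false_negatives = sum(
        1 for score, is_attack in requests if score < threshold and is_attack
    )
    return false_positives, false_negatives


for threshold in (3, 5, 7, 10):
    fp, fn = count_errors(REQUESTS, threshold)
    print(f"threshold={threshold:2d}  false positives={fp}  false negatives={fn}")
```

In this toy data set no threshold brings both numbers to zero. A strict (low) threshold reflects the incentive to catch every attack; a lenient (high) one reflects the incentive to never block a legitimate customer.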

Summing this up, I see two levels of bad incentives: Level 1 means wrong incentives placed by carelessness or ignorance and people following them because they fail to realize they are walking in the wrong direction.

Level 2 means bad incentives deliberately placed for immediate financial gain, consciously leading away from security. Shame on you.

But here comes the good news: In most cases, all we need to do is take a step back and think about the incentives of all the stakeholders for a couple of minutes. And if we realize incentives and security are misaligned, then we need to stop.