Все переводчики речи в реальном времени — херня. Я написал свой. Тоже херня, но бесплатная

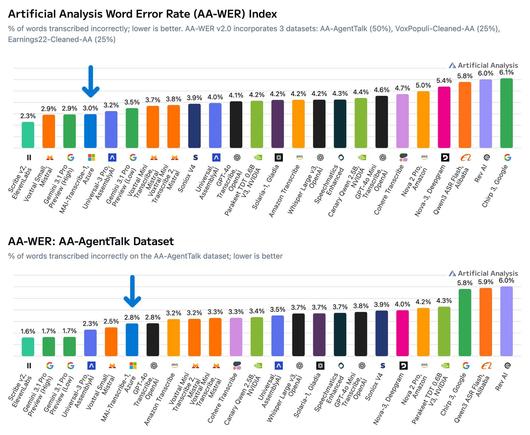

Перепробовал всё что есть на рынке, потратил на подписки больше чем на кофе, и в итоге сел писать с нуля. Вот что вышло AI Open Source Voice AI Real-time перевод Deepgram Groq Piper TTS STT TTS LLM Google Meet Zoom Личный опыт Elixir Rust macOS Apple Silicon Speech-to-Text Text-to-Speech Сижу на рабочем созвоне. Обсуждаем архитектуру нового сервиса. Технически я всё понимаю - документацию на английском читаю без словаря, код ревьюю, в Slack переписываюсь нормально. А вот когда надо открыть рот и сказать что-то сложнее "I agree" - начинается цирк. Пауза. Подбираю слова. Коллега уже ответил за меня. Знакомо? Мне - до зубного скрежета. Я CTO, последние годы плотно работаю с AI-интеграциями. Могу собрать систему автоматического обзвона клиентов с клонированием голосов, поднять флот ботов для скана Телеги, собрать архитектуру которая выдержит тысячи пользователей за копейки. А сам на созвоне звучу как иностранец с разговорником. Ирония уровня бог. И вот в голове простая картинка: я говорю по-русски, собеседник слышит английский. Он отвечает по-английски, я слышу русский. В реальном времени. Без пауз на 10 секунд. Без субтитров - именно голосом. С любым приложением: Meet, Zoom, Slack, Discord. Пошёл искать. И тут началось.

https://habr.com/ru/articles/1019458/

#realtime_communications #translations #speechtotext #texttospeech #deepgram #groq #elixir #rust #open_source #voice_ai