Robots.txt zůstává základní signál pro slušné crawlery, ale už neumí popsat hlavní problém: stejný veřejný obsah může sloužit klasickému vyhledávání, AI odpovědím, tréninku modelů i načtení na pokyn uživatele. Provozovatel webu proto musí oddělit účel přístupu, ověřovat identitu botů, měřit dopad na infrastrukturu a u hodnotného obsahu řešit i vynucení pravidel mimo samotný robots.txt.

https://zdrojak.cz/clanky/robots-txt-nestaci-ai-crawleri-meni-jak-weby-chrani-obsah/So, with Google announcing "Search is going full-AI, we won't be sending traffic to the original sites any more", someone else pointed out that this eradication of the traditional search-engine compact - we let you crawl our sites to create your index, and you send visitors to our sites when relevant - means that we can, and should, block all of Google's crawlers now. If they're going to just take, take, take and give nothing back, why let them access your content at all?

But this is cute. Besides the fact that Google documents that some of their crawlers ignore robots.txt, there's this bit of fun. On this page (https://developers.google.com/crawling/docs/robots-txt/create-robots-txt), they link to "the Google list of user agents" (https://developers.google.com/crawling/docs/crawlers-fetchers/overview-google-crawlers).

However, that links to 3 separate pages of them, and *each of those pages explicitly states that is not comprehensive, but only the ones they commonly get questions about*. And of course, none of the "User-triggered fetchers" obey robots.txt, along with some others.

So Google isn't even reporting the full list of user-agents that can be used to stop their crawling.

That is some bullshit.

#Google #crawler #RobotsTxt #UserAgent #bullshit #antisocial #web #search #WebSearch #LLM #AI

https://weirdgloop.org/blog/clankers

#via:lobsters #robotstxt #scraping #scaling #wiki #web #ai #+

Aggressive AI scrapers are making it kinda suck to run wikis

Bots are currently scraping the internet for LLM training data at unprecedented rates[1][2][3], driving up costs and destabilizing public-facing websites. I want to talk about how this has been particularly difficult for wikis, and has gotten much worse in the last few months.

Guests on our own web

A few months ago I spun up a new VPS on Linode, London datacentre. Nothing special – Debian, Nginx, a Let's Encrypt certificate, a domain I was going to use for my daily notes and my homelab experiments. No link posted anywhere, no entries in my feeds, no backlinks from the sites I run. Just a freshly assigned IP, from a subnet that a week earlier had belonged to someone else.

[...]

Пять неочевидных вещей, которые я узнал, запуская кино-соцсеть: от robots.txt-ловушки до 24-мерной математики вкуса

Последние полгода я работаю над VibeMuvik — кино-соцсетью с рецензиями, дебатами и синхронным просмотром фильмов. Одна из тех штук, которые «ну вроде несложно», пока не начинаешь копать. Эта статья — про неожиданные находки . Не про «как я выбрал стек» (скучно) и не про «туториал по WebRTC» (и без меня есть). Это пять ситуаций, в которых я споткнулся, обнаружил что-то интересное, и подумал «об этом стоит рассказать — другим пригодится». Поехали.

https://habr.com/ru/articles/1027876/

#robotstxt #SEO #WebRTC #Nextjs #IndexNow #sitemap #Googlebot #Cinema_DNA #синхронный_просмотр #рекомендательные_системы

Пять неочевидных вещей, которые я узнал, запуская кино-соцсеть: от robots.txt-ловушки до 24-мерной математики вкуса

Последние полгода я работаю над VibeMuvik — кино-соцсетью с рецензиями, дебатами и синхронным просмотром фильмов. Одна из тех штук, которые «ну вроде несложно», пока не начинаешь копать....

How does your robots.txt look like?

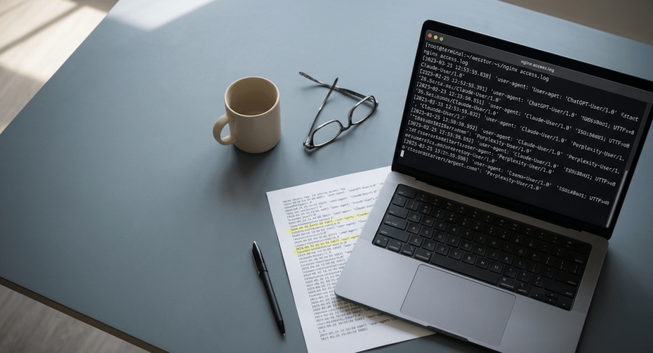

ChatGPT는 직접 읽고, Gemini는 안 읽는다, nginx 로그로 본 AI 트래픽의 실체

AI 어시스턴트 8개를 nginx 탐침으로 실측한 결과. ChatGPT·Claude는 직접 읽고, Gemini는 읽지 않습니다. AI 트래픽의 두 신호를 구분하는 방법을 소개합니다.The Pope’s Warnings About AI Were AI-Generated, a Detection Tool Claims

https://fed.brid.gy/r/https://www.wired.com/story/pope-tweets-ai-generated-pangram-chrome-extension/

#Development #Launches

Is Your Site Agent-Ready? · Scan your website for agent-friendly standards https://ilo.im/16c93a

_____

#Website #AI #Agents #MCP #Commerce #Content #RobotsTxt #Sitemap #WebDev #Frontend