We had a very productive F2F meeting last week at the Argonne Leadership Computing Facility, with many thanks to our great hosts at Argonne National Lab. The main objective was to feature-freeze OpenMP API version 6.1, and we accomplished that mission!

CUDA Proves Nvidia Is a Software Company

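Nvidia's competitive advantage lies not in hardware but in CUDA, its software platform. CUDA is a collection of software libraries that maximizes GPU parallelism and plays a key role in performance optimization for AI training and inference. Because modern machine learning frameworks depend on CUDA, competitors such as AMD and Intel struggle to match Nvidia's performance even when their hardware specs are strong. CUDA's deep, complex ecosystem and Nvidia's software-centric workforce act as a formidable barrier to entry and as the company's "weapon". Open-source alternatives and competing platforms have yet to threaten Nvidia's dominance.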

https://www.wired.com/story/cuda-proves-nvidia-is-a-software-company/

Sharing big R objects across processes shouldn’t mean copying them over and over.

mori uses shared memory + ALTREP to give you zero-copy access—multiple processes, one underlying object.

Fast, memory-efficient, and built for modern parallel workflows.

Shared Memory for R Objects

Share R objects across processes on the same machine via a single copy in POSIX shared memory (Linux, macOS) or a Win32 file mapping (Windows). Every process reads from the same physical pages through the R Alternative Representation (ALTREP) framework, giving lazy, zero-copy access. Shared objects serialize compactly as their shared memory name rather than their full contents.
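The idea described above (one copy in shared memory, readers attaching by name) can be sketched with Python's standard `multiprocessing.shared_memory` module. This is an analogy only, not mori's API: the helper names `publish` and `read_sum` are hypothetical, and nothing here involves ALTREP or R.

```python
from multiprocessing import shared_memory
import struct


def publish(values):
    """Copy a list of floats into a new shared memory segment exactly once.

    Hypothetical helper: returns the segment, whose .name is the only thing
    another process would need in order to find the data.
    """
    shm = shared_memory.SharedMemory(create=True, size=8 * len(values))
    struct.pack_into(f"{len(values)}d", shm.buf, 0, *values)
    return shm


def read_sum(name, count):
    """Attach to an existing segment by name and read the doubles in place."""
    shm = shared_memory.SharedMemory(name=name)
    try:
        # cast() gives a typed view over the same pages; no bulk copy is made.
        with shm.buf.cast("d") as view:
            return sum(view[:count])
    finally:
        shm.close()  # detach this handle; the segment itself stays alive


if __name__ == "__main__":
    segment = publish([1.0, 2.0, 3.5])
    try:
        # A worker process would receive only segment.name (a short string),
        # not the data, and call read_sum() the same way.
        print(segment.name, read_sum(segment.name, 3))
    finally:
        segment.close()
        segment.unlink()  # free the segment once every reader is done
```

Passing the short segment name instead of the payload mirrors the "serialize as the shared memory name" point in the post; the owner is still responsible for unlinking the segment when it is no longer needed.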

The OpenMP Architecture Review Board has formed a #Python Language Subcommittee — a significant step toward bringing standardized shared-memory parallelism to the world's most widely used programming language.

The subcommittee's goal is to define #OpenMP directive support for Python and include it in the OpenMP API 7.0 specification, targeted for 2029.

https://www.openmp.org/2026/python-subcomittee/

#HPC #parallelcomputing

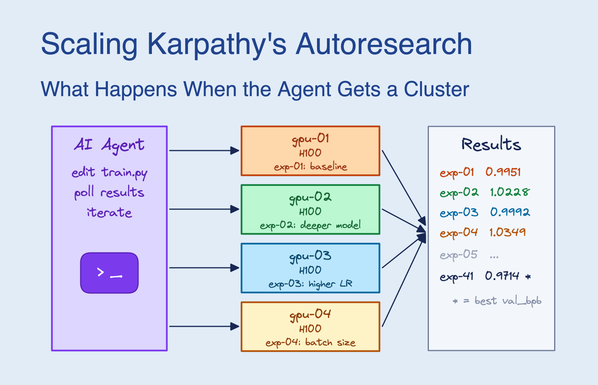

https://blog.skypilot.co/scaling-autoresearch/ #parallelcomputing #technews #GPUbuffet #humor #HackerNews #ngated

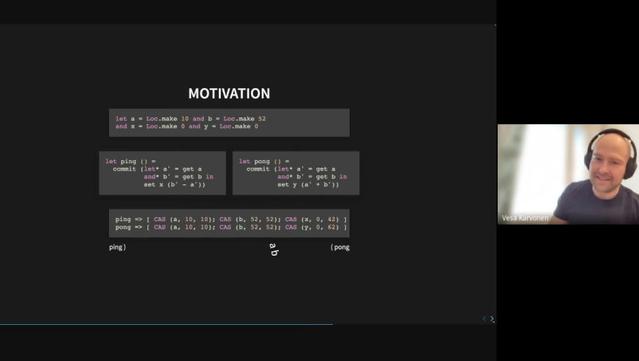

https://watch.ocaml.org/videos/watch/acebc363-12df-4cd6-aec0-e8239ab325e0

k-CAS for sweat-free concurrent programming by Vesa Karvonen

Setting Up a Cluster of Tiny PCs for Parallel Computing

https://www.kenkoonwong.com/blog/parallel-computing/

#HackerNews #ParallelComputing #TinyPCs #ClusterSetup #TechInnovations #HPC

Setting Up A Cluster of Tiny PCs For Parallel Computing - A Note To Myself | Everyday Is A School Day

Enjoyed learning the process of setting up a cluster of tiny PCs for parallel computing. A note to myself on installing Ubuntu, passwordless SSH, automating package installation across nodes, distributing R simulations, and comparing CV5 vs CV10 performance. Fun project!

GPUs are central to training language models thanks to parallel processing and fast matrix computation. The post analyzes GPU architecture, how GPUs differ from CPUs, the role of CUDA and Tensor Cores, and VRAM management. GPU performance is measured in FLOPS, which determines training speed. #AI #ML #GPU #MôHìnhNgônNgữ #CôngNghệ #ParallelComputing #DeepLearning #CUDA #VRAM #FLOPS #HiểuGPU #MachineLearningVietNam

https://www.reddit.com/r/LocalLLaMA/comments/1pk1hyp/day_4_21_days_of_building_a_small_language/
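To make the FLOPS point concrete, here is a rough back-of-the-envelope sketch. It uses the conventional count of 2·m·k·n floating-point operations for an (m × k) by (k × n) matrix multiply. The function names and the 100 TFLOPS peak figure are assumptions chosen for illustration, not numbers from the linked post, and real kernels rarely reach peak throughput.

```python
def matmul_flops(m, k, n):
    """Conventional FLOP count for an (m x k) @ (k x n) matrix multiply.

    Each of the m*n outputs needs k multiplies and about k adds,
    which is usually rounded to 2*m*k*n.
    """
    return 2 * m * k * n


def ideal_seconds(flops, peak_flops_per_second):
    """Lower bound on runtime if the hardware ran at its peak rate."""
    return flops / peak_flops_per_second


if __name__ == "__main__":
    # Hypothetical example: a 4096 x 4096 by 4096 x 4096 multiply
    # on a device with an assumed peak of 100 TFLOPS.
    work = matmul_flops(4096, 4096, 4096)
    print(work, ideal_seconds(work, 100e12))
```

The result is only a floor on runtime: memory bandwidth, precision, and kernel efficiency all push real training time above it.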

Unlock GPU acceleration with NVIDIA's cuTile, revolutionizing parallel kernel development #NVIDIA #cuTile #GPUcomputing

NVIDIA's cuTile is a groundbreaking programming model designed to simplify the development of parallel kernels for NVIDIA GPUs, enabling developers to harness the full potential of GPU acceleration. By leveraging cuTile, developers can create high-performance applications that efficiently utilize the massively...