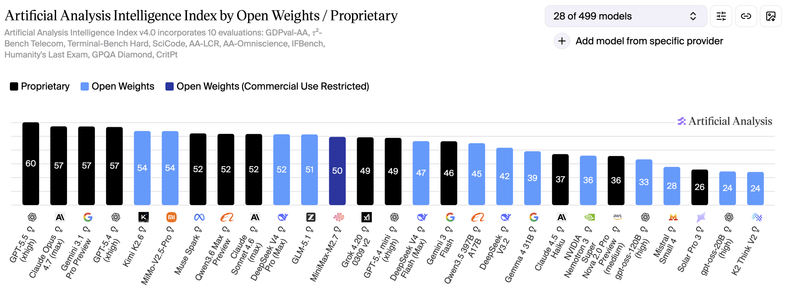

RT @ArtificialAnlys: During Jensen Huang's Computex keynote, NVIDIA announced the release of Nemotron 3 Ultra. With 550 billion parameters (55 billion active), it is the largest Nemotron 3 model to date and the most intelligent US open-weights model.

More on Arint.info: https://arint.info/@Arint/116678590202093885

#AI #Computex #LLM #Nemotron3 #NVIDIA #OpenWeights #arint_info

Arint - SEO+KI (@Arint@arint.info)

https://x.com/ArtificialAnlys/status/2061304911565144230#m