Sovereign Enterprise AI in Days Instead of Months with Infinito.Nexus

The post shown is an example of a trend currently visible in many companies. They are looking, at short notice, for AI developers who cover a broad spectrum, from LLM integration and RAG through to production pipelines and scalable cloud and container environments. Demand is high, the requirements are complex, and the time windows are usually very tight. Again and again it turns out that the real challenge lies not only in finding individual experts, but in the lack of a technical foundation for implementing such solutions quickly, securely, and sustainably. […]

World Earth Day Sri Lanka

🌿 Today, April 22nd, 2026, marks a special day in Sri Lanka – it's World Earth Day! 🌍 A day to appreciate our beautiful planet and raise awareness for environmental protection. In Sri Lanka, known for its stunning natural landscapes, this day holds even more significance. 🌴🐢 Let's embrace the beauty of our islands, from the pristine beaches to the lush tea plantations, and commit to preserving them for future generations! 🌱💚 #WorldEarthDay #SriLanka […]

An LLM without "brakes" and an uncensored AI that says what it sees

Neural network models are a "cast" of the information on the internet, and developers strip from their answers everything that is undesirable for office use and for regulators. Censorship shows up differently in different models: American models are "afraid" of offending the user and filter answers and questions on a large number of everyday topics, while Chinese models mostly filter topics that are politically sensitive for China. Alice and GigaChat will not answer requests that are prohibited in Russia, and lately they also avoid technical questions about network settings and access to information on the internet. But what unites LLMs internationally is the NotSafeForWork filter (as they say on forums, 99% porn and 1% violence), which cuts questions about the source information out of the answers.

#JetBrains implementation for Agents is shit.

Just had to diagnose why a client's GPUs were running at full load 24/7. The JetBrains IDE decided to just randomly send prompts.

This kind of random behavior is why I'm no longer recommending JetBrains IDEs unless your corpo pays for it.

I'm on the #Zed bandwagon from now on. Period.

#Programming #Coding #Code #ZedEditor #SoftwareDevelopment #AI #LLM #LMStudio #Ollama #RTX #WebDevelopment #AppDevelopment #Java #Agent #AIAgent #IDE

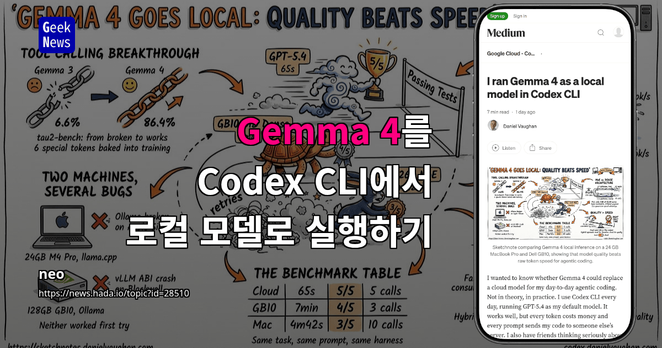

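An evaluation of cost, privacy, and tool-calling performance when running Google Gemma 4 locally with Codex CLI. Comparing an M4 Pro (24GB) + llama.cpp (26B MoE) against an NVIDIA GB10 (128GB) + Ollama (31B Dense), the tool-call success rate rose from 6.6% with Gemma3 to 86.4% with Gemma4. The Mac has the edge in token speed, but because it retries more often, the difference in perceived completion time is small. GPT-5.4 is still the fastest and most stable. Conclusion: a hybrid approach is recommended, running sensitive tasks locally and complex tasks in the cloud. Includes practical tips on configuration, quantization, timeouts, and more.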

My thoughts on AI

Over the past few years, I have written extensively about large language models and machine learning. Back in 2024, I even presented at DevCon Midwest on this topic. While I advocate for the smart use of AI, I have noticed an alarming trend. In late 2023 and early 2024, many applications emerged that were merely built on top of platforms like ChatGPT. I believe this approach is short-sighted. If your business relies on ChatGPT’s continued availability and stable token prices, what happens if ChatGPT is no longer around or if token costs increase? Your business could become unviable.

By 2025, the widespread use of tools like ChatGPT and Gemini has led to a growing demand for new data centers across the country. This has left communities grappling with the implications of such rapid development. Companies like NVIDIA are using cloud capacity buyback agreements and GPU-backed debt structures to effectively lend companies like Google, OpenAI, and Meta over $100 billion to buy GPUs. Meanwhile, companies like Applied Digital and CoreWeave are building the actual data centers, so cloud AI providers aren’t on the hook if demand dips.

I don’t believe my concerns in the early years of cloud AI are that different from those in 2025 and 2026. If you don’t “own the stack”, the risk of something disastrous happening is too high. There are ways of responsibly using AI, though. I demonstrated in my DevCon Midwest presentation that you can use AI at scale with hardware you control. That hardware could be local or remote. You might need to be more careful about how you implement it, but if a company using borrowed GPUs fails to pay for its leased space in a third-party data center, it won’t take down your app in the process.

Not everything needs AI integration. It’s a really cool new tool, but it doesn’t solve every problem. Please use it wisely.

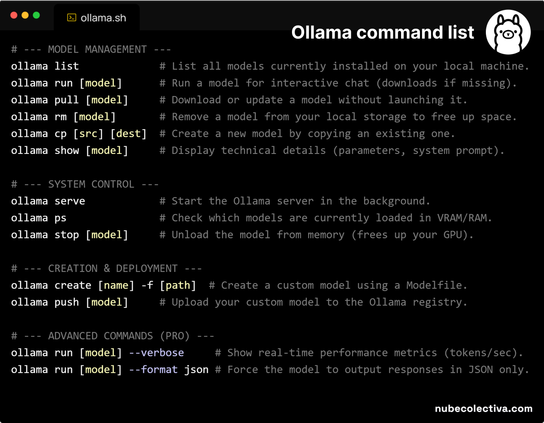

#ChatGPT #Gemini #Ollama

Replace the word [model] with the name of your model! 🦙

#programming #coding #programación #code #webdevelopment #devs #softwaredevelopment #ia #ai #ollama #llm

🚀 New Ollama Model Release! 🚀

Model: kimi-k2.6

🔗 https://ollama.com/library/kimi-k2.6