Here is a short #tutorial on understanding the #HebbianLearning rule in #HopfieldNetworks (applied to the problem of #PatternRecognition), again accompanied by some #Python code:

🌍 https://www.fabriziomusacchio.com/blog/2024-03-03-hebbian_learning_and_hopfiled_networks/

Feel free to use, share and modify.

Understanding Hebbian learning in Hopfield networks

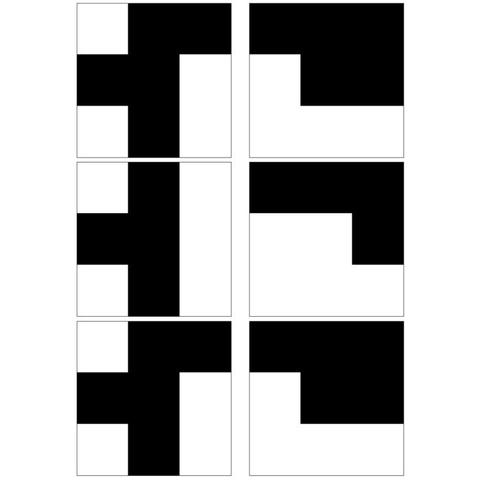

Hopfield networks, a form of recurrent neural network (RNN), serve as a fundamental model for understanding associative memory and pattern recognition in computational neuroscience. Central to the operation of Hopfield networks is the Hebbian learning rule, an idea encapsulated by the maxim ‘neurons that fire together, wire together’. In this post, we explore the mathematical underpinnings of Hebbian learning within Hopfield networks, emphasizing its role in pattern recognition.
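To make the maxim concrete before diving into the details, here is a minimal sketch of Hebbian storage and recall with NumPy. It is an illustration under stated assumptions, not necessarily the code used in the rest of this post: patterns are bipolar (+1/-1), weights are normalized by the number of units, self-connections are zeroed, and recall uses synchronous updates for brevity (the classic Hopfield formulation updates units asynchronously). The function names `train_hebbian` and `recall` are hypothetical.

```python
import numpy as np


def train_hebbian(patterns):
    """Build a Hopfield weight matrix from bipolar (+1/-1) patterns.

    Assumption: weights are the sum of outer products of the stored
    patterns, scaled by the number of units, with a zero diagonal.
    """
    patterns = np.asarray(patterns, dtype=float)
    n_units = patterns.shape[1]
    # Units that share the same sign in a pattern get a positive weight
    # ("fire together, wire together"); opposite signs get a negative one.
    weights = patterns.T @ patterns / n_units
    np.fill_diagonal(weights, 0.0)  # no self-connections
    return weights


def recall(weights, state, max_steps=10):
    """Iterate synchronous sign updates until the state stops changing."""
    state = np.asarray(state, dtype=float).copy()
    for _ in range(max_steps):
        new_state = np.where(weights @ state >= 0, 1.0, -1.0)
        if np.array_equal(new_state, state):
            break
        state = new_state
    return state


# Two orthogonal 8-unit patterns chosen purely for illustration.
patterns = np.array([
    [1, -1, 1, -1, 1, -1, 1, -1],
    [1, 1, 1, 1, -1, -1, -1, -1],
])
weights = train_hebbian(patterns)

# Corrupt the first pattern by flipping one unit, then let the network settle.
noisy = patterns[0].copy()
noisy[0] = -noisy[0]
recovered = recall(weights, noisy)

print("noisy:    ", noisy)
print("recovered:", recovered.astype(int))
```

With these two orthogonal patterns, the network should pull the corrupted input back to the first stored pattern; with many or strongly correlated patterns, recall can fail or settle into spurious states.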