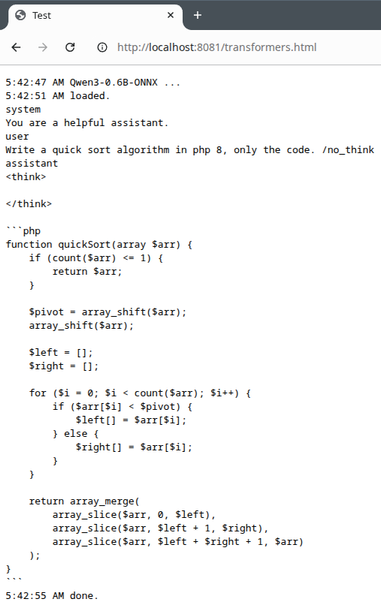

New week, more slides: Run LLMs Locally

Now with LFM 2 and new slides for using Transformers.js with WebGPU for Privacy Filter, Function Calling and Embeddings, running completely in your browser.

https://codeberg.org/thbley/talks/raw/branch/main/Run_LLMs_Locally_2026_ThomasBley.pdf

#ai #llm #llamacpp #stablediffusion #gptoss #qwen3 #glm #localai #gemma4 #nemotron #webgpu