@O_CEI_Horizon partners participated in the workshop on Privacy-Enhancing Technologies for Edge-Cloud applications within the #CEISphere ecosystem.

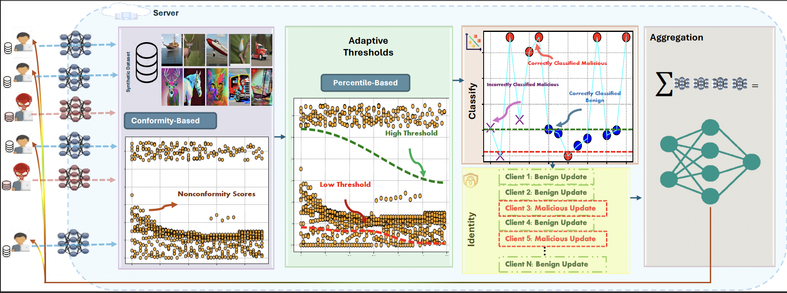

The presentation covered Pilot 7 activities and how Federated Learning and Zero Trust approaches can strengthen both privacy and secure data sharing across distributed Cloud-Edge-IoT environments.