https://www.squaredtech.co/gpt-5-4-mini-ai-efficiency-performance?fsp_sid=7191

fly51fly (@fly51fly)

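A ByteDance research team has released a new generative model architecture called 'BitDance'. The model is autoregressive and uses binary tokens, and it shows image and text generation performance with improved scalability and efficiency. It is regarded as a potential breakthrough for making large models lighter and training them efficiently.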

Ultra-sparse MoE models (GPT-OSS-120B, Qwen3-Next-80B-A3B) are the trend of the future! A new approach: use very small experts to "condense" a 120B model into 30B and 7B versions with MXFP4, easing the complexity problem and making the models runnable on ordinary devices (96 GB down to 8 GB of RAM/GPU memory). In addition, more efficient speculative decoding has sped up inference on GPT-OSS-120B. #AI #MachineLearning #MôHìnhLLM #EfficientAI #MoE #TechTrend
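To put the device sizes in that post in context, here is a rough back-of-the-envelope estimate of weight memory under MXFP4. It assumes the block layout from the OCP Microscaling spec (4-bit elements with one shared 8-bit scale per 32 elements) and, as a simplification, that every weight is stored in MXFP4. Activations, KV cache, and any tensors kept in higher precision are ignored, so the numbers are lower bounds rather than measured figures.

```python
# Rough weight-memory estimate for MXFP4-quantized models.
# Assumption: all weights are MXFP4; real checkpoints keep some tensors
# in higher precision, so these values are lower bounds.

ELEMENT_BITS = 4   # one FP4 (E2M1) element
SCALE_BITS = 8     # one shared E8M0 scale per block
BLOCK_SIZE = 32    # elements covered by each scale


def mxfp4_bits_per_weight() -> float:
    """Average storage per weight, including the amortized block scale."""
    return ELEMENT_BITS + SCALE_BITS / BLOCK_SIZE


def weight_gigabytes(num_params: float) -> float:
    """Weight storage in GB (10^9 bytes) for a given parameter count."""
    return num_params * mxfp4_bits_per_weight() / 8 / 1e9


for name, params in [("120B", 120e9), ("30B", 30e9), ("7B", 7e9)]:
    print(f"{name}: ~{weight_gigabytes(params):.1f} GB of weights")
```

At 4.25 bits per weight, a 120B model needs roughly 64 GB for weights alone, while 30B and 7B variants need about 16 GB and 4 GB, which is consistent with the 96 GB to 8 GB device range mentioned in the post.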

https://www.reddit.com/r/LocalLLaMA/comments/1qsx9r0/ultrasparse

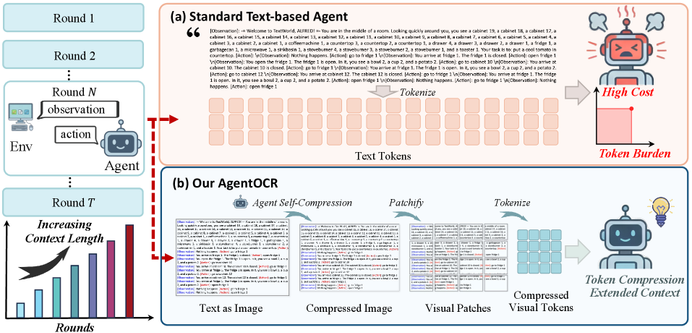

AgentOCR shows that LLM agents can store their ever-growing interaction history as compact images while retaining >95% of performance with >50% fewer tokens.

Anyone who wants to run agents in production needs memory governance: adaptive compression, caching/segmentation, and clear policies for when information density may be reduced in favor of cost/latency.
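One way such a policy could look, as a minimal sketch: the history is split into segments, the most recent ones stay at full fidelity, and older ones are marked for compression, oldest first, until the total fits a token budget. The `Segment` type, the `apply_policy` function, and the fixed 0.5 compression ratio are hypothetical choices for illustration and are not taken from AgentOCR.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Segment:
    text: str
    tokens: int               # token count at full fidelity
    compressed: bool = False  # True once marked for compact storage


def cost(seg: Segment, ratio: float) -> int:
    """Token cost of a segment under an assumed fixed compression ratio."""
    return int(seg.tokens * ratio) if seg.compressed else seg.tokens


def apply_policy(history: List[Segment], budget: int,
                 keep_recent: int = 2, ratio: float = 0.5) -> List[Segment]:
    """Compress the oldest segments first until the history fits the budget.

    The newest `keep_recent` segments are never compressed, so the result
    can still exceed the budget when those alone are too large.
    """
    plan = [Segment(s.text, s.tokens, s.compressed) for s in history]
    for i in range(max(len(plan) - keep_recent, 0)):
        if sum(cost(s, ratio) for s in plan) <= budget:
            break
        plan[i].compressed = True
    return plan


history = [Segment(f"turn {i}", 100) for i in range(4)]
plan = apply_policy(history, budget=300)
print([s.compressed for s in plan])
```

Keeping the policy as a pure function over a copied plan makes it easy to log or audit each decision before any compressed segment is actually written to a cache.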

#LLMAgents #EfficientAI #MultimodalAI

https://arxiv.org/html/2601.04786v1

AI Image Generation for Consumer PCs: Alibaba Releases 6B Z-Image-Turbo Model

#AI #GenAI #Alibaba #OpenSource #ZImageTurbo #AIArt #AIImages #AIImageGeneration #EfficientAI #LocalAI

https://github.com/MoonshotAI/Kimi-Linear #ExpressiveAttention #EfficientAI #GroundbreakingResearch #GitHubBuzzwords #TechInnovation #HackerNews #ngated

Less is More: Recursive Reasoning with Tiny Networks

Hierarchical Reasoning Model (HRM) is a novel approach using two small neural networks recursing at different frequencies. This biologically inspired method beats large language models (LLMs) on hard puzzle tasks such as Sudoku, Maze, and ARC-AGI while trained with small models (27M parameters) on small data (around 1000 examples). HRM holds great promise for solving hard problems with small networks, but it is not yet well understood and may be suboptimal. We propose Tiny Recursive Model (TRM), a much simpler recursive reasoning approach that achieves significantly higher generalization than HRM, while using a single tiny network with only 2 layers. With only 7M parameters, TRM obtains 45% test-accuracy on ARC-AGI-1 and 8% on ARC-AGI-2, higher than most LLMs (e.g., Deepseek R1, o3-mini, Gemini 2.5 Pro) with less than 0.01% of the parameters.
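The general idea of reusing one small network recursively can be illustrated with a toy loop. This is only a sketch: the split into an answer `y` and a latent scratchpad `z`, the update order, the step counts, and the `TinyNet` class are assumptions for illustration and are not described in the abstract above. The weights are random and untrained, so the output is meaningless beyond showing the control flow.

```python
import math
import random
from typing import List

Vector = List[float]


def make_layer(n_in: int, n_out: int, rng: random.Random) -> List[Vector]:
    return [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]


def apply_layer(weights: List[Vector], vec: Vector) -> Vector:
    return [math.tanh(sum(w * v for w, v in zip(row, vec))) for row in weights]


class TinyNet:
    """Two-layer network with random, untrained weights (toy stand-in)."""

    def __init__(self, dim: int, seed: int = 0) -> None:
        rng = random.Random(seed)
        self.w1 = make_layer(3 * dim, dim, rng)
        self.w2 = make_layer(dim, dim, rng)

    def __call__(self, x: Vector, y: Vector, z: Vector) -> Vector:
        # Concatenate the three inputs and pass them through both layers.
        return apply_layer(self.w2, apply_layer(self.w1, x + y + z))


def recursive_refine(net: TinyNet, x: Vector, dim: int,
                     outer_steps: int = 3, inner_steps: int = 4) -> Vector:
    y = [0.0] * dim  # current answer guess
    z = [0.0] * dim  # latent scratchpad
    for _ in range(outer_steps):
        for _ in range(inner_steps):
            z = net(x, y, z)        # refine the latent from question, answer, latent
        y = net([0.0] * dim, y, z)  # refine the answer from the latent (question zeroed)
    return y


answer = recursive_refine(TinyNet(dim=4), [0.1, 0.2, 0.3, 0.4], dim=4)
print(answer)
```

The same parameters are applied at every step, so effective depth comes from iteration rather than from stacking more layers.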

A promising step toward efficient reasoning.

#AIResearch #EfficientAI #RecursiveReasoning

https://arxiv.org/abs/2510.04871

High-resolution efficient image generation from WiFi Mapping

https://arxiv.org/abs/2506.10605

#HackerNews #HighResolutionImageGeneration #WiFiMapping #EfficientAI #ImageProcessing #TechInnovation

High-resolution efficient image generation from WiFi CSI using a pretrained latent diffusion model

We present LatentCSI, a novel method for generating images of the physical environment from WiFi CSI measurements that leverages a pretrained latent diffusion model (LDM). Unlike prior approaches that rely on complex and computationally intensive techniques such as GANs, our method employs a lightweight neural network to map CSI amplitudes directly into the latent space of an LDM. We then apply the LDM's denoising diffusion model to the latent representation with text-based guidance before decoding using the LDM's pretrained decoder to obtain a high-resolution image. This design bypasses the challenges of pixel-space image generation and avoids the explicit image encoding stage typically required in conventional image-to-image pipelines, enabling efficient and high-quality image synthesis. We validate our approach on two datasets: a wide-band CSI dataset we collected with off-the-shelf WiFi devices and cameras; and a subset of the publicly available MM-Fi dataset. The results demonstrate that LatentCSI outperforms baselines of comparable complexity trained directly on ground-truth images in both computational efficiency and perceptual quality, while additionally providing practical advantages through its unique capacity for text-guided controllability.
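The data flow described in the abstract can be sketched as three stages wired together. The function `csi_to_image` and the three callables it takes are hypothetical placeholders for the lightweight mapping network, the LDM's text-guided denoiser, and the LDM's pretrained decoder; the toy stand-ins below exist only so the sketch runs and do not model real CSI or diffusion.

```python
from typing import Callable, List, Sequence

Vector = List[float]


def csi_to_image(
    csi_amplitudes: Sequence[float],
    mapper: Callable[[Sequence[float]], Vector],
    denoise: Callable[[Vector, str], Vector],
    decode: Callable[[Vector], Vector],
    prompt: str,
) -> Vector:
    """Map CSI straight into latent space, denoise there, then decode.

    No image encoder appears anywhere: the mapper replaces the encoding
    stage of a conventional image-to-image pipeline.
    """
    latent = mapper(csi_amplitudes)   # lightweight network: CSI -> LDM latent
    latent = denoise(latent, prompt)  # text-guided denoising in latent space
    return decode(latent)             # pretrained decoder: latent -> pixels


# Toy stand-ins (hypothetical) so the pipeline runs end to end.
def toy_mapper(csi: Sequence[float]) -> Vector:
    return [sum(csi) / len(csi)] * 4


def toy_denoise(latent: Vector, prompt: str) -> Vector:
    return [0.5 * v for v in latent]


def toy_decode(latent: Vector) -> Vector:
    return [v for v in latent for _ in range(2)]  # upsample 2x


image = csi_to_image([0.2, 0.4, 0.6], toy_mapper, toy_denoise, toy_decode,
                     prompt="an empty office")
print(len(image))
```

Under this design only the mapper would need training on CSI data, while the denoiser and decoder are reused from the pretrained LDM as the abstract describes.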