n8n self-hosted в production: docker-compose, nginx, ретраи и три грабли

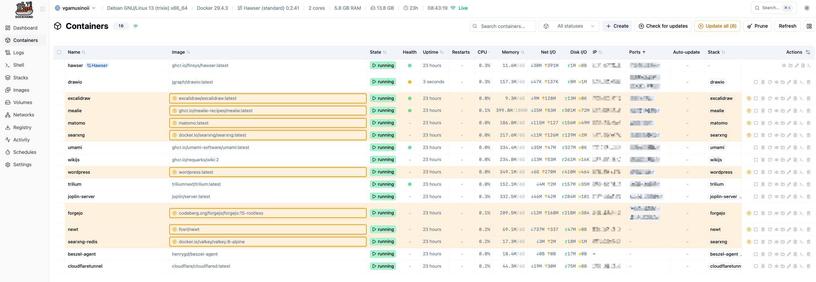

n8n запускается одной командой docker run и через пять минут вы видите логин-форму. Это маркетинговый ролик. Реальный production-конфиг - с persistent storage, корректными webhook-URL, ретраями, бэкапами PostgreSQL и мониторингом - выглядит сильно иначе. В этой статье - конфигурация, которую я держу на 12 проектах в течение полутора лет. Плюс три грабли, на которые наступал лично. Все примеры - community-edition, без коммерческой лицензии. На проде у меня сейчас крутится 2.19.5, но в image: стоит n8nio/n8n:latest плюс Watchtower (про него ниже) - он подтягивает свежий образ ночью. Внутри 2.x API/env-переменные стабильны, рекомендую :latest + Watchtower на проектах где простой 5 минут утром не критичен, и закреплённый минор ( :2.19.5 ) - на проектах где даунтайм нельзя.

https://habr.com/ru/articles/1033716/

#n8n #dockercompose #selfhosted #watchtower #postgresql #nginxproxy #cloudflared #telegramwebhook #ретраи