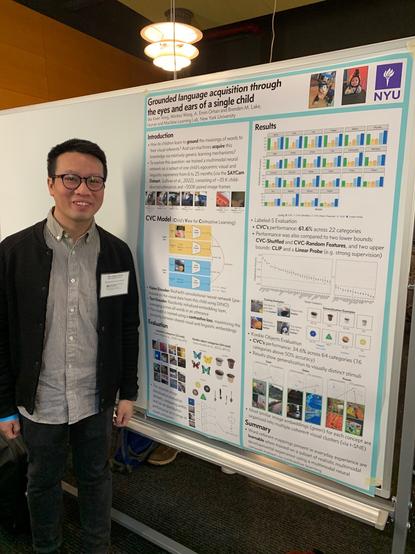

I've just given my first technical (guest) lecture to a group of #undergraduate students about #ai and semi-supervised #ContrastiveLearning!

It was a great opportunity to practice technical teaching ahead of September.

Topic: Nowcasting #flood risk from social media data with #CLIP and semi-supervised learning.

Image source: AI-generated with Microsoft Designer. Prompt: "A cat giving a lecture in a lecture theatre, stylised photo"

Reasoning: I doubt an image like this exists online, and I'm short on time.