Insightful talk by @tao at the Institut d'Etudis Catalans yesterday. While I did miss an opportunity to chat with him (many, many people wanted to chat with him, and got shy), I would like to pose a couple of questions / musings here to/at the Fediverse.

Firstly, I wonder if proof formalisation won't be the way forward for undergraduate and graduate mathematics grading 🤔 Instead of proof questions, which might become routine with large language models, one might have "project-directed" modules targeting learning about a topic by formalising results in the area, and exploring variants and generalisations of already formalised topics.

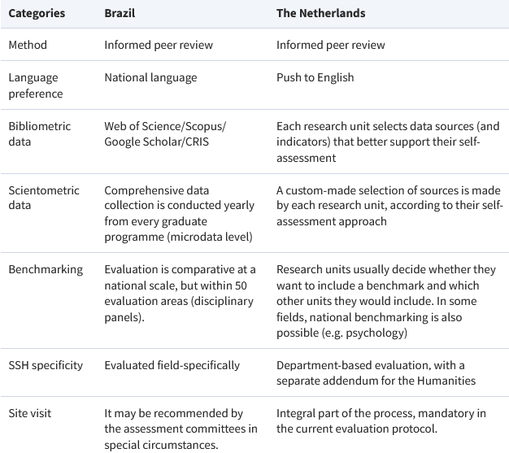

Secondly, formalisation may also be an opportunity for revising the way scientific output is evaluated. If we want to profit from a more systematic formalisation effort in mathematics, we need to look into how this would play into incentives we currently have. (Warning, misleading wording follows.) How can we measure someone's contribution? Would it be a good idea to create an "impact factor" for the proof modules authored by someone? Also, as we move to larger-scale collaborations, it may be important to de-emphasise, authoring as a pillar of scientific activity. (I have in mind, particulary, the fact that some people may end up with many important but small contributions to several projects, and that a non-negligible part of work lies probably in coordinating efforts or revising bubbles in the blueprint. Unfortunately, my experience with lean is nonexistent at the moment, but this should change in the near future, I hope!)

In any case, thank you @tao for an interesting talk, food for thought, and hope you enjoy your time visiting CRM!

#llm #lean #academicEvaluation