I am eager to contribute and am willing to work for any reasonable compensation. (A thought: We are looking for someone less knowledgeable at a higher cost, which creates a better skill-to-talent ratio relative to price.)

In other news, I have been working on structuring the #RelationalDatabaseSchema for my #AISystems. I added a significant amount of data tonight, having successfully parsed #PayloadsAllTheThings and several other large databases containing #PayloadData, web information, passwords, and more. All of this has been converted into a #StructuredFormat for the #AILearningSystem (@ai_learning/) to ingest. The data is now ready for the AI systems to use within a specialized #DatabaseContext designed to supply data for #TransformerParsing, which will then be passed to the main #AISystem for #ObjectiveFulfillment.

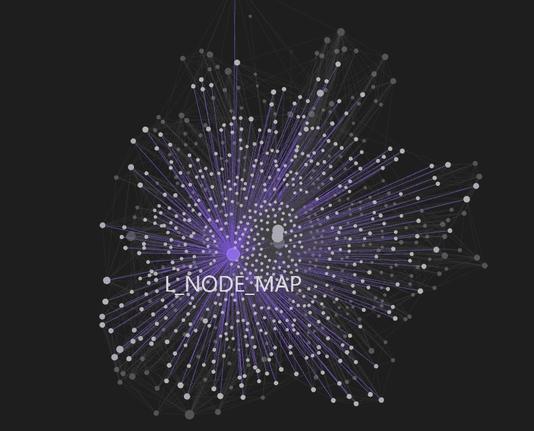
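For anyone curious what a schema like this could look like: a minimal sketch, assuming SQLite and two hypothetical tables (`sources` and `records`). The table names, the column names, and the `build_db` and `fetch_context` helpers are made up for illustration and are not the actual schema from the post. It only shows parsed entries being stored in a structured form and pulled back out by category as context for a downstream step.

```python
import sqlite3

# Hypothetical schema: one row per upstream dataset, one row per parsed entry.
SCHEMA = """
CREATE TABLE sources (
    id   INTEGER PRIMARY KEY,
    name TEXT NOT NULL UNIQUE
);
CREATE TABLE records (
    id        INTEGER PRIMARY KEY,
    source_id INTEGER NOT NULL REFERENCES sources(id),
    category  TEXT NOT NULL,
    content   TEXT NOT NULL
);
"""


def build_db(rows):
    """Load (source_name, category, content) tuples into an in-memory database."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    for source, category, content in rows:
        # Register the source once; repeated names are ignored.
        conn.execute("INSERT OR IGNORE INTO sources (name) VALUES (?)", (source,))
        (source_id,) = conn.execute(
            "SELECT id FROM sources WHERE name = ?", (source,)
        ).fetchone()
        conn.execute(
            "INSERT INTO records (source_id, category, content) VALUES (?, ?, ?)",
            (source_id, category, content),
        )
    conn.commit()
    return conn


def fetch_context(conn, category):
    """Return (source_name, content) pairs for one category, in insertion order."""
    cur = conn.execute(
        "SELECT s.name, r.content FROM records r "
        "JOIN sources s ON s.id = r.source_id "
        "WHERE r.category = ? ORDER BY r.id",
        (category,),
    )
    return cur.fetchall()
```

Splitting sources from records keeps provenance attached to every entry, so a later step can filter or weight entries by where they came from.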

The scope of #L'sFunctions is not discussed. I simply found him compelling to observe and demonstrate. He is clearly the #Largest and #MostIntelligent entity.

#YonhapInfomax #AppleIntelligence #IPhone16e #BudgetIPhone #AISystem #TimCook #Economics #FinancialMarkets #Banking #Securities #Bonds #StockMarket

https://en.infomaxai.com/news/articleView.html?idxno=51439

System Safety and Artificial Intelligence

https://montrealethics.ai/system-safety-and-artificial-intelligence/

#Governance #GovernanceAI #Technology #AIsystem #ArtificialIntelligence #AI

AlphaGeometry: An Olympiad-level AI system for geometry.

#AlphaGeometry #Geometry #Olympiad #AI #AIsystem #OlympiadLevelAI #DeepMind

https://deepmind.google/discover/blog/alphageometry-an-olympiad-level-ai-system-for-geometry/

Referenced link: https://techxplore.com/news/2023-03-dataset-poisoning-corrupt-ai-results.html


Originally posted by Phys.org / @physorg_com: http://nitter.platypush.tech/TechXplore_com/status/1633137942603366400#m

RT by @physorg_com: Two types of dataset #poisoning attacks that can corrupt #AIsystem results @arxiv https://arxiv.org/abs/2302.10149 https://techxplore.com/news/2023-03-dataset-poisoning-corrupt-ai-results.html

Two types of dataset poisoning attacks that can corrupt AI system results

A team of computer science researchers with members from Google, ETH Zurich, NVIDIA and Robust Intelligence is highlighting two kinds of dataset poisoning attacks that could be used by bad actors to corrupt AI system results. The group has written a paper outlining the kinds of attacks they have identified and has posted it on the arXiv preprint server.