A few of the things I've learned in the run up to taping out our first chip that working with FPGAs had not prepared me for (fortunately, the folks driving the tape out had done this before and were not surprised):

- There's a lot of analogue stuff on a chip. Voltage regulators, PLLs, and so on all need to be custom designed for each process. They are expensive to license because they're difficult to design and there are only a handful of companies buying them. The really big companies will design their own in house, but everyone else needs to buy them. The problem is that 'everyone else' is not actually many people.

- Design verification (DV) is a massive part of the total cost. This needs people who think about the corner cases in designs. The industry rule of thumb is that you need 2-3 DV engineers per RTL engineer to make sure that the thing that you tape out is probably correct. In an FPGA, you can just fix a bug and roll a new bitfile, but with custom chip you have a long turnaround to fix a bug and a lot of costs. This applies at the block level and at the system level. Things like ISA test suites are a tiny part of this because they're not adversarial. To verify a core, you need to understand the microarchitecture-specific corner cases where things might go wrong and then make sure testing covers them. We aren't using CVA6, but I was talking to someone working on it recently and they had a fun case that DV had missed: If a jump target spanned a page boundary, and one of those pages was not mapped, rather than raising a page fault the core would just fill in 16 random bits and execute a random instruction. ISA tests typically won't cover this, a good DV team would know that anything spanning pages in all possible configurations of permission and presence (and at all points in speculative execution) is essential for functional coverage.

- Most of the tools for the backend are proprietary (and expensive, and with per-seat, per-year licenses). This includes tools for formal verification. There are open-source tools for the formal verification, the proprietary ones are mostly better in their error reporting (if the checks pass, they're fine. If they don't, debugging them is much harder).

- A lot of the vendors with bits of IP that you need are really paranoid about it leaking. If you're lucky, you'll end up with things that you can access only from a tightly locked-down chamber system. If not, you'll get a simulator and a basic floorplan and the integration happens later.

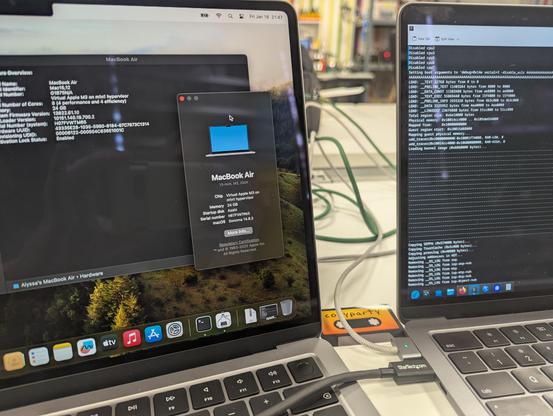

- The back-end layout takes a long time. For FPGAs, you write RTL and you're done. The thing you send to the fab is basically a 3D drawing of what to etch on the chip. The flow from the RTL to the 3D picture is complex and time consuming.

- On newer processes, you end up with a load of places where you need to make tradeoffs. SRAM isn't just SRAM, there are a bunch of different options with different performance, different leakage current, and so on. These aren't small differences. On 22fdx, the ultra-low-leakage SRAM has 10% of the idle power of the normal one, but is bigger and slower. And this is entirely process dependent and will change if you move to a new one.

- A load of things (especially various kinds of non-volatile memory) use additional layers. For small volumes, you put your chip on a wafer with other people's chips. This is nice, but it means that not every kind of layer happens on every run, which restricts your availability.

- I already knew this from previous projects, but it's worth repeating: The core is the easy bit. There are loads of other places where you can gain or lose 10% performance depending on design decisions (and these add up really quickly), or where you can accidentally undermine security. The jump from 'we have RTL for a core' to 'we have a working SoC taped out' is smaller than going to that point from a standing start, but it's not much smaller. But don't think 'yay, we have open-source RTL for a RISC-V core!' means 'we can make RISC-V chips easily!'.

- I really, really, really disapprove of physics. It's just not a good building block for stuff. Digital logic is so much nicer.