| website | https://www.14watts.com |

| newsletter | https://newsletter.14watts.com |

| linkedin | https://www.linkedin.com/in/rajeshbilimoria/ |

rajesh bilimoria

- 32 Followers

- 309 Following

- 98 Posts

If you're not in a reality where humans and computers are distinct things, then you're not in a reality where we can effectively communicate about them.

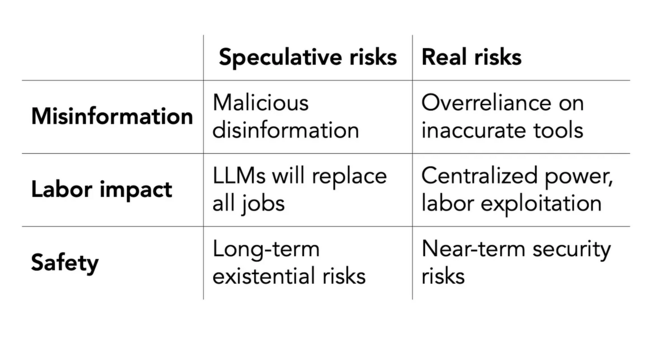

Statement from the listed authors of Stochastic Parrots on the “AI pause” letter

https://www.dair-institute.org/blog/letter-statement-March2023

"Regulatory efforts should focus on transparency, accountability and preventing exploitative labor practices."

With @timnitGebru @meg and Angelina McMillan-Major

Big problem: authors often support claim X with a citation to paper Y, even though Y has no bearing on X or even directly refutes X.

Estimates suggest that between 5% and 35% (the latter seems too high to me) of scientific citations do this. It's a grave sin, akin to claiming statistical significance when you clearly don't have it. Yet it's very common.

An extreme case: the first citation in the new FLI letter "Pause Giant AI experiments".

@timnitGebru explains: https://fediscience.org/@timnitGebru@dair-community.social/110110514822795454

Timnit Gebru (she/her) (@timnitGebru@dair-community.social)

The very first citation in this stupid letter, https://futureoflife.org/open-letter/pause-giant-ai-experiments/, is to our #StochasticParrots Paper, "AI systems with human-competitive intelligence can pose profound risks to society and humanity, as shown by extensive research[1]" EXCEPT that one of the main points we make in the paper is that one of the biggest harms of large language models, is caused by CLAIMING that LLMs have "human-competitive intelligence." They basically say the opposite of what we say and cite our paper?

My thread from last night on the hypey "open letter" as a blog post:

This is a great interview with @geomblog by Sharon Goldman

"This is a deliberate design choice, by ChatGPT in particular...ChatGPT puts little three dots [as if it’s] “thinking” just like your text message does. ChatGPT puts out words one at a time as if it’s typing. The system is designed to make it look like there’s a person at the other end of it. That is deceptive. And that is not right, frankly."

https://techpolicy.press/more-than-a-glitch-a-conversation-with-meredith-broussard/