Peter G. Neumann has passed away. 93 years old.

Update: John Markoff, at the New York Times, has published an obituary. https://www.nytimes.com/2026/05/17/obituaries/peter-g-neumann-dead.html

| Site | Link |
|---|---|
| Twitter | https://twitter.com/danwallach |
| GitHub | https://github.com/danwallach |
| Homepage | https://www.cs.rice.edu/~dwallach/ |
| Medium | https://medium.com/@dwallach |


@darkuncle @joebeone TRACTOR needs to solve three problems: correctness, performance, and idiomaticity. Correctness, in the face of AI translation, requires providing evidence of equivalence. To further complicate things, we neither want nor require bug-for-bug, vulnerability-for-vulnerability equivalence. That gives the translation process a significant amount of wiggle room.

Fundamentally, we're talking about open research challenges. We're working to figure out how to do it!
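As a toy illustration of what "evidence of equivalence" without bug-for-bug equivalence can look like, here is a minimal Rust sketch of differential testing. It is not TRACTOR's approach; the C function `average` and the names `legacy_average`, `translated_average`, and `differential_check` are hypothetical. The legacy function is modeled so that it returns `None` wherever the C code would hit undefined behavior (signed overflow), and the comparison skips those inputs, leaving the translation free to behave better there.

```rust
// Toy sketch (hypothetical, not TRACTOR's method): compare a translation against a
// model of the original C only on inputs where the C code is well defined.

/// Models hypothetical C code `int average(int a, int b) { return (a + b) / 2; }`.
/// Returns None where `a + b` overflows, which is undefined behavior in C.
fn legacy_average(a: i32, b: i32) -> Option<i32> {
    // Rust's `/` on integers truncates toward zero, matching C99 semantics.
    a.checked_add(b).map(|sum| sum / 2)
}

/// Hypothetical Rust translation: widening to i64 means it never overflows.
fn translated_average(a: i32, b: i32) -> i32 {
    ((a as i64 + b as i64) / 2) as i32
}

/// Ok(n): n inputs were comparable and all agreed. Err: first disagreeing input.
fn differential_check(inputs: &[(i32, i32)]) -> Result<usize, (i32, i32)> {
    let mut compared = 0;
    for &(a, b) in inputs {
        // Skip inputs where the original has no defined behavior to preserve.
        if let Some(expected) = legacy_average(a, b) {
            if translated_average(a, b) != expected {
                return Err((a, b));
            }
            compared += 1;
        }
    }
    Ok(compared)
}

fn main() {
    let inputs = [(1, 2), (-3, 4), (-7, 2), (i32::MAX, 1), (i32::MIN, -1)];
    println!("{:?}", differential_check(&inputs));
}
```

Passing tests are only weak evidence: they cover the inputs you tried, not all of them.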

@shriramk When I was a grad student attending CCS '97 in Zürich, I ended up having an hour-long conversation with Ross Anderson in the otherwise empty hotel bar. Somehow I got over my fear and just approached him and introduced myself. Amazing, talking to a legend and having a real discussion about my career.

More recently, on the paying-it-forward front, I was invited to a CRA event (representing DARPA, at the Watergate Hotel!) where I'd sit at a round table and ten fresh assistant professors would join me. We'd talk for 15 minutes, then DING, a new group would rotate in and we'd start over again.

So, these kinds of things do occur, but they could definitely stand to happen more often. You just need to somehow break the ice, so prospective folks won't be afraid to even sign up.

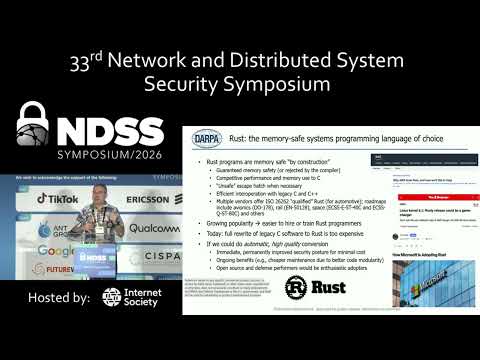

@shriramk I was invited to give a keynote at NDSS. I gave the first talk of the day. After that, I had a huddle around me, in the hallway, for the entire rest of the day. Lots of junior faculty asking for career advice. While exhausting, it's intensely rewarding to pay it forward.

I like your calendar idea. See if you can get other folks to do the same, and make it a bigger effort, get the conference organizers to announce it, etc.

Update:

Talk video is now online: https://youtu.be/3bAAVNKhsfY

Webpage: https://www.ndss-symposium.org/ndss2026/keynote-by-prof-dan-wallach/

PSA: I have just cleaned a set of Russian misinfo spambots from this instance.

⚠️ We moderate registrations, and have invites enabled. ⚠️

This is how they got in:

8 days ago (Mar 29) they requested an account. The request did not appear LLM-generated. It was topical enough to meet our flexible, informal threshold, and it was written in clumsy English.

6 days ago (Apr 1) I reviewed and approved the account.

Their signup email addresses were from the EmailOnDeck temp-mail provider, each on a different domain. I only bother looking at the email domain if there are other red flags. (A lot of people who choose us are technical folks with their own email domains, so random domains don't stand out.)

They signed up from an IPv6 address and only ever connected from 1 IP.

They made 0 posts from this account. They did not set a bio. They did not set a PFP.

They created 1 invite code for 5 uses.

5 days ago this invite code was used to make 1 account. They did not post anything, nor did they set a bio or PFP.

That second account, and all other accounts, used IPv4. They also only connected from 1 IP.

Over the next 4 days, the remaining 4 accounts were created with that invite code.

These accounts set bios consisting of a few random words and an emoji. They made the non-hashtag variety of posts these misinfo accounts make: bland word-salad poetry. They boosted a bunch of other posts to try to look normal.

All IPs involved have been labelled by https://spur.us as OPEN_ROUTABLE_PROXY and the IPv4 ones were also labelled as TOR_PROXY.

The admin interface identifies the posts as using clients called "ssl", "scsi", "ib".
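The red flags above can be summarized as a small review checklist. This is a hypothetical Rust sketch: the `AccountSummary` fields, the flag wording, and the rule that the email domain only counts once something else looks off (mirroring the post) are assumptions, and the label strings are just the two Spur labels quoted above. Several of these signals (bio, avatar, posts, invites) only exist after approval, so it fits a periodic review of recent accounts rather than the signup queue.

```rust
/// Hypothetical per-account summary, as an admin might read it off the admin UI.
struct AccountSummary {
    email_from_tempmail: bool,
    ip_labels: Vec<&'static str>, // labels from an IP-intelligence lookup
    has_bio: bool,
    has_avatar: bool,
    post_count: u32,
    invites_created: u32,
}

/// Returns the red flags that apply; an empty Vec means nothing stood out.
fn red_flags(a: &AccountSummary) -> Vec<&'static str> {
    let mut flags = Vec::new();
    if a.ip_labels.iter().any(|l| *l == "OPEN_ROUTABLE_PROXY" || *l == "TOR_PROXY") {
        flags.push("IP labelled as open proxy or Tor");
    }
    if !a.has_bio && !a.has_avatar {
        flags.push("no bio and no profile picture");
    }
    if a.post_count == 0 && a.invites_created > 0 {
        flags.push("created invite codes without posting");
    }
    // Mirrors the post: unusual domains are common among legitimate technical
    // users, so the email domain only counts once another flag has fired.
    if !flags.is_empty() && a.email_from_tempmail {
        flags.push("signup address from a temp-mail provider");
    }
    flags
}

fn main() {
    // Roughly the first account described in the post.
    let first = AccountSummary {
        email_from_tempmail: true,
        ip_labels: vec!["OPEN_ROUTABLE_PROXY"],
        has_bio: false,
        has_avatar: false,
        post_count: 0,
        invites_created: 1,
    };
    for flag in red_flags(&first) {
        println!("red flag: {flag}");
    }
}
```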

The cleanup procedure:

1. Ban the accounts immediately.
2. Deactivate the invite code immediately.
3. Review other recently created accounts and invite codes.
4. Write this all down for future reference.
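Reviewing recently created accounts and invite codes partly amounts to walking the invite tree: any account that joined with a code created by a banned account is suspect, and so are the accounts that joined with *its* codes. A minimal breadth-first sketch, assuming hypothetical `Account` and `Invite` records rather than any real Mastodon admin API; the account names and codes in `main` are made up to mirror the post (one seed account, one 5-use code, 5 signups).

```rust
use std::collections::{HashMap, HashSet, VecDeque};

// Hypothetical records; a real instance would pull these from its own database.
struct Account {
    name: &'static str,
    joined_via: Option<&'static str>, // invite code used at signup, if any
}
struct Invite {
    code: &'static str,
    created_by: &'static str,
}

/// `root` plus every account reachable by following
/// "account created a code -> someone signed up with that code", breadth-first.
fn invite_descendants(root: &'static str, accounts: &[Account], invites: &[Invite]) -> Vec<&'static str> {
    let creator_of: HashMap<&str, &str> =
        invites.iter().map(|i| (i.code, i.created_by)).collect();
    // creator -> accounts that signed up with one of the creator's codes
    let mut joined_from: HashMap<&str, Vec<&str>> = HashMap::new();
    for a in accounts {
        if let Some(creator) = a.joined_via.and_then(|code| creator_of.get(code)) {
            joined_from.entry(*creator).or_default().push(a.name);
        }
    }
    let mut seen = HashSet::new();
    let mut queue = VecDeque::from([root]);
    let mut found = Vec::new();
    while let Some(name) = queue.pop_front() {
        if !seen.insert(name) {
            continue; // already visited
        }
        found.push(name);
        if let Some(children) = joined_from.get(name) {
            queue.extend(children.iter().copied());
        }
    }
    found
}

fn main() {
    let accounts = [
        Account { name: "seed", joined_via: None },
        Account { name: "a1", joined_via: Some("CODE1") },
        Account { name: "a2", joined_via: Some("CODE1") },
        Account { name: "a3", joined_via: Some("CODE1") },
        Account { name: "a4", joined_via: Some("CODE1") },
        Account { name: "a5", joined_via: Some("CODE1") },
        Account { name: "alice", joined_via: None },
        Account { name: "bob", joined_via: Some("CODE2") },
    ];
    let invites = [
        Invite { code: "CODE1", created_by: "seed" },
        Invite { code: "CODE2", created_by: "alice" },
    ];
    println!("{:?}", invite_descendants("seed", &accounts, &invites));
}
```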

Notes:

It's easy to be complacent when account creation is moderated or gated by invite codes.

Had I not noticed, each of the active accounts might well have created its own invite code, and there would have been another 20 or more of them.
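The "20 or more" estimate is simple arithmetic. Taking the active accounts to be the 4 that were posting, if each had created one 5-use code and every use were consumed, that is 4 × 5 = 20 new accounts, and the tree keeps multiplying if those accounts do the same. A hypothetical helper, assuming every new account behaves identically:

```rust
/// Hypothetical worst case: in each generation, every newly created account
/// makes one invite code with `uses` uses, and every use is consumed.
/// Returns how many new accounts appear over `generations` generations.
fn projected_new_accounts(active: u64, uses: u64, generations: u32) -> u64 {
    let mut current = active; // accounts able to mint codes this generation
    let mut total = 0;
    for _ in 0..generations {
        current *= uses; // accounts created from this generation's codes
        total += current;
    }
    total
}

fn main() {
    for g in 1..=3 {
        println!("after {g} generation(s): {} new accounts", projected_new_accounts(4, 5, g));
    }
}
```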


I've recently been playing around with vibe coding some basic tree-like data structures (treaps, red-black trees, AVL trees, and hash array mapped tries, or HAMTs) in Rust, and then twisting the arm of the LLM to implement an optimization from Sarnak and Tarjan (1986) that lets you keep a version history without paying the O(log n) cost of path copying on every update. This is the sort of thing that, in the old days, might have made for a useful undergraduate senior thesis that a student would crank on for a semester.
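To make the idea concrete, here is a heavily simplified Rust sketch of node copying in the spirit of Sarnak and Tarjan, for an insert-only, unbalanced BST stored in an arena. It is not the author's code, and it skips the rotations and back pointers the full technique handles; `PersistentBst`, `Side`, and the single `modification` slot layout are illustrative assumptions. Each node carries one spare slot: a pointer change fills the slot if it is empty, and only a node whose slot is already full gets copied, with the copy then linked into its parent the same way.

```rust
// Hypothetical, simplified node copying: one spare "modification" slot per node.
// Rotations and parent (back) pointers are omitted; the search path stands in for them.

#[derive(Clone, Copy, PartialEq)]
enum Side { Left, Right }

struct Node {
    key: i32,
    left: Option<usize>,
    right: Option<usize>,
    /// (version it takes effect, which child, new child pointer)
    modification: Option<(usize, Side, Option<usize>)>,
}

struct PersistentBst {
    nodes: Vec<Node>,          // arena indexed by usize
    roots: Vec<Option<usize>>, // roots[v] is the root of version v
}

impl PersistentBst {
    fn new() -> Self {
        PersistentBst { nodes: Vec::new(), roots: vec![None] }
    }

    /// Child pointer of node `id` as seen by version `v`.
    fn child(&self, id: usize, side: Side, v: usize) -> Option<usize> {
        let n = &self.nodes[id];
        match n.modification {
            Some((mv, ms, mc)) if ms == side && mv <= v => mc,
            _ => if side == Side::Left { n.left } else { n.right },
        }
    }

    fn contains(&self, v: usize, key: i32) -> bool {
        let mut cur = self.roots[v];
        while let Some(id) = cur {
            let k = self.nodes[id].key;
            if key == k {
                return true;
            }
            cur = self.child(id, if key < k { Side::Left } else { Side::Right }, v);
        }
        false
    }

    /// Inserts into the newest version; returns the new version number.
    fn insert(&mut self, key: i32) -> usize {
        let old = self.roots.len() - 1;
        let new = old + 1;
        let mut path = Vec::new(); // (node, side we descended into)
        let mut cur = self.roots[old];
        while let Some(id) = cur {
            let k = self.nodes[id].key;
            if key == k {
                self.roots.push(self.roots[old]); // unchanged; share the old root
                return new;
            }
            let side = if key < k { Side::Left } else { Side::Right };
            path.push((id, side));
            cur = self.child(id, side, old);
        }
        self.nodes.push(Node { key, left: None, right: None, modification: None });
        let mut child = Some(self.nodes.len() - 1);
        while let Some((id, side)) = path.pop() {
            if self.nodes[id].modification.is_none() {
                // Cheap case: record the pointer change in the spare slot and stop.
                self.nodes[id].modification = Some((new, side, child));
                self.roots.push(self.roots[old]);
                return new;
            }
            // Slot already used: copy the node with its current fields, then fix its parent.
            let mut left = self.child(id, Side::Left, old);
            let mut right = self.child(id, Side::Right, old);
            if side == Side::Left { left = child } else { right = child }
            let node_key = self.nodes[id].key;
            self.nodes.push(Node { key: node_key, left, right, modification: None });
            child = Some(self.nodes.len() - 1);
        }
        self.roots.push(child); // copying reached the root
        new
    }
}

fn main() {
    let mut t = PersistentBst::new();
    for k in [5, 3, 8, 1] {
        t.insert(k);
    }
    println!("v3 has 1: {}, v4 has 1: {}", t.contains(3, 1), t.contains(4, 1));
    println!("nodes allocated: {}", t.nodes.len());
}
```

For the sequence 5, 3, 8, 1 this allocates 5 nodes, whereas plain path copying would allocate 8 (each new leaf plus a copy of every node on its search path). Old versions stay readable because every read of a child pointer checks the slot's version stamp.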

I'm at a point where I now have modest confidence in the correctness of my vibe code (e.g., it's got property-based tests that check the invariants, and also doesn't crash under load, despite lots of internal calls to Option::expect()), but I'm not confident enough to share it. It's not bad but not great.

At some point, I'll write up something useful about what I've learned about how to vibe code (in short, write vicious unit tests or you're doomed), but meanwhile I thought I'd skip straight to the data.
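In the spirit of "write vicious unit tests", here is a hand-rolled, property-based style check in Rust. It is sketched against a deliberately simple path-copying persistent BST (unbalanced, insert-only), not the author's trees. After every random insert it checks that each saved version is still sorted and still matches a `BTreeSet` snapshot taken when that version was made. The `SplitMix64` generator is inlined so the sketch needs no crates; a real suite would more likely use a crate such as proptest or quickcheck.

```rust
use std::collections::BTreeSet;
use std::rc::Rc;

// Deliberately simple persistent BST using path copying (unbalanced, insert-only).
enum Tree { Leaf, Node(Rc<Tree>, i64, Rc<Tree>) }

/// Returns a new version; nodes off the search path are shared with the old one.
fn insert(t: &Rc<Tree>, key: i64) -> Rc<Tree> {
    match &**t {
        Tree::Leaf => Rc::new(Tree::Node(Rc::new(Tree::Leaf), key, Rc::new(Tree::Leaf))),
        Tree::Node(l, k, r) => {
            if key < *k {
                Rc::new(Tree::Node(insert(l, key), *k, Rc::clone(r)))
            } else if key > *k {
                Rc::new(Tree::Node(Rc::clone(l), *k, insert(r, key)))
            } else {
                Rc::clone(t) // already present: share the whole version
            }
        }
    }
}

fn in_order(t: &Tree, out: &mut Vec<i64>) {
    if let Tree::Node(l, k, r) = t {
        in_order(l, out);
        out.push(*k);
        in_order(r, out);
    }
}

/// splitmix64: a tiny deterministic PRNG so failures are reproducible from the seed.
struct SplitMix64(u64);
impl SplitMix64 {
    fn next(&mut self) -> u64 {
        self.0 = self.0.wrapping_add(0x9E37_79B9_7F4A_7C15);
        let mut z = self.0;
        z = (z ^ (z >> 30)).wrapping_mul(0xBF58_476D_1CE4_E5B9);
        z = (z ^ (z >> 27)).wrapping_mul(0x94D0_49BB_1331_11EB);
        z ^ (z >> 31)
    }
}

/// After each random insert, every saved version must be strictly sorted and
/// equal to the oracle snapshot recorded when that version was created.
fn check_random_inserts(seed: u64, steps: usize) -> Result<(), String> {
    let mut rng = SplitMix64(seed);
    let mut versions = vec![Rc::new(Tree::Leaf)];
    let mut snapshots = vec![Vec::new()];
    let mut oracle = BTreeSet::new();
    for step in 0..steps {
        let key = (rng.next() % 1000) as i64;
        let next = insert(versions.last().unwrap(), key);
        oracle.insert(key);
        versions.push(next);
        snapshots.push(oracle.iter().copied().collect());
        for (v, (tree, expected)) in versions.iter().zip(&snapshots).enumerate() {
            let mut got = Vec::new();
            in_order(tree, &mut got);
            if !got.windows(2).all(|w| w[0] < w[1]) {
                return Err(format!("step {step}: version {v} is not sorted"));
            }
            if &got != expected {
                return Err(format!("step {step}: version {v} diverged from the oracle"));
            }
        }
    }
    Ok(())
}

fn main() {
    match check_random_inserts(42, 200) {
        Ok(()) => println!("all versions consistent"),
        Err(e) => println!("FAILED: {e}"),
    }
}
```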

For comparison, I also included Rust's "im_rc" crate, which includes a human-written HAMT by @bodil; I'm using the faster "Rc" version since that's how I vibe-coded all those others.

Punchline 1: @bodil wins. Their work is the "IM HashMap" in the graph. Higher is better. X-axis is problem size and Y-axis is throughput. The benchmark was 80% reads and 20% a mix of inserts, deletions, and updates. Every "version" is saved, creating (hopefully) real memory pressure.
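The workload described above could be generated along these lines. This is a hypothetical Rust sketch, not the author's Criterion harness: the 7/7/6 split (per 100 operations) of the 20% writes among inserts, deletions, and updates, the uniform key distribution, and the fixed seed are all assumptions. It covers only the operation mix; keeping every version alive would be the job of the benchmark loop holding on to each persistent map it produces.

```rust
#[derive(Debug, Clone, Copy, PartialEq)]
enum Op {
    Read(u64),
    Insert(u64),
    Delete(u64),
    Update(u64),
}

impl Op {
    fn key(self) -> u64 {
        match self {
            Op::Read(k) | Op::Insert(k) | Op::Delete(k) | Op::Update(k) => k,
        }
    }
}

/// splitmix64: small deterministic PRNG, so runs are reproducible without crates.
struct SplitMix64(u64);
impl SplitMix64 {
    fn next(&mut self) -> u64 {
        self.0 = self.0.wrapping_add(0x9E37_79B9_7F4A_7C15);
        let mut z = self.0;
        z = (z ^ (z >> 30)).wrapping_mul(0xBF58_476D_1CE4_E5B9);
        z = (z ^ (z >> 27)).wrapping_mul(0x94D0_49BB_1331_11EB);
        z ^ (z >> 31)
    }
}

/// Hypothetical mix: 80% reads; the other 20% split 7/7/6 (per 100 ops)
/// among inserts, deletions, and updates. Keys are uniform in 0..key_space.
fn generate_ops(seed: u64, count: usize, key_space: u64) -> Vec<Op> {
    let mut rng = SplitMix64(seed);
    (0..count)
        .map(|_| {
            let key = rng.next() % key_space;
            match rng.next() % 100 {
                0..=79 => Op::Read(key),
                80..=86 => Op::Insert(key),
                87..=93 => Op::Delete(key),
                _ => Op::Update(key),
            }
        })
        .collect()
}

fn main() {
    let ops = generate_ops(42, 10_000, 1_000);
    let reads = ops.iter().filter(|op| matches!(op, Op::Read(_))).count();
    let max_key = ops.iter().map(|op| op.key()).max();
    println!("{reads} of {} operations are reads; max key {:?}", ops.len(), max_key);
}
```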

Punchline 2: The Sarnak-Tarjan optimization definitely helps for the various binary trees, but my attempt to do it for HAMT ended up costing a factor of two in perf. Yikes.

Graph below generated by Criterion.rs. Yes, the colors are horrible. Measured on my M1 MacBook Air, because why not.