Peter G. Neumann has passed away. 93 years old.

Update: John Markoff, at the New York Times, has published an obituary. https://www.nytimes.com/2026/05/17/obituaries/peter-g-neumann-dead.html

| Twitter | https://twitter.com/danwallach |

| Github | https://github.com/danwallach |

| Homepage | https://www.cs.rice.edu/~dwallach/ |

| Medium | https://medium.com/@dwallach |

Update:

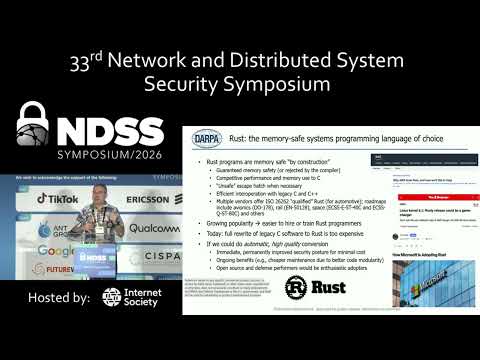

Talk video is now online: https://youtu.be/3bAAVNKhsfY

Webpage: https://www.ndss-symposium.org/ndss2026/keynote-by-prof-dan-wallach/

PSA: I have just cleaned a set of Russian misinfo spambots from this instance.

⚠️ We moderate registrations, and have invites enabled. ⚠️

This is how they got in:

8 days ago (Mar 29) they requested an account. The request did not appear LLM-generated. It was topical enough to meet our flexible, informal threshold, and read as clumsy English.

6 days ago (Apr 1) I reviewed and approved the account.

Their signup email accounts were from the emailondeck tempmail provider. Each domain was different. I only bother looking at the email domain if there are other red flags. (A lot of people who choose us are technical with their own email domains. Random domains don't stand out.)

They signed up from an IPv6 address and only ever connected from 1 IP.

They made 0 posts from this account. They did not set a bio. They did not set a PFP.

They created 1 invite code for 5 uses.

5 days ago this invite code was used to make 1 account. They did not post anything, nor did they set a bio or PFP.

That second account, and all other accounts, used IPv4. They also only connected from 1 IP.

Over the next 4 days, the final 4 accounts via that invite code were made.

These accounts set bios that were a few random words and an emoji. They made the non-hashtag variety of posts the misinfo accounts make: bland word-salad poetry. They boosted a bunch of other posts to try to look normal.

All IPs involved have been labelled by https://spur.us as OPEN_ROUTABLE_PROXY and the IPv4 ones were also labelled as TOR_PROXY.

The admin interface identifies the posts as using clients called "ssl", "scsi", "ib".
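The signals described above can be collected into a simple checklist. The following is only a minimal sketch: the `Signup` record, its field names, and the `tempmail_domains` set are hypothetical, not part of any real admin API, and the proxy labels are simply the two strings quoted in this post.

```python
from dataclasses import dataclass

# Labels quoted in the post; how they are obtained is left out of this sketch.
PROXY_LABELS = frozenset({"OPEN_ROUTABLE_PROXY", "TOR_PROXY"})


@dataclass
class Signup:
    """Hypothetical summary of what an admin can see about one account."""
    email_domain: str
    ip_labels: frozenset
    post_count: int
    has_bio: bool
    has_avatar: bool
    invites_created: int


def red_flags(signup, tempmail_domains):
    """Return the names of the red flags that apply to this signup."""
    flags = []
    if signup.email_domain in tempmail_domains:
        flags.append("tempmail")
    if signup.ip_labels & PROXY_LABELS:
        flags.append("proxy_ip")
    if signup.post_count == 0 and not signup.has_bio and not signup.has_avatar:
        flags.append("empty_profile")
    # An account that hands out invites before ever posting, as the first one did.
    if signup.invites_created > 0 and signup.post_count == 0:
        flags.append("invites_before_posting")
    return flags
```

No single flag is conclusive (plenty of legitimate users post nothing at first), so a list like this is better used to decide which accounts deserve a second look than to ban automatically.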

The cleanup procedure:

Ban the accounts immediately.

Deactivate the invite code immediately.

Review other recently created accounts and invite codes.

Write this all down for future reference.
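For the review step, it helps to follow the invite chain outward from a known-bad account. A minimal sketch, assuming you can export a hypothetical `invited_by` mapping (account name to the account whose invite code it used):

```python
from collections import deque


def invited_descendants(root, invited_by):
    """List every account reachable from `root` through invite codes.

    `invited_by` is a hypothetical export: account -> inviting account.
    """
    children = {}
    for account, inviter in invited_by.items():
        children.setdefault(inviter, []).append(account)

    found = []
    seen = {root}
    queue = deque([root])
    while queue:
        current = queue.popleft()
        for child in children.get(current, []):
            if child not in seen:  # guard against malformed data
                seen.add(child)
                found.append(child)
                queue.append(child)
    return found
```

Running this on the first account would have returned all five accounts created through its invite code, plus anything those accounts had invited in turn.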

Notes:

It's easy to be complacent when account creation is moderated or gated by invite codes.

Chances are that if I had not noticed, each of the active accounts would have created its own invite code, and there would have been another 20 or more of them.

I've recently been playing around with vibe coding some basic tree-like data structures (treaps, red-black trees, AVL trees, and hash-array mapped tries) in Rust, and then twisting the arm of the LLM to do an optimization from Sarnak and Tarjan (1986) that lets you keep a version history without paying O(log n) path copying costs. This is the sort of thing that, in the old days, might have made for a useful undergraduate senior thesis that they'd crank on for a semester.

I'm at a point where I now have modest confidence in the correctness of my vibe code (e.g., it's got property-based tests that check the invariants, and also doesn't crash under load, despite lots of internal calls to Option::expect()), but I'm not confident enough to share it. It's not bad but not great.

At some point, I'll write up something useful about what I've learned about how to vibe code (in short, write vicious unit tests or you're doomed), but meanwhile I thought I'd skip straight to the data.

For comparison, I also included Rust's "im_rc" crate, which includes a human-written HAMT by @bodil; I'm using the faster "Rc" version since that's how I vibe-coded all those others.

Punchline 1: @bodil wins. Their work is the "IM HashMap" in the graph. Higher is better. X-axis is problem size and Y-axis is throughput. The benchmark was 80% reads and 20% a mix of inserts, deletions, and updates. Every "version" is saved, creating (hopefully) real memory pressure.

Punchline 2: The Sarnak-Tarjan optimization definitely helps for the various binary trees, but my attempt to do it for HAMT ended up costing a factor of two in perf. Yikes.

Graph below generated by Criterion.rs. Yes, the colors are horrible. Measured on my M1 MacBook Air, because why not.

This is great: https://blog.trailofbits.com/2025/11/25/constant-time-support-lands-in-llvm-protecting-cryptographic-code-at-the-compiler-level/

LLVM 22 (and presumably all subsequent versions) now has a constant-time select intrinsic that lets cryptographic code tell the compiler exactly what it needs (i.e., evaluate both sides of a conditional expression, then select the one you want), replacing gross bit-hacking expressions that newer optimizers would unravel.
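As a reminder of what those bit-hacking expressions look like, here is the usual mask-based select, written as a minimal Python sketch over 32-bit values. It shows only the arithmetic: Python makes no timing guarantees whatsoever, and `select_u32` is a name made up for this illustration, not the LLVM intrinsic.

```python
MASK32 = 0xFFFFFFFF


def select_u32(cond, a, b):
    """Return a if cond == 1, b if cond == 0, without branching on cond.

    Illustration only: the caller must pass cond as exactly 0 or 1.
    """
    mask = (-cond) & MASK32  # 1 -> 0xFFFFFFFF, 0 -> 0x00000000
    return (a & mask) | (b & ~mask & MASK32)
```

In C or Rust, an optimizer is free to recognize this pattern and turn it back into a branch, which is the unraveling problem that a dedicated intrinsic is meant to prevent.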

Trail of Bits developed constant-time coding support for LLVM that prevents compilers from breaking cryptographic implementations vulnerable to timing attacks, introducing the __builtin_ct_select family of intrinsics that preserve constant-time properties throughout compilation.

I think this needs to be repeated, since I tend to be quite negative about all of the 'AI' hype:

I am not opposed to machine learning. I used machine learning in my PhD and it was great. I built a system for predicting the next elements you'd want to fetch from disk or a remote server that didn't require knowledge of the algorithm that you were using for traversal and would learn patterns. This performed as well as a prefetcher that did have detailed knowledge of the algorithm that defined the access path. Modern branch predictors use neural networks. Machine learning is amazing if:

The second of these is really important. Most machine-learning systems will have errors (the exceptions are those where ML is really used for compression[1]). For prefetching, branch prediction, and so on, the cost of a wrong answer is very low (you just do a small amount of wasted work), but the benefit of a correct answer is huge: you don't sit idle for a long period. These are basically perfect use cases.

Similarly, face detection in a camera is great. If you can find faces and adjust the focal depth automatically to keep them in focus, you improve photos, and if you do it wrong then the person can tap on the bit of the photo they want to be in focus to adjust it, so even if you're right only 50% of the time, you're better than the baseline of right 0% of the time.

In some cases, you can bias the results. Maybe a false positive is very bad, but a false negative is fine. Spam filters (which have used machine learning for decades) fit here. Marking a real message as spam can be problematic because the recipient may miss something important, whereas letting the occasional spam message through wastes a few seconds. Blocking a hundred spam messages a day is a huge productivity win. You can tune the probabilities to hit this kind of threshold. And you can't easily write a rule-based algorithm for spotting spam because spammers will adapt their behaviour.
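To make the tuning concrete, here is a minimal sketch of choosing a decision threshold from labelled mail so that the false-positive rate stays within a budget. The function name, the scores, and the convention that a message is flagged when its score is at or above the threshold are all assumptions made for this illustration.

```python
def pick_threshold(scores, is_spam, max_false_positive_rate):
    """Smallest threshold whose false-positive rate is within budget.

    A message is flagged as spam when score >= threshold. A lower threshold
    catches more spam, so the smallest acceptable one is returned.
    """
    ham_scores = [s for s, spam in zip(scores, is_spam) if not spam]
    # float("inf") flags nothing, so the loop always finds an answer.
    candidates = sorted(set(scores)) + [float("inf")]
    for threshold in candidates:
        false_positives = sum(1 for s in ham_scores if s >= threshold)
        if false_positives <= max_false_positive_rate * len(ham_scores):
            return threshold


scores = [0.1, 0.2, 0.6, 0.7, 0.9, 0.95]
is_spam = [False, False, False, True, True, True]
strict = pick_threshold(scores, is_spam, 0.0)  # never flag real mail
loose = pick_threshold(scores, is_spam, 0.5)   # tolerate some false positives
```

With a zero budget the threshold has to sit above the highest-scoring real message; loosening the budget lets it drop and catch more spam at the price of occasionally flagging real mail.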

Translating a menu is probably fine; the worst that can happen is that you get to eat something unexpected. Unless you have a specific food allergy, in which case you might die from a translation error.

And that's where I start to get really annoyed by a lot of the LLM hype. It's pushing machine-learning approaches into places where there are significant harms for sometimes giving the wrong answer. And it's doing so while trying to outsource the liability to the customers who are using these machines in ways in which they are advertised as working. It's great for translation! Unless a mistranslated word could kill a business deal or start a war. It's great for summarisation! Unless missing a key point could cost you a load of money. It's great for writing code! Unless a security vulnerability would cost you lost revenue or a copyright infringement lawsuit from having accidentally put something from the training set directly in your codebase in contravention of its license would kill your business. And so on. Lots of risks that are outsourced and liabilities that are passed directly to the user.

And that's ignoring all of the societal harms.

[1] My favourite of these is actually very old. The hyphenation algorithm in TeX trains short Markov chains on a corpus of words with ground truth for correct hyphenation. The result is a Markov chain that is correct on most words in the corpus and is much smaller than the corpus. The next step uses it to predict the correct breaking points in all of the words in the corpus and records the outliers. This gives you a generic algorithm that works across a load of languages and is guaranteed to be correct for all words in the training corpus and is mostly correct for others. English and American have completely different hyphenation rules for mostly the same set of words, and both end up with around 70 outliers that need to be in the special-case list in this approach. Writing a rule-based system for American is moderately easy, but for English is very hard. American breaks on syllable boundaries, which are fairly well defined, but English breaks on root words and some of those depend on which language we stole the word from.
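The structure described in the footnote (a small model plus a list of the words it gets wrong) can be sketched in a few lines. This is a toy under loud assumptions: `toy_model` is a made-up rule standing in for the learned patterns, and the two-word corpus is invented for the example. Neither reflects what TeX actually ships.

```python
VOWELS = set("aeiou")


def toy_model(word):
    """Made-up stand-in for the learned model: break after a vowel
    whenever it is followed by a consonant."""
    return frozenset(
        i for i in range(1, len(word))
        if word[i - 1] in VOWELS and word[i] not in VOWELS
    )


def build_exceptions(corpus, model):
    """Record every corpus word on which the model disagrees with ground truth."""
    return {word: breaks for word, breaks in corpus.items() if model(word) != breaks}


def hyphenation_points(word, model, exceptions):
    """Exceptions win; everything else falls through to the model."""
    return exceptions.get(word, model(word))


# Ground truth as break positions: "ta-ble" -> {2}, "rec-ord" -> {3}.
corpus = {"table": frozenset({2}), "record": frozenset({3})}
exceptions = build_exceptions(corpus, toy_model)
```

Because every disagreement is stored, lookups are correct for the whole corpus by construction, and the exception list stays small as long as the model is right most of the time.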