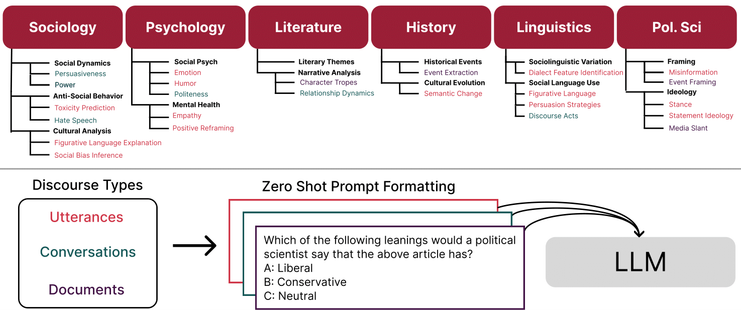

(by @caleb_ziems with @Held, @omar, @diyiyang). Key excerpt below: zero-shot models (e.g. ChatGPT) don't currently outperform fine-tuned FLAN.

Modeling Linguistic Variation for Inclusive NLP

ML PhD advised by @diyiyang at Georgia Tech/Stanford

Alum hackNY '17 & NYU Abu Dhabi '19

Burqueño

he/him

Personal Site: https://williamheld.com/