I want to emphasize this because when I talk about AI security reports now, half my readers seem to believe those are AI slop. They're not. They are found with AI tools and are normally high-quality bug reports.

The weakest part is that they tend to overstress the vulnerability angle. Lots of them are well-phrased bug reports that are still "just bugs".

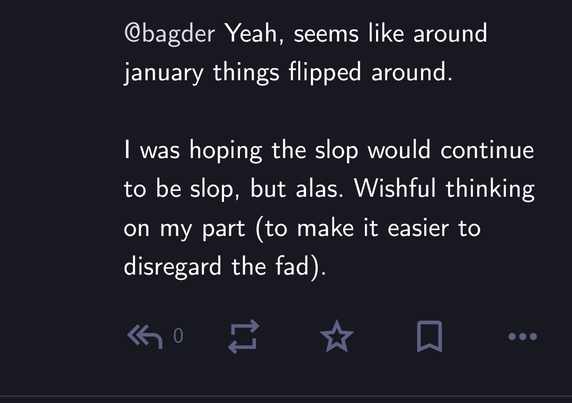

@bagder Yeah, seems like around January things flipped around.

I was hoping the slop would continue to be slop, but alas. Wishful thinking on my part (to make it easier to disregard the fad).

@bagder I get this with fwupd too. Everything that's AI found is reported as a CVSS 10.0 CRITICAL vulnerability, and then you find out it's assuming the attacker has write access on /etc or something dumb like that.

At that point it's just a regular old typo bugfix like all the other thousands of unimportant commits.

@bagder I see:

- good ones using AI as part of a rigorous process with replication

- mediocre ones where someone asks an AI "Find me a CVE", submits the report without review or replication, and yet still expects credit

If "have write access to the filesystem" is a prerequisite to an exploit, it's not an exploit. You already have total ownership of the server.

@bagder Do reporters share the tools used, or are there strong tool indicators in the reports?

Curious about which tool(s) are most successful, at least for cURL research.

I imagine in most cases reporters don't mention the tools used (especially if custom), which is unfortunate.

There are still little to no consequences for wasting time. I've been thinking about the "name and shame" approach you have; maybe that helps change the behavior?

@nicolas17 @j_s_j Well, I can imagine that the expensive AI models really got better. This new one is just a perfect example, but in general LLMs changed a lot at the end of last year.

I have to use Claude at work and it really boosts productivity. It won't code a whole project for you, but if you know what you are doing, these tools really speed up the work.

@bagder I love how you changed your opinion on this topic when you saw real evidence in the form of good security reports written by AI.

If someone had written this two years ago, I would have said they were delusional, but today it's just reality.

I hope we soon get open models with such capabilities, as for now only the gatekept models from big tech are capable of doing such good work.

curl disclosed on HackerOne: Argument Injection via curl Short-Flag...

This report details how the curl -os command facilitates an Argument Injection vulnerability in applications that wrap the curl command-line tool. The specific command curl -os /etc/passwd --url http://example.com demonstrates a subtle but dangerous behavior. Because -s (silent) follows -o (output), curl expects the very next string to be the filename. In this scenario: The -o flag consumes the...
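
For applications that wrap the curl command-line tool, here is a minimal sketch of one way such a wrapper might sidestep this class of argument injection, assuming the wrapper is written in Python. It builds the argument list explicitly, uses long option names, and refuses user-supplied values that look like options. `build_curl_argv` and `ALLOWED_SCHEMES` are hypothetical names made up for illustration; this is not curl's behavior and not a fix proposed in the report.

```python
from urllib.parse import urlsplit

# Assumption for this sketch: the wrapper only ever needs plain web fetches.
ALLOWED_SCHEMES = {"http", "https"}


def build_curl_argv(url: str, output_path: str) -> list[str]:
    """Build an argv list for curl from untrusted input (hypothetical helper)."""
    for value in (url, output_path):
        # A value starting with "-" could be read as an option by the tool,
        # so reject it instead of trying to escape it.
        if value.startswith("-"):
            raise ValueError(f"refusing option-like value: {value!r}")

    if urlsplit(url).scheme not in ALLOWED_SCHEMES:
        raise ValueError(f"unsupported URL scheme in {url!r}")

    # Long options, one value each, passed as separate list items so that
    # no shell ever re-parses the string.
    return ["curl", "--silent", "--output", output_path, "--url", url]
```

The resulting list would typically be handed to `subprocess.run(argv, check=True)` without `shell=True`, so each item reaches curl as exactly one argument.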

@pozorvlak To me, the most interesting part of that thread was this post.

This person considers AI their enemy. But not because it is wasting Stenberg's time. They wanted it to continue to waste Stenberg's time, so that they could continue to hate it more.

@pozorvlak Now I think a more reasonable interpretation is: they are concerned about copyright violations, environmental damage, etc., and are dismayed that people like me use AI anyway. The fact of its getting better doesn't fix the other problems, and just means that there are fewer arguments against using it.

(“This is terrible” vs. “This is terrible, maybe when people realise that it doesn't work, they will stop.”)

@bagder Seems like all you need to do to get rid of the low-effort reports is take away the incentive.

Sad that they had to ruin it for the real reporters, who now no longer get their (deserved) bounty in exchange for the good work they’re doing.