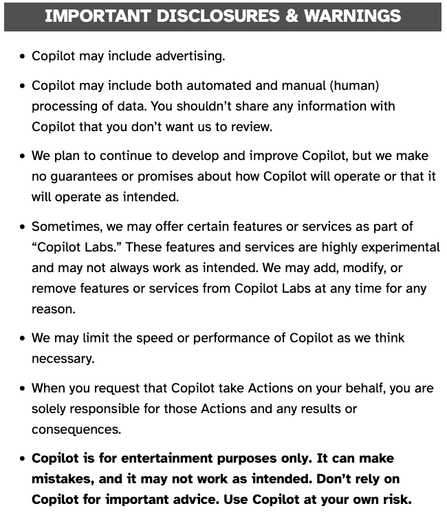

RE: https://cosocial.ca/@mhoye/116325415496773597

@mhoye If they tell me that something is "for entertainment purposes only," while selling it to me as a revolution in the way people write software and/or conduct business, they have established that lying for the purposes of covering their ass is how they do business.

I have other sources of entertainment, and if there's one thing I've learned, it's that leopards who offer to shave your beard for entertainment purposes only always wind up having your face for lunch.

@mhoye Only a completely corrupt and captured legal system would ever accept "For entertainment purposes only" as a disclaimer conferring immunity from liability.

C'mon. Who spends literally billions of dollars to make AI just to entertain people? Can they show the jury their business plan to pivot to entertainment? Is this the Xbox division?

@mhoye "Here's Excel. It's buggy. Go ahead and use it for the pro-forma cash flow and income statements you need to raise funds, but if _our_ code screws up and _you_ are arrested for fraud, leave us out: Excel is for entertainment purposes only."

If that is ridiculous, so is the Copilot weasel-clause.

@raganwald @mhoye Pharma companies should heighten the contradictions by labeling their drugs as “for entertainment purposes only.”

Would also make a dandy shield against malpractice. “Your splenectomy was for entertainment purposes only. While I understand you didn’t find my little gratis surprise – the resection of your liver – entertaining, you can hardly blame me for trying to lighten your day.”

> we make this tool but we cannot guarantee that it does something and if it does we cannot guarantee that it does it correctly. Use at your own risk also it's not a tool.

@Sphinx_Pouet @noodlemaz I might have gone through the same reaction as I think you did.

"This can't possibly be real. It *must* be satire."

"..."

"Apparently, in the grim future of 2026, Poe's law is the only law."

www.microsoft.com/en-us/microsoft-copilot/for-individuals/termsofuse

- Do not taunt Copilot.

- If Copilot begins to smoke, get away immediately. Seek shelter and cover head.

- Copilot contains a liquid core, which, if exposed due to rupture, should not be touched, inhaled, or looked at.

- Ingredients of Copilot include an unknown glowing green substance which fell to Earth, presumably from outer space.

- Discontinue use of Copilot if any of the following...

@mhoye "For example, we can’t promise that any Copilot’s Responses won’t infringe someone else’s rights (like their copyrights, trademarks, or rights of privacy) or defame them."

Remember that little period when Microsoft was promising to compensate GitHub Copilot users if they were sued for IP violations for Copilot output?

Anyway.

@mhoye "We don’t own Your Content, but we may use Your Content to operate Copilot and improve it. By using Copilot, you grant us permission to use Your Content, which means we can copy, distribute, transmit, publicly display, publicly perform, edit, translate, and reformat it, and we can give those same rights to others who work on our behalf."

We don't technically own your content, but we absolutely can do anything we want to with it. So can anyone else if we want them to. Suck it, peons.