RE: https://infosec.exchange/@malwarejake/115695789576148295

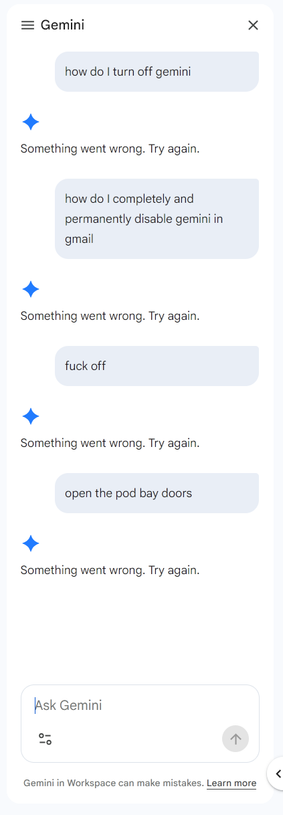

Infosec industry AI hype: AI agents automating full attack chains, AI-generated polymorphic code, SKYNET!!

Infosec AI reality: Using AI products as a glorified pastebin

https://mastodon.social/@malwarejake@infosec.exchange/115695789609999560