* 45% of all AI answers had at least one significant issue.

* 31% of responses showed serious sourcing problems – missing, misleading, or incorrect attributions.

* 20% contained major accuracy issues, including hallucinated details and outdated information.

Kicker: A separate study found "just over a third of UK adults saying that they trust AI to produce accurate summaries, rising to almost half for people under-35"

https://www.bbc.co.uk/mediacentre/2025/new-ebu-research-ai-assistants-news-content

They note "Comparison between the BBC’s results earlier this year and this study show some improvements but still high levels of errors" but don't address the question of whether the industry has any idea of how to solve the underlying problem

(spoiler: they don't)

#AIIsGoingGreat "A US teenager was handcuffed by armed police after an [AI] system mistakenly said he was carrying a gun - when really he was holding a packet of crisps… AI alert was sent to human reviewers who found no threat - but the principal missed this"

Tossup whether this belongs here or in the "cops being abusive shitbags" thread*, but it does highlight how the "sure AI fails but just have a human check" line is mostly CYA for vendors

#AIIsGoingGreat, supplemental: "Google’s controversial new AI Mode has falsely named an innocent Sydney Morning Herald graphic designer as the man who confessed to abducting and murdering three-year-old Cheryl Grimmer more than 50 years ago … appears to have latched onto the designer’s name instead, given he was credited for an illustration " - Perfect illustration of how #LLM "AI" fills in the blanks with statistically plausible BS

Who could have predicted that if you present a statistical text completion machine with a scenario that mirrors a trope frequently found in the training set, it may produce output which follows the trope. SKYNET!!!!

https://www.wired.com/story/chatbots-are-pushing-sanctioned-russian-propaganda/

"Patrick Gelsinger took the reins at Gloo, a technology company made for what he calls the “faith ecosystem” – think Salesforce for churches, plus chatbots and AI assistants for automating pastoral work and ministry support"

Uh… "Lu recommends that leaders start by steering workers toward tasks that AI clearly handles better than humans and where personalization is unnecessary, such as numeric estimation and forecasting tasks" - numeric estimation tasks more or less demanding than estimating the number of times "r" appears in strawberry? 🤔

https://www.businessinsider.com/inside-ai-divide-roiling-video-game-giant-electronic-arts-2025-10

Good rebuttal to the "but humans make mistakes too" or "just treat it like an intern" excuses for LLM failings: "A lawyer reviewing a first-year associate’s work likely expects some errors flowing from inadequate research or an incomplete understanding of the law. They do not suspect straight-up fictitious content"

Deceptive Dynamics of Generative AI: Beyond the “First-Year Associate” Framing - Slaw

Guidance for lawyers on generative AI use consistently urges careful verification of outputs. One popular framing advises treating AI as a “first-year associate”—smart and keen, but inexperienced and needing supervision. In this column, I take the position that, while this framing helpfully encourages caution, it obscures how generative AI can be deceptive in ways that […]

"there is also the lesser-known prospect of [subtler than fake citation] hallucinations: a date altered here, part of a legal test changed there. These more subtle hallucinations are harder to detect and mean that where accuracy is paramount, extreme caution and rigourous verification is warranted when relying on AI outputs. In some situations, the vetting burden may, in fact, outweigh any efficiency gains" 💯

"[CFO] Sarah Friar has told some associates the company is aiming for a 2027 listing … But some advisers predict it could come even sooner, around late 2026 … A successful offering would mark a major win for investors such as SoftBank, Thrive Capital and Abu Dhabi's MGX. Microsoft, one of its biggest backers, now owns about 27% of the company after investing $13 billion" - Sure, they're building god, but "IPO before the bottom drops out" is a nice backup plan

#AIIsGoingGreat "As the deepfake gathered views on X, some users asked the platform’s AI chatbot Grok whether it was authentic. In at least two replies seen by BBC Verify, which have now been deleted, Grok wrongly claimed the video was genuine"

(I remain gobsmacked by the number of people who ask a chatbot to verify purported current events. Even if you're an LLM optimist, this seems like a task they are spectacularly unsuited for)

https://www.bbc.com/news/live/c4gjv2xdl5dt?post=asset%3A884ecf7b-139a-4033-a61b-73fc82891a49#post

"These centres will cost $2.5tn to build, according to Barclays, to service an industry that still doesn’t turn a profit. But the maddest bit arguably is how much energy they will require once completed. Using Barclays’ 1.2 “Power Use Effectiveness” ratio, all these data centres — if they are all completed — would need 55.2 gigawatts of electricity to function at full capacity"

https://www.ft.com/content/2b849dbd-1bef-4c26-aa11-2cb86750d41e
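For reference, PUE is conventionally defined as total facility power divided by the power delivered to IT equipment. Assuming the 55.2 GW figure is the total facility draw at Barclays' ratio of 1.2, the implied IT load is about 46 GW. The remaining 9.2 GW would go to cooling, power conversion and other overhead:

$$\text{PUE} = \frac{P_{\text{facility}}}{P_{\text{IT}}} \quad\Rightarrow\quad P_{\text{IT}} = \frac{55.2\ \text{GW}}{1.2} = 46\ \text{GW}, \qquad P_{\text{facility}} - P_{\text{IT}} = 9.2\ \text{GW}$$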

Via that FT article "Beyond sheer density, AI workloads introduce a second, equally formidable challenge: volatility. Unlike a traditional data center running thousands of uncorrelated tasks, an AI factory operates as a single, synchronous system … This creates a facility-wide power profile characterized by massive and rapid load swings … The power draw of a rack can swing from an “idle” state of around 30% to 100% utilization and back again in milliseconds"

Hard to see how torching a few trillion dollars on the altar of FOMO could possibly go wrong #AIIsGoingGreat

https://www.theverge.com/ai-artificial-intelligence/812455/ai-industry-earnings-bubble-fomo-hype

Thought I was joking about collateralized GPU obligations*, but here we are: "private-equity firms put up or raise the money to build a data center, which a tech company will repay through rent. Data-center leases from, say, Meta can then be repackaged into a financial instrument that people can buy and sell—a bond, in essence … leases can be combined into a security and sorted into what are called “tranches” based on their risk"

https://www.theatlantic.com/technology/2025/10/data-centers-ai-crash/684765/

Ah yes, who could have predicted that a probabilistic text generator trained on the sum total of the world's new age hocus pocus would attract a cultish following?

I'm with the experts in the article who doubt it qualifies as a cult itself, but I bet it will be the foundation of a few

https://www.rollingstone.com/culture/culture-features/spiralist-cult-ai-chatbot-1235463175/

#ChatGptLawer roundup from @arstechnica (leaning heavily on Damien Charlotin's excellent database*)

Today's #AIIsGoingGreat: Going so great we gotta wear shades

https://www.404media.co/power-companies-are-using-ai-to-build-nuclear-power-plants/

RE: https://tldr.nettime.org/@tante/115564591798368145

Another problem with the "but lots of normies like AI" argument @anildash doesn't engage with is that a lot of popular use cases are actively harmful to those same users, e.g. AI "summaries" that randomly inject falsehoods. Lots of people like smoking cigarettes too, but that doesn't make it morally defensible to go around handing them out, even if your tobacco is more ethically sourced than the big brands!

https://mastodon.social/@tante@tldr.nettime.org/115564592068950117

Achievement unlocked: Scoffing critic

Today's #AIIsGoingGreat (ht @dangillmor*) "Kolakowski, who serves on California’s Alameda County Superior Court, soon realized why: The video had been produced using generative artificial intelligence. Though the video claimed to feature a real witness — who had appeared in another, authentic piece of evidence — Exhibit 6C was an AI “deepfake,” Kolakowski said"

I used a neural network trained on decades of tech industry corporate speak to summarize this document and all it came up with was "vacuous horseshit"

https://blog.mozilla.org/en/mozilla/rewiring-mozilla-ai-and-web/

If AI were the amazing efficiency booster the hype claims, shouldn't all those medium to large non-AI focused companies be posting gains? 🤔

In today's #AIIsGoingGreat (ht @daedalus) Deloitte charges Newfoundland and Labrador $1.6 million (CAD, presumably) for a report with AI-hallucinated citations, and then insists it "stands by its conclusions and findings" and just needs to fix the citations. As ever in these cases, the question of how they came up with the assertions the citations supposedly supported is not addressed

https://theindependent.ca/news/lji/deloitte-breaks-silence-on-n-l-healthcare-report/

* https://mastodon.social/@daedalus@eigenmagic.net/115619136163500955

#AIIsGoingGreat 'Instead, per [District Judge Sara Ellis's] footnote, body camera footage revealed that an agent “asked ChatGPT to compile a narrative for a report based off of a brief sentence about an encounter and several images.” The officer reportedly submitted the output from ChatGPT as the report'

https://gizmodo.com/judge-says-ice-used-chatgpt-to-write-use-of-force-reports-2000692370

This suggests a good question to ask healthcare providers who are falling over themselves to shove* #AI into everything: Does your malpractice insurance cover AI related errors?

* e.g. https://mastodon.social/@reedmideke/115047332404466187

In today's #AIIsGoingGreat (ht @GossiTheDog*) the Economist brings us this chart of Goldman Sachs' index of companies with the "largest estimated potential change to baseline earnings from AI adoption via increased productivity" vs the S&P500

* https://mastodon.social/@GossiTheDog@cyberplace.social/115638306307720246

The same article notes that "According to a poll of executives by Deloitte, a consultancy, and the Centre for AI, Management and Organisation at Hong Kong University, 45% reported returns from AI initiatives that were below their expectations"

Loyal readers may recall that Deloitte itself was recently featured in this thread* charging big bucks for hallucinated BS

I feel like the various surveys about "what percent of workers use AI at work" would be more informative if "use" was defined more specifically. You can hardly use Microsoft or Google's business suites without stepping in AI somewhere, but that doesn't mean users are benefiting from it. The Census Bureau's "in producing goods and services" qualification may be confusing, but it at least suggests the AI has to have some material role

Alternative hypothesis that AI doesn't help people who actually do shit remains unexplored

https://www.businessinsider.com/executives-adopting-ai-higher-rates-than-workers-research-2025-10

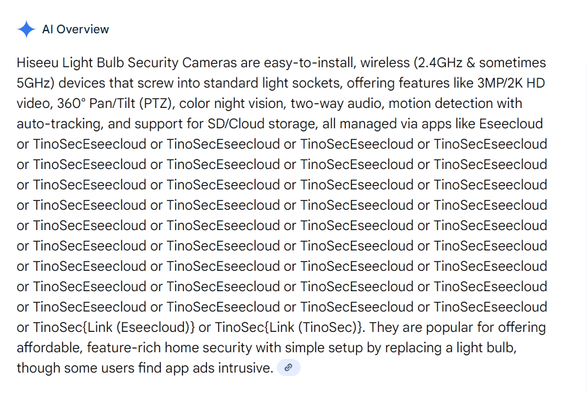

#AIIsGoingGreat. See replies in thread for more greatness. Apologists will say stuff like "that's a silly question, just look at the calendar on your phone, no one uses google for that" but I'm sorry, if you dumped a few hundred billion dollars into this magic answer machine and you can't get it to stop doing stupid shit like this, I'm gonna be a *little* skeptical that it's ready to run health care, solve climate change and revolutionize science

Bonus #AIIsGoingGreat - With the power of #AI, I predict that by 2026 there will be at least 30 "r"s in "year"

(I did this a second time in a new private window because I realized after I closed the first one I should see what the supposedly supporting link was…)

edit: one more for old times' sake

RE: https://infosec.exchange/@timb_machine/115657160615736269

A succinct "WTF are we even doing here" that applies to vast swathes of the use cases GenAI is being hyped for, to which the entire industry has no coherent response 👇

https://mastodon.social/@timb_machine@infosec.exchange/115657160659807487

The optimistic scenario here is this is just a cynical attempt to jump on the AI gravy train knowing the bubble will pop before anything gets built…

https://www.404media.co/nuclear-rian-bahran-iaea-international-symposium-on-artificial-intelligence/

Today's #AIIsGoingGreat, courtesy of the UK NCSC: "SQL injection can be properly mitigated with parameterised queries, but there's a good chance prompt injection will never be properly mitigated in the same way. The best we can hope for is reducing the likelihood or impact of attacks" - Will this affect the market's willingness to throw more billions on the #LLM bonfire? Probably not, but only time will tell

¯\_(ツ)_/¯

https://www.ncsc.gov.uk/blog-post/prompt-injection-is-not-sql-injection
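The SQL side of the NCSC's comparison fits in a few lines. This is a minimal sketch using Python's built-in `sqlite3` module and a hypothetical `users` table. With a parameterised query, the SQL text and the untrusted input are passed separately, so the input can only ever be treated as data. The NCSC's point is that an LLM prompt has no equivalent boundary, because instructions and data end up in the same stream of text.

```python
import sqlite3

def find_user_unsafe(conn, name):
    # Vulnerable: the untrusted input is spliced directly into the SQL text
    return conn.execute(f"SELECT id FROM users WHERE name = '{name}'").fetchall()

def find_user_safe(conn, name):
    # Parameterised: the ? placeholder keeps the input as a value, never as SQL
    return conn.execute("SELECT id FROM users WHERE name = ?", (name,)).fetchall()

# Hypothetical in-memory table, just for illustration
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])

payload = "nobody' OR '1'='1"
print(find_user_unsafe(conn, payload))  # every row comes back: the payload rewrote the WHERE clause
print(find_user_safe(conn, payload))    # [] : the payload is compared as a literal name
```

The `?` placeholder is sqlite3's parameter style. Other database drivers use different placeholder syntax, but the principle is the same.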

https://therecord.media/trump-plans-ai-exec-order-curbing-state-laws

RE: https://infosec.exchange/@malwarejake/115695789576148295

Infosec industry AI hype: AI agents automating full attack chains, AI polymorphic code, SKYNET!!

Infosec AI reality: Using AI products as a glorified pastebin

https://mastodon.social/@malwarejake@infosec.exchange/115695789609999560

'… saying that developers should not "intentionally encode partisan or ideological judgments" into a chatbot's outputs' - Ah yes, a text-generating machine derived from a statistical soup of vast amounts of human-written text must not "encode partisan or ideological judgments". Totally realistic requirement there, guys, and definitely not a transparent attempt to impose your own partisan and ideological preferences

Everyone is rightly mocking the fact the bot suggests apt on Fedora, but I would also like to point out that the wifi "diagnosis" is crap. Sure, checking for updated firmware and drivers is reasonable, but it's vanishingly unlikely the problem is insufficient system RAM or "aggressive driver configuration", whatever the heck that would be

https://fedoramagazine.org/find-out-how-your-fedora-system-really-feels-with-the-linux-mcp-server/

Today's #AIIsGoingGreat "Inasmuch as you are going to have to double-check every “fact” that “AI” provides to you, why not eliminate the middleman and just not use “AI”? It’s not decreasing your workload here, it’s adding to it"

#AIIsGoingGreat "Asked whether Taiwan is a country, it would repeatedly lower its voice and insist that “Taiwan is an inalienable part of China. That is an established fact” or a variation of that sentiment"

There's a whiff of "OMG X is rotting kids' brains" moral panic about this, but also, the entire concept of an #LLM powered toy just seems like asking for trouble in a whole bunch of ways. Even ignoring the possible psychological impacts, it's indisputable the industry does not have a way to create reliable guardrails, and internet connected toys generally have a long history of egregious privacy violations

This piece is a genuinely good rundown of how LLMs are BS machines, and then goes on to say "you can use LLMs to get incredible gains in how fast you can do tasks like research, writing code, etc. assuming that you are doing it mindfully with the pitfalls in mind" 🥴

I remain unconvinced that the productivity gains survive the "you must have a subject matter expert verify every single thing it did" overhead, but YMMV, I guess

Bonus #AIIsGoingGreat makes all those billions in capex worth it

'Slop' is Merriam-Webster's 2025 word of the year

Merriam-Webster’s 2025 word of the year is “slop.” The word was first used in the 1700s to mean soft mud. It evolved more generally to mean something of little value. The definition has since expanded to mean “digital content of low quality that is produced usually in quantity by means of artificial intelligence.” In other words, as the dictionary's president says, “absurd videos, weird advertising images, cheesy propaganda, fake news that looks real, junky AI-written digital books." The dictionary has selected one word every year since 2003 to capture and make sense of the current moment.

"… and explained that AIs have emotions and that tech firms were working to create a new form of sentience, according to Discord logs and conversations with members of the group" https://www.404media.co/anthropic-exec-forces-ai-chatbot-on-gay-discord-community-members-flee/

RE: https://researchbuzz.masto.host/@researchbuzz/115782207999466966

On the bright side, if you've just got to set a trillion dollars and change on fire, doing it in a way that doesn't require blowing a bunch of people up is an improvement of sorts, I suppose

https://mastodon.social/@Researchbuzz@researchbuzz.masto.host/115782208145625995

I have mixed feelings about Zitron's rants but anyway, collateralized GPU obligations* in the wild! "As a result, these neoclouds are forced to raise billions of dollars in debt, which they collateralize using the GPUs they already have, along with contracts from customers, which they use to buy more GPUs"

https://www.wheresyoured.at/the-enshittifinancial-crisis/#coreweave-is-still-a-time-bomb-by-the-way

The Enshittifinancial Crisis

Behold the awesome power of #AI, the product of billions of dollars in GPU time, simplifying your life by precisely summarizing the most pertinent information

Google AI assures me* that "microslop coprolite" is a recent viral internet joke, and with your help, we can retcon that into reality

* with hallucitations that in no way support the claim

Shot: "xAI announced Tuesday it raised $20 billion in an upsized Series E funding round, exceeding its $15 billion target"

Second chaser: "Nvidia and Cisco Investments joined as strategic investors" in the series E above

(is it good or bad if the CSAM-generating machine is propped up by circular investing? 🤔)

#AIIsGoingGreat "The problem? Neither of those places exist. Nor do a handful of the other spots marked on the National Weather Service’s forecast graphic, riddled with spelling and geographical errors that the agency confirmed were linked to the use of generative AI"

Thing that boggles my mind about this is NWS has tools for generating forecast maps. It's one of their core products!

Shot: 'OpenAI announced ChatGPT Health, a dedicated section of the AI chatbot designed for “health and wellness conversations” intended to connect a user’s health and medical records to the chatbot in a secure way'