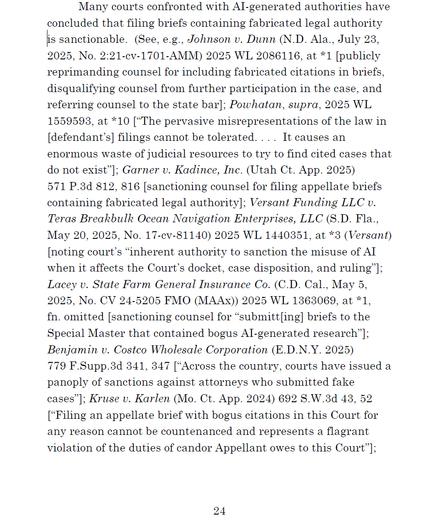

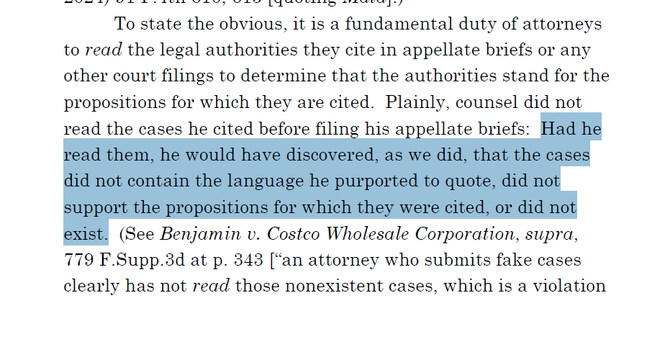

Glad to see news outlets pointing out that #LLM chatbots aren't reliable sources of information about themselves. Way too many people who should know better fall for the "chatbot did weird thing, so I asked it to explain and it said…"

However, it should be pointed out that this isn't a special case: they're equally likely to BS about loads of other stuff!

https://www.theverge.com/x-ai/758595/chatbots-lie-about-themselves-grok-suspension-ai

(also https://arstechnica.com/ai/2025/08/why-its-a-mistake-to-ask-chatbots-about-their-mistakes/)