setting StateDirectory=foo in a systemd unit makes it create the directory /var/lib/foo and set $STATE_DIRECTORY to that

https://www.freedesktop.org/software/systemd/man/latest/systemd.exec.html#RuntimeDirectory=

$STATE_DIRECTORY will expand in your Exec= declaration but not in e.g. Environment=

so i can't say

Environment=DB_FILENAME=${STATE_DIRECTORY}/database.sqlite3

...

Exec=/usr/bin/command

instead i have to

Exec=env DB_FILENAME=${STATE_DIRECTORY}/database.sqlite3 /usr/bin/command

is there a prettier way?

there is!

StateDirectory=%N

Environment=DB_FILENAME=%S/%N/database.sqlite3
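for the service's side of this, a minimal sketch, assuming the program is python and reads its own environment. `resolve_db_path` is a made-up helper name, not part of systemd or any library. it prefers an explicit `DB_FILENAME` (as set by the `%S/%N` trick above) and falls back to `$STATE_DIRECTORY`, which systemd may fill with several colon-separated paths.

```python
import os
from pathlib import Path


def resolve_db_path(env=os.environ):
    """Pick the sqlite path: explicit DB_FILENAME first, else under STATE_DIRECTORY."""
    explicit = env.get("DB_FILENAME")
    if explicit:
        return Path(explicit)
    # systemd may list several directories separated by ":"; use the first one.
    first = env.get("STATE_DIRECTORY", "").split(":")[0]
    if not first:
        raise RuntimeError("neither DB_FILENAME nor STATE_DIRECTORY is set")
    # the file name is the one used in the unit above
    return Path(first) / "database.sqlite3"
```

that way the same binary works whether the unit sets `Environment=` or only `StateDirectory=`.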

setting up xmpp service on an ipv6-only vm with a graciously provided sniproxy to reverse-proxy ipv4 traffic to it

xmpp seems fine. i think my fantastic vps will let me forward those ports but even if not you can make xmpp share http(s) ports with a webserver with some hacks

but if i want to do voice and video, i'll need stun/turn to help ipv4 users

stun seems fine. client connects to it, server says "you appear to me to be (address):(port)," done.

turn however...

turn is for when no clever way can be found to connect clients directly to each other; they both connect to a turn server which just proxies: it shovels data back and forth to each client

even though my host would probably grant a request to proxy traffic through their ip4->ip6 machine to my turn service, which would proxy it again, that seems wasteful, squandering a currently free community resource. i think i will build this out pretending that i can't do that

i'm not sure if there's any need for stun or turn with ipv6 clients, but otoh ipv6 nats are possible

like when your isp assigns you a /64 but you want to set up several subnets

think i'll rely on the reverse proxy to enable xmpp for ipv4-only users, and just say that voice and video is unavailable to them. the only real users of my xmpp server are my immediate family, and if both happen to be on an ip4-only network and need voice/video, they can get on the vpn

i got an ipv6-only vm from my sweet hosting provider

they gave me a /56 worth of ipv6 address space to play in. woah.

said my vm is currently using only the first /64 of it.

my vm's address is (prefix)::1/64, amazing!

so uh

how do i plug servers and containers into the rest of my available space? do i have to request each address get added to a table somewhere? (no)

(to be continued. but i think i did it, and understand it now!) 🎉

(i might do this as a blog instead)
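to get a feel for how much room a /56 is, a quick sketch with python's builtin `ipaddress` module. `2001:db8::/56` is a documentation prefix standing in for the real allocation, and `list_subnets` is just a name i picked for illustration.

```python
import ipaddress


def list_subnets(allocation, new_prefix=64):
    """Return every /64 (by default) that fits inside the given allocation."""
    return list(ipaddress.ip_network(allocation).subnets(new_prefix=new_prefix))


# 2001:db8::/56 is a placeholder, not the real prefix
subnets = list_subnets("2001:db8::/56")
print(len(subnets))   # how many /64s fit in the /56
print(subnets[0])     # the first /64, the one the vm already lives in
print(subnets[1])     # the next /64, free for containers
```

a /56 leaves 8 bits between it and a /64, so there are 2^8 = 256 subnets to hand out.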

woo! connected to a test instance of prosody xmpp on port 443 (https) using xmpp's direct-tls protocol

which means

- hopefully, certain employers won't block the traffic anymore

- hopefully, it can pass through sniproxy the same way https traffic does, which will enable ipv4 users to talk to my ipv6 xmpp server

there's still different ways i can think of to wire all this together, wondering if any would be better

other ways i could wire it up:

instead of using a separate ip address so it and caddy can both listen on port 443, i could have caddy reverse proxy to it

might need to put it behind a proxy anyway because it might not handle PROXY protocol from sniproxy

might need to put it behind a proxy anyway so iocaine can slap the llm scrapers that try it

maybe run it in a container and figure out how to get those their own ip address

wow incus (anti-ubuntu fork of lxd) and its web ui is pretty slick

also libvirt and virt-manager connected to lxc offers the ability to create an application container or an operating system container

(compared to incus which says application containers require docker?)

this feels like a deep rabbit hole, hope i can get to grips with it soon

all right incus there you go, a whole-ass lvm volume group all to yourself, let's see what you do with it

also: learning wtf a network bridge is and how to use one 🧑🎓📖 instead of the usual winging it with vague guesswork and assumptions from context

is xmpp's direct-tls protocol (usually on port 5223) the same as its unencrypted protocol (usually port 5222) wrapped in tls? same as imaps and pops and https? so could i terminate the tls with haproxy and reverse proxy to an unencrypted xmpp server?

the protocol is all spec'd in RFCs for anybody to look at but they don't wanna get in my brain

also, aw, the wikipedia article for xmpp describes as an example a transport for icq, rip https://en.wikipedia.org/wiki/XMPP

the docs don't reveal the info i want, and i don't want to try reading reams of source code in an unfamiliar language right now, so i'll set up an experiment and see how it behaves i guess

(the only activity that ever feels slightly close to doing science in my field of software-jiggling)
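one shape the experiment could take, as a rough sketch. as far as i understand XEP-0368, direct-tls is the ordinary client stream started inside an already-established tls session, with `xmpp-client` as the alpn name. `probe_direct_tls` and `stream_header` are hypothetical helpers; host, port and domain are whatever you're testing.

```python
import socket
import ssl


def stream_header(domain):
    """Opening XMPP stream tag a client sends; the same text with or without tls around it."""
    return (
        "<?xml version='1.0'?>"
        f"<stream:stream to='{domain}' version='1.0' xml:lang='en' "
        "xmlns='jabber:client' "
        "xmlns:stream='http://etherx.jabber.org/streams'>"
    )


def probe_direct_tls(host, port, domain, timeout=10.0):
    """Do the tls handshake first, then speak plain xmpp inside it; return the first reply."""
    context = ssl.create_default_context()
    context.set_alpn_protocols(["xmpp-client"])  # alpn name taken from XEP-0368
    with socket.create_connection((host, port), timeout=timeout) as raw:
        with context.wrap_socket(raw, server_hostname=domain) as tls:
            tls.sendall(stream_header(domain).encode("utf-8"))
            return tls.recv(4096).decode("utf-8", errors="replace")
```

if the reply is a `<stream:stream ...>` from the server, that's decent evidence that a tls-terminating proxy in front of a plaintext listener could work.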

my ipv4-only client

-> ipv4-to-ipv6 sniproxy port 443

-> ipv6-only vm

-> haproxy to conditionally unwrap proxy protocol

-> prosody xmpp server

... experiment is working ✨🤩✨
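what "conditionally unwrap proxy protocol" means, sketched in python rather than haproxy config. this only handles the human-readable v1 header, and whether sniproxy sends v1 or v2 is an assumption on my part. `parse_proxy_v1` is a name made up for this example.

```python
def parse_proxy_v1(data):
    """Split a PROXY protocol v1 header off the front of a byte stream.

    Returns (info, rest). info is None when the stream carries no header,
    which is the "conditional" part: direct connections pass through untouched.
    """
    if not data.startswith(b"PROXY "):
        return None, data
    line, sep, rest = data.partition(b"\r\n")
    if not sep:
        raise ValueError("incomplete PROXY header")
    fields = line.decode("ascii").split(" ")
    if fields[1] == "UNKNOWN":
        return {"family": "UNKNOWN"}, rest
    if len(fields) != 6:
        raise ValueError("malformed PROXY header")
    _, family, src, dst, src_port, dst_port = fields
    info = {
        "family": family,
        "src": src,            # the real client address, as seen by the proxy
        "dst": dst,
        "src_port": int(src_port),
        "dst_port": int(dst_port),
    }
    return info, rest
```

the addresses in the test data below are documentation ranges, not real hosts.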

calling it now: even though haproxy has lots of sharp edges, i like it, or its configuration mechanism, way more than caddy's

- i seem to be able to make stuff work in haproxy that takes struggle and uncertainty in caddy

- caddy's magical get-your-free-ssl-cert-automatically is nice when you want to stand up an experiment but i like cronned certbot for "prod"

- otoh caddy has a nice builtin static webserver 🤷

i think i'm going to be stuck using both for a while

migrating xmpp services from my old vps to my colocataires vm is the last thing remaining to do before i'm able to delete the old account (and stop paying for it)

(dreamcompute hasn't been bad, but i like colocataires way better)

now that i've proven to myself that it can work over port 443 and ipv6-only, it's time to configure it properly

next: see if i can move the service over without xmpp clients complaining

but first, sleep 😴

all the clients we usually use on android and linux are now connecting to my new xmpp server at colocataires, with no settings changes, on the https port so it looks like website traffic 😮

i don't have stun/turn turned back on yet, so voice and video calls probably won't work just yet

i don't have the converse.js web client set back up yet but that's mostly for emergency use

this may be success enough to decommission the old servers! 🎉

https://conversejs.org/ is back up on my domain and working just fine 🎉

old dreamcompute vps is turned off, sitting there just in case for a bit, then i can delete my account 🎉

(i don't hate dreamcompute but i like https://colocataires.dev way better)

think i'm just gonna leave stun/turn not running for now. if both parties are on ipv6-capable networks, calls should work. let's see how often that's an issue

if i want to set up stun/turn, i should abandon my somewhat irrational ipv6-purist intentions and pay the loonie for an ipv4 address

if i'm gonna do that then maybe i can keep almost all of my vm ipv6-only, except for one container that runs coturn?

if i'm gonna do that then maybe i can figure out how to make that container, that only does whatismyipaddress and proxy video calls, shareable with my datacenter neighbors

my prosody xmpp setup in its new server mostly works great (assuming ipv6 capable network) but somewhere i've introduced a timeout that closes the connection after a uniform number of seconds

pretty sure it has something to do with haproxy, though from a skim of the docs these timeouts are supposed to apply to the initial connection setup, not inactivity

also after a chat with someone more knowledgeable i think i'm resigned to eventually acquire that ipv4 address

i think i might have fixed haproxy closing the socket on my long-lived idle xmpp connections by setting timeout tunnel 1h

i'll check again in several hours to be sure

wish i knew more precisely why this fixes the issue. are clients and/or the server sending keepalive messages more often than 1h but less often than 10m? is the tcp keepalive stuff not being used? someday perhaps but more likely i'll leave it unexamined as long as it works

recent impulses have been like

"this is too long for toots i should blog about it"

"but i don't want to put anything new or deploy a new website until i have something installed to block the scrapers like iocaine"

"so let's install it"

"ehh not enough brain rn, maybe next time"

so i set up some rules to block a good portion of bots (until they smarten up)

which frees me up to actually post some blog 👍

i'll install iocaine properly after that

i want to set up a photo storage server

photoprism seems like a good browsing interface but what i'm more concerned about rn is the upload

so a client on each android phone that backs up photos to the server

but i want to be able to turn the server off for a while, as a normal/expected thing one does, and not have the clients moan about it. they should just retry occasionally until the server comes back online

anybody have a setup like this running already?

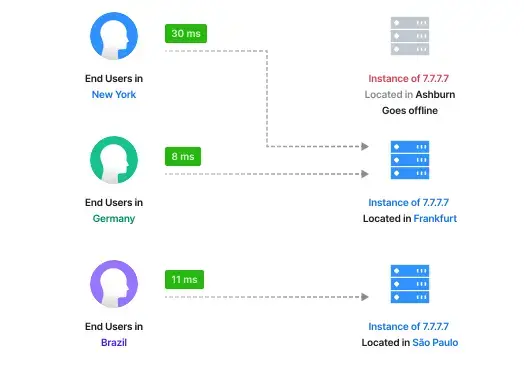

musing about how to do high(ish)-availability systems on the cheap, goblin style

- i got this sweet vm on a friend's server

- someday i wanna convince employers they're better off in there, than on aws

- if the host, or the whole datacenter, or the hose from the internet to that datacenter get orbital-lasered to slag in the middle of the business day, how to automatically shunt traffic to backup systems?

- dns records are too cached, too slow to flip

...

- also don't want to send all traffic through a load balancing proxy, expensive and another single point of failure

but wait i have some tradeoffs that might enable some tricks:

- i want high availability for existing users but new users can wait for dns to propagate

- the site can be a "progressive web app" that gets mostly cached in the browser

so in this specific case i think i could do client-side js failover. maybe even a service worker?

wait, how often does the whole region disappear anyway?

that was never a concern multiple employers ago when i got to help out at the datacenter

they did redundant everything inside the rack, regular cable-yank failover tests and everything, but no geographical redundancy iirc

maybe i'll inquire about a vm on another host within the same rack when i get closer to dragging clients on board and just forget about higher availability than that for now

embarrassed to admit that i've today taken one halfhearted step toward learning wtf snmp is by way of (re)reading the rrdtool tutorial

no, not smtp the email sending thing. snmp the monitoring of hardware status thing

all because i want to put up some pretty charts of computer doing inscrutable computer thing

(accuracy? that's like number seven or twelve down the list of nice-to-haves)

well, actually,

my ipv4-only client

-> colocataires' ipv4-to-ipv6 sniproxy on port 443

-> my ipv6-only vm

-> haproxy to unwrap proxy protocol

-> prosody xmpp server

... experiment is not working ✨😕✨

so:

did it never work and i mistakenly thought that it did?

or

did it work at first but i broke it?

an easy fix would be to get an ipv4 address which obviates the need for sniproxy. but dammit before i do that i want answers: is this setup possible? if so, what'd i mess up?

(maniacal cackling)

i have finally got iocaine installed. wasn't even hard, just needed to sit down and do the steps and brain is real good at not that sometimes

hooked it up to the apt-installable anarchism faq for its markov corpus and the biggest canadian flavored apt-installable wordlist i could get

feels good. like the invulnerability you get from your favorite winter gloves and jacket before going out to play in the blizzard

now it's safe to blog again 🎉

i um only just now noticed that the apt-installable anarchism faq, in uncompressed markdown format, which i fed to iocaine for its markov corpus,

is twelve megabytes. of text.

almost 1.9 million words.

iocaine seems to be doing just fine so far

accidentally set caddy to syslog every request sent to iocaine 3 and oh gosh my website is pumping so much poison markov trash into chatgpt and claude rn 😍 💕

and it's using less cpu and memory than systemd-journald to do so

might need to look into setting bandwidth limiters on this thing

i'm still casting around for anti-cloud(flare) mechanisms of regional failover. like if the cable to the datacenter i use gets cut, or there's political upheaval, how to automatically shunt traffic to a different datacenter faster than a dns update would propagate through caches

i'm vaguely aware of this technology called anycast but i don't know much

https://grebedoc.dev/ uses https://rage4.com/ to do it

yeah eat it, ai scraper assholes

(gradually improving my monitoring, iocaine stats newly added to my collectd/rrdtool dashboard)

tiddlywiki doesn't come with a basic to-do feature, to make checkboxes and tick them off without having to tediously edit the page and type some [x]s

but it does have a plugin mechanism. found two plugins (both by the same author) that do checklists: Kara and Todolist

installation instructions made me nervous though, since i'm using tiddlyPWA that is rather different on the backend...

i haven't put any rate limiters on here yet (i definitely will), but seems like claude and chatgpt limit themselves to 25 requests per second to my websites. i wonder how they picked that number, and if they'll ramp it up. and if i ratelimit, will they send more requests from other ip addresses. etc.

feels so good to know these assholes' language models are chugging down low-effort ungrammatical poison after ignoring my robots.txt

should i do traffic shaping using tc, haproxy, or shove yet another plugin into caddy?

should i slow the response down to a trickle for all the llm scrapers, or randomly drop their connections? 😈

despite it being part of linux since version 2.2, which is about as long as i've been daily-driving it, i hadn't heard of tc until this past month. that's "traffic control," a tool to control the kernel's network traffic limiting, smoothing, and prioritization

and for a command with such a tiny name wow it's a lot

i only want to restrict the bandwidth of one process so i think i'll look for easier mechanisms before i attempt to swallow this whole burrito

til: trickle, a lightweight userspace bandwidth shaper

could i just wrap iocaine with this and be done?

... except trickle doesn't work on statically linked executables, like iocaine. womp womp

i guess i could do a trick like wrap socat with it, then talk to iocaine through that,

but that feels more complicated than just switching back to haproxy and using its builtin traffic shaping features

what bits of haproxy, lighttpd, nginx, caddy, static-web-server should i string together?

requirements:

- iocaine can plug in somewhere

- can control the bandwidth of iocaine's garbage generator

- static web server

- for multiple domains ("virtual hosts")

- uses sendfile() for speed

- precompressed files trick

- haproxy: has traffic shaping, proxy, fastcgi. no sws; have to proxy one. which?

- lighttpd: small, sendfile(), correct webdav. can do reverse proxy itself, makes haproxy redundant? can i plug in iocaine?

- nginx: i quit it because it was segfaulting when i tried to configure too many features. but if i'm only using it for static files maybe it's ok

- static-web-server: i like rust. don't like a copy paste chunk of toml per configured domain

- caddy: proxy, fastcgi, builtin static fileserver, traffic shaping requires a module that doesn't do quite what i want. getting tired of guessing my way around caddyfile syntax. don't need its magic certificate management.

- use 'tc' for traffic shaping? big learning curve

- front it with haproxy? lots of redundant features, feels heavy

dang i gotta draw up a feature matrix or something

it's pretty weird that it took me this long to actually do but

tonight i have set up for the first time a program running on a computer inside my home, that people may access like a normal website, without learning my cable modem's ip address in the process, and if someone starts ddosing me i can just unplug and let the household continue watching videos unaware

(i'm having my @colocataires vps proxy traffic through a tailscale vpn to my closet fileserver)

safe(r) home-hosting by reverse proxy from a little computer in a datacenter is one of those things that seems like complex esoteric engineering from afar

but once you've experienced it, and then again when you've set it up yourself, all of a sudden it makes sense and is totally normal and a whole mess of possibilities for what you can cheaply and casually build on the internet blasts wide open

like the first time you experience nerd astral projection

llm scrapers ignoring my robots.txt and pounding on my small website 28 times per second, 24/7. 600kbps of my available bandwidth wasted just on markov trash

it's easy to imagine how they'll ddos any service that does a bit of compute on each request
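back-of-envelope math for those numbers, assuming "600kbps" means kilobits per second and that the rate holds steady all day. the function names are just for illustration.

```python
def bytes_per_response(bits_per_second, requests_per_second):
    """Average size of one response, in bytes."""
    return bits_per_second / 8 / requests_per_second


def gigabytes_per_day(bits_per_second):
    """Total transfer over 24 hours, in decimal gigabytes."""
    return bits_per_second / 8 * 86_400 / 1e9


print(bytes_per_response(600_000, 28))  # average bytes of markov trash per request
print(gigabytes_per_day(600_000))       # gigabytes shipped to scrapers per day
```

so each response is only a couple of kilobytes, but it adds up to several gigabytes a day.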

it's not super exciting but if you're the kind of weirdo who wants to look at my vm's gauges, they are viewable here:

https://telemetry.orbital.rodeo/

i have been cobbling it together using collectd, rrdtool, and scripts instead of the far more reasonable and popular prometheus / grafana combo. because it might be more lightweight? haven't measured

for now it updates only when i run the command, so don't sit there wondering

no light mode or explanatory text (yet) soz

oof, it's somewhat heavy though. went from about 3% avg cpu use to about 6%

the throttling that haproxy does just gets buffered up by caddy in front of it and the result is a long initial delay before a fast transmission of data. like latency

which could probably be implemented more simply with a sleep statement somewhere

i wonder what other strategies i can use to slow down crawlers. thinking random connection drops or http errors 429, 402, and 451

problem: once detected, how best to slap back at ai scrapers? return poison quickly? tarpit? throttled poison drip? drop their ip's packets at the firewall?

idea: drop packets during business hours to free up bandwidth for legit visitors; fast poison otherwise to collect ip addresses for next day's ip ban. "party all night sleep all day" strategy
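the "party all night sleep all day" idea as a tiny decision function. the 9-to-5 window is my assumption for what "business hours" means, and `scraper_action` is a hypothetical name; wiring the result into a firewall or proxy is left out.

```python
from datetime import datetime, time

BUSINESS_START = time(9, 0)   # assumed, not from any real config
BUSINESS_END = time(17, 0)


def scraper_action(now):
    """Return "drop" during business hours, "poison" the rest of the time."""
    if BUSINESS_START <= now.time() < BUSINESS_END:
        return "drop"      # free up bandwidth for legit visitors
    return "poison"        # feed garbage and collect addresses for tomorrow's ban


print(scraper_action(datetime(2025, 1, 6, 10, 30)))
print(scraper_action(datetime(2025, 1, 6, 23, 0)))
```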

lots of bot traffic hitting port 80 (http) on my vm just to get redirected to port 443 (https) where they get a "go away, bot" error

who am i keeping port 80 open for?

who types in "orbital.rodeo," lands on http, and doesn't know to or can't try https instead?

many people use hsts and abandon 80

caddy auto-magically puts a redirect on 80 for my sites but i'm increasingly annoyed by its magic. wanna go back to haproxy

think i'll shut it

oh, whoopsie

if an ipv4->ipv6 proxy is telling my webserver the clients' ipv4 addresses using proxy protocol, blocking those ipv4 addresses at the webserver firewall isn't going to do much 🤦

i have a v4 address now, i was just lazy about reconfiguring dns to send v4 web traffic direct to my vm instead of through the v4-v6 proxy. time to get on that

after blocking ai scrapers at the firewall, cut my cpu use down to 1% and bot traffic to almost nothing

you love to see it!

🤔 i wonder how many innocents i'll accidentally shut out if i adopt a policy of, "any /24 prefix with 3 or more scrapers within it dooms the lot"?

🤔 i could set up a "pls let me back in" automation. tell me my biceps are eleven out of ten in this web-form and you get added to an inclusion list that takes effect before the block list

i could implement both of those defense mechanisms

reduce bookkeeping on my part by being a bit overeager about blocking whole prefixes instead of individual ip addresses

definitely want to do something like @alex's butlerian jihad where i block all networks from any ASN abusing my sites

but also, have a cooldown that sends traffic from blocked prefixes to a "let me back in" form that allowlists individual addresses
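both mechanisms together, as a sketch with python's `ipaddress` module. it assumes ipv4 scraper addresses (a /24 means something else in ipv6), and `doomed_prefixes` / `is_blocked` are names invented for this example. the allowlist is checked first, so it wins over the block list.

```python
import ipaddress
from collections import Counter


def doomed_prefixes(scraper_ips, threshold=3, prefix_len=24):
    """Return prefixes that contain at least `threshold` distinct scraper addresses."""
    counts = Counter(
        ipaddress.ip_network(f"{ip}/{prefix_len}", strict=False)
        for ip in set(scraper_ips)
    )
    return {net for net, seen in counts.items() if seen >= threshold}


def is_blocked(ip, blocked, allowlist):
    """Allowlisted addresses get through even when their whole prefix is doomed."""
    addr = ipaddress.ip_address(ip)
    if addr in allowlist:
        return False
    return any(addr in net for net in blocked)
```

the cooldown and the "let me back in" form would sit on top of this: the form adds an address to `allowlist`, nothing more.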

haha oops i accidentally banned my own ip. fixed it but guessing i'll have to flush the ban lists and rebuild in case i caught any more i shouldn't have

one super nice thing i'm doing this time around is using a wireguard-based vpn for all my ssh'ing. so even when i blocked my own ip address my ssh session was unaffected and i could fix it. and zero log spam from vulnerability scanners constantly trying the door 😌

i want to block any requests from google and facebook; also i want to block any isp who would tolerate scrapers

the database of ip range ("prefix") assignments is downloadable but it's big. 590 entries just for as32934 (facebook). too big to just dump into the firewall

but there's often nothing between multiple records for any given asn. maybe i could treat that as a single range, which would let me express the set of ranges to block more concisely 🤔

ooo python's builtin ipaddress library has collapse_addresses and address_exclude functions, and pyasn uses those. if i study those functions i think i should be able to come up with a "collapse_addresses" variant that absorbs unallocated gaps between allocated subnets for a more concise specification

https://github.com/python/cpython/blob/3.14/Lib/ipaddress.py#L304
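a first stab at that variant, built from `ipaddress.collapse_addresses` and `ipaddress.summarize_address_range` rather than by modifying the stdlib code. `collapse_with_gaps` and its `max_gap` parameter are my own invention: any hole of up to `max_gap` addresses between two networks gets swallowed. it treats every gap as unallocated, which is an assumption you'd want to check against the real database before blocking anything.

```python
import ipaddress


def collapse_with_gaps(networks, max_gap=0):
    """Merge networks of one ip version, absorbing gaps of up to max_gap addresses."""
    nets = sorted(ipaddress.collapse_addresses(ipaddress.ip_network(n) for n in networks))
    if not nets:
        return []
    address_cls = type(nets[0].network_address)
    spans = []
    start = int(nets[0].network_address)
    end = int(nets[0].broadcast_address)
    for net in nets[1:]:
        low, high = int(net.network_address), int(net.broadcast_address)
        if low - end - 1 <= max_gap:
            end = max(end, high)          # close enough: extend the current span
        else:
            spans.append((start, end))    # too far: start a new span
            start, end = low, high
    spans.append((start, end))
    result = []
    for first, last in spans:
        result.extend(
            ipaddress.summarize_address_range(address_cls(first), address_cls(last))
        )
    return result
```

for example, `192.0.2.0/25`, `192.0.2.128/26` and `192.0.2.224/27` stay three prefixes under plain collapsing, but with `max_gap=32` the 32-address hole is absorbed and the whole thing becomes one /24.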

"too big to just dump into the firewall"

whoopsie, that was a wrong assumption on my part based on a bad time i had with way too many iptables firewall rules created by fail2ban many years ago

these days i'm using nftables and its set structure to hold ip addresses, which uses radix trees just like the routing tables do, and you can dump addresses in there all day long, it will manage merging them into ranges and auto expiring them if you want, works great

@pho4cexa Interesting. We only see 176 routes active from AS32934 on our edge router.

```
bird> show route protocol bgp_he_v4 where 32934 ~ bgp_path all count
176 of 1038011 routes for 1038011 networks in table master4
```

Also, it's a 1 litre Lenovo MiniPC and it can handle over 1 million IPv4 routes without a sweat -- have you considered creating null routes for all of the networks you don't like instead of firewall rules?

@pho4cexa Yeah, I have an allowlist based on the follows and follow-requests of my account on the single-user fedi instance I run, for example. Haven’t updated it in a while but the idea is I want to block Hetzner and OVH and all that without damaging my fedi experience.

Now that I think about it, checking my followers would make sense, too.

Anyway, the allow list must be based on something – MX records of the email addresses in your contacts would be a candidate, too. That kind of thing. I just haven’t heard of anybody affected by it.

https://alexschroeder.ch/view/2025-12-23-santa-bots

@algernon i'm no expert here and i probably got this wrong. i'm already seeing bandwidth higher than this limit so i suspect this ends up being 7KB per client not per backend. that said:

listen slowpoison

bind localhost:42068

mode http

filter bwlim-out my-limit default-limit 7k default-period 1s

http-response set-bandwidth-limit my-limit

server iocaine localhost:42069

then have caddy proxy to port 42068 instead of 42069

@jamey yes indeed i probably am lol

next step is to start dropping connections rather than feeding them poison, once i've got a few on the line

@jamey @pho4cexa I think that'd be out of scope for iocaine, but... I plan to turn iocaine into a library, and write a reverse proxy that just barely has the functionality to replace the Caddy + iocaine + bazmeg (fail2ban-like thing, but tailored for my particular need) + vector combo with just one thing.

Now that would be a place where this could be done. It would be very difficult, indeed, and I suspect, would require noticeably more CPU than it does now, because I'd have to make the response streamable, in smaller chunks, and that complicates the architecture a lot.

@alex nice! how do you find munin? your dashboard is much more complete and nicer to browse than mine, (https://telemetry.orbital.rodeo) but i'm being super miserly about how many cpu cycles i want to devote to the task. so i'm hesitant about exploring nicer tools like munin or prometheus+grafana.

rn mine's collectd/rrdtool with a graph creation script i run by hand when i want to. which is admittedly way too ducktape and chicken wire. proof of concept

@pho4cexa LLMs were pounding my tiny old site so hard, so often, that my server bills started reaching triple digits.

There was nothing there for them. It was art, cartoons, short stories, everything from 1995 to now, years of publishing and creating, but nothing that should interest scrapers.

I had to take the whole site down. There’s now a single page saying why I had to take it all down.

I hate this stupid timeline and I despise the capitalism that destroyed the one good new commons in the last 75 years, the internet.

@insom oh ho ho you jester i'm not going to write a whole new reverse proxy

i'm just gonna write a whole new static files webserver to run behind haproxy

(i am only about 61.3% joking, this may well yet actually occur)

@algernon i feel bad asking for more features when you already do so much and i haven't (yet) been able to support development with bags of money

also, the engineer in me feeling like this is a puzzle i should be able to solve by clicking the right pieces together

but if you're eager to do it: my primary goal is to rate-limit total garbage sent. i like poisoning the scrapers but i don't want to over-consume a shared resource like bandwidth to do it
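what "rate-limit total garbage sent" could look like, as a sketch of a single token bucket shared by every client instead of a limit per client. this isn't how iocaine or haproxy implement anything; `TokenBucket` is a hypothetical class, and the 7000 bytes/s figure just echoes the 7k limit from the haproxy snippet.

```python
import time


class TokenBucket:
    """One shared byte budget for all clients, refilled at `rate` bytes per second."""

    def __init__(self, rate, capacity, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock          # injectable so the bucket can be tested
        self.tokens = capacity
        self.updated = clock()

    def take(self, wanted):
        """Return how many bytes (0..wanted) may be sent right now."""
        now = self.clock()
        elapsed = now - self.updated
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        granted = min(wanted, int(self.tokens))
        self.tokens -= granted
        return granted


bucket = TokenBucket(rate=7000, capacity=7000)
print(bucket.take(5000))  # first client gets its full chunk
print(bucket.take(5000))  # second client only gets what's left in the budget
```

every response handler would call `take()` on the same bucket before writing, so a thousand scrapers still can't push the total above the budget.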

@algernon so far the scrapers visiting my sites haven't overloaded my instance or made me become a nuisance

but i'm worried about the day they decide to throw a thousand scrapers at 10 req/s at me. i've got nothing in place right now to ensure my little vm on a shared host doesn't start trying to saturate the link

despite the nobility of filling scrapers with that much markov trash, i still want to be polite to my host and neighbor vms

@pho4cexa nod nod, completely understandable. And that is exactly the situation I found myself in, although they saturated my CPU (even when iocaine wasn't in play) before I was able to saturate the network.

So one of the things I'm working on is figuring out how to make... *gestures at all of the puzzle pieces* ...all of these work more efficiently together, and how to make them automatically adapt to load.

@pho4cexa Don't feel bad. I appreciate feature requests, because they usually give me ideas too, and then everyone wins! :)

No need for bags or even small pouches of money. If it's too hard, I have learned to, and trained myself well enough to say "no". If it's too time consuming, I'll implement it whenever I have time.

In short: feature requests are lovely. Demands are not, and you're doing the first, and your thread gave me a couple of fun ideas already!