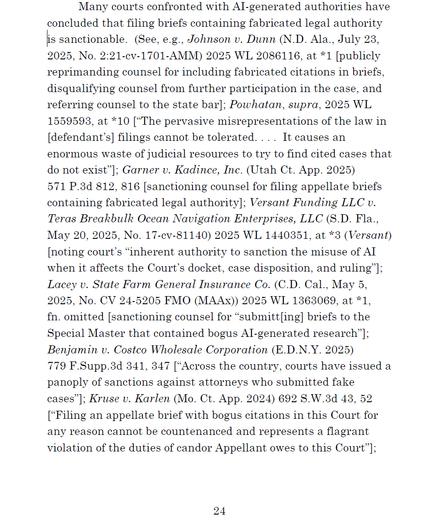

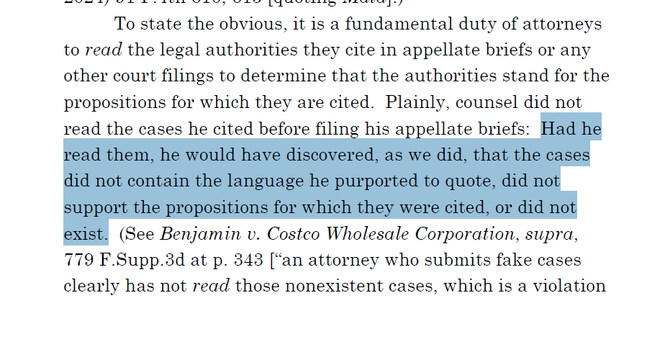

In today's #AIIsGoingGreat (HT @normative.bsky.social*), an intrepid #ChatGPTLawyer finally won based on an apparently slop-filled filing. Unfortunately for them, the opposing party noticed and appealed, to which our budding prompt engineer responded with… another slop-filled filing, this time to the appeals court. The appeals court was not amused: "we impose a $2,500 frivolous motion penalty on Lynch, which is the most the law allows."

https://caselaw.findlaw.com/court/ga-court-of-appeals/117442275.html#

* https://mastodon.social/@normative.bsk[email protected]/114795731130848546