Bonus #AIIsGoingGreat "OpenAI announced an agreement to supply more than 2 million workers for the US federal executive branch access to ChatGPT and related tools at practically no cost: just $1 per agency for one year" - OK, they're obviously trying to get people at the agencies hooked so the they'll cough up real money next year, but that also doesn't exactly scream a product so revolutionary and transformative that everyone wants it

"We've solved raspberry and now if we can just fix blueberry, I swear AGI is RIGHT AROUND THE CORNER. Throw another hundred billion on the bonfire!"

https://kieranhealy.org/blog/archives/2025/08/07/blueberry-hill/

Blueberry Hill

ChatGPT 5 was released today. ChatGPT-maker OpenAI has unveiled the long-awaited latest version of its artificial intelligence (AI) chatbot, GPT-5, saying it can provide PhD-level expertise. Billed as “smarter, faster, and more useful,” OpenAI co-founder and chief executive Sam Altman lauded the company’s new model as ushering in a new era of ChatGPT. “I think having something like GPT-5 would be pretty much unimaginable at any previous time in human history,” he said ahead of Thursday’s launch. GPT-5’s release and claims of its “PhD-level” abilities in areas such as coding and writing come as tech firms continue to compete to have the most advanced AI chatbot.

Glad to see news outlets pointing out that #LLM chatbots aren't reliable sources of information about themselves: Way too many people who should know better fall for the "chatbot did weird thing, so I asked it to explain and it said…"

However it should be pointed out that this isn't a special case, they're equally likely to BS about loads of other stuff!

https://www.theverge.com/x-ai/758595/chatbots-lie-about-themselves-grok-suspension-ai

(also https://arstechnica.com/ai/2025/08/why-its-a-mistake-to-ask-chatbots-about-their-mistakes/)

Today's #AIIsGoingGreat, via @Iris: Elsevier "values user experience, hence we develop ways of improving our product" such as having machines invent new, random definitions of terms and attaching them prominently to published papers

https://irisvanrooijcogsci.com/2025/08/12/ai-slop-and-the-destruction-of-knowledge/

Related to this, I recently discovered that Elsevier uses these AI generated "definitions" on standalone "topic" pages, which rank highly in google. Bonus: The slop is free, but the articles referenced are of course frequently paywalled. Example https://www.sciencedirect.com/topics/engineering/air-fuel-ratio

(this particular definition seems OK, if extremely basic)

Don't worry, in the glorious #AI future, you'll still have choice! For example, you can choose to have your (or your children's) medical details filtered through a stochastic bullshit machine, or you can choose to forgo treatment https://ia.acs.org.au/article/2025/kobi-refused-a-doctors-ai-she-was-told-to-go-elsewhere.html

We Are Still Unable to Secure LLMs from Malicious Inputs - Schneier on Security

Nice indirect prompt injection attack: Bargury’s attack starts with a poisoned document, which is shared to a potential victim’s Google Drive. (Bargury says a victim could have also uploaded a compromised file to their own account.) It looks like an official document on company meeting policies. But inside the document, Bargury hid a 300-word malicious prompt that contains instructions for ChatGPT. The prompt is written in white text in a size-one font, something that a human is unlikely to see but a machine will still read. In a proof of concept video of the attack...

Today's #AIIsGoingGreat (HT @hazelweakly*) sheds light on whether there might be risks associated with the industry's headlong rush to adopt a technology for which input validation is literally impossible

https://embracethered.com/blog/posts/2025/wrapping-up-month-of-ai-bugs/

* https://mastodon.social/@hazelweakly@hachyderm.io/115138692622938480

Reverse dogfood #AIIsGoingGreat "Most [of the interview google AI training] workers said they avoid using LLMs or use extensions to block AI summaries because they now know how it’s built. Many also discourage their family and friends from using it, for the same reason"

https://www.theguardian.com/technology/2025/sep/11/google-gemini-ai-training-humans

https://arstechnica.com/ai/2025/09/education-report-calling-for-ethical-ai-use-contains-over-15-fake-sources/

Department of Education and Early Childhood Development spokes says they are aware of a "small number of potential errors in citations" and "We understand that these issues are being addressed, and that the online report will be updated in the coming days to rectify any error" - Ignoring the obvious problem that if the citations are BS, arguments or conclusions they were supporting were likely unjustified at best, if not outright BS

#AIIsGoingGreat "Americans are much more concerned than excited about the increased use of AI in daily life, with a majority saying they want more control over how AI is used in their lives"

Also pleased to see the stuff people are concerned about mostly isn't skynet

Don't totally disagree the basic arguments, but…

1) He suggests the "infrastructure bubble" may "lead to positive outcomes, because overcapacity will mean falling prices for those who want to use that infrastructure" - Probably true for data centers, but less clear for $ trillions in AI chips. AFAIK compute tends to be dominated by energy cost, so even at fire sale prices older chips may be of limited use

https://www.fastcompany.com/91400857/there-isnt-an-ai-bubble-there-are-three-ai-bu

2) His offers NFTs as an example of a "hype bubble" and then points to Amazon, Google and Paypal as examples of real value that emerged from the dotcom bubble. I agree with both, but… can anyone point to an Amazon or Google equivalent that emerged from the NFT bubble? Or anything of value at all to anyone other than speculators, scammers and crooks?

I can't, and while my gut says the AI stuff is probably closer to dotcom than NFTs, how much is far from obvious

https://www.fastcompany.com/91400857/there-isnt-an-ai-bubble-there-are-three-ai-bu

In today's #AIIsGoingGreat (HT @markwyner*) MIT boffins offer us an "AI Incident Tracker project" which "classifies real-world, reported incidents by AI Risk Repository risk domain, causal factors, and harm caused"

Sounds useful, right? But how exactly do they classify them? "Using a Large Language Model (LLM), the tool processes raw reports from the AI Incident Database and categorizes them using established frameworks" 🤨

https://airisk.mit.edu/ai-incident-tracker

* https://mastodon.social/@markwyner@mas.to/115249150911541318

Meanwhile, California appeals court fines #ChatGPTLawyer Amir Mostafavi ten grand for "filing a frivolous appeal, violating court rules, citing fake cases, and wasting the court’s time and the taxpayers money"

https://calmatters.org/economy/technology/2025/09/chatgpt-lawyer-fine-ai-regulation/

The court observes "Many courts confronted with AI-generated authorities have concluded that filing briefs containing fabricated legal authority is sanctionable" and backs it up with a page of (presumably non-hallucinated) citiations

Bonus #AIIsGoingGreat (HT @acdha*) features Cascade PBS and KNKX using public records requests to get local Washington governments #LLM chatlogs

* https://mastodon.social/@acdha@code4lib.social/115253478967518855

Nice interview (via @ink*) with reporter Nate Sanford about how the project came about, along with tips for people who want to make similar requests

https://www.poynter.org/reporting-editing/2025/how-to-foia-chatgpt-logs-government-public-records/

* https://mastodon.social/@ink@merveilles.town/115253543686563040

#AIIsGoingGreat "When we spoke to executives, they would often say the internal tool was very successful … But when we spoke to employees, we found zero usage"

https://www.ft.com/content/e93e56df-dd9b-40c1-b77a-dba1ca01e473

#AIIsGoingGreat Newsguard illustrates yet another case where #LLM chatbots a terrible substitute for search engines: "…the chatbots were prone to repeating false claims about Moldova due to the intensity of Russian propaganda campaigns, as well as the lack of English-language data in smaller Eastern European political markets"

https://www.newsguardrealitycheck.com/p/new-kremlin-linked-influence-campaign

New Kremlin-Linked Influence Campaign Targeting Moldovan Elections Draws 17 Million Views on X and Infects AI Models

As Moldova prepares for Sunday’s elections that will decide if it continues its European trajectory, or pivots back to Russia, the Storm-1516 Russian disinformation operation generates huge traffic

Today's #AIIsGoingGreat (HT @ai6yr*) highlights the perils of using a stochastic BS machine for vacation planning. In addition to making up non-existent destinations, it will also happily provide you with nonsense directions to reach them

https://www.bbc.com/travel/article/20250926-the-perils-of-letting-ai-plan-your-next-trip

Meanwhile @therecord_media provides a sneak peek at coming #AIIsGoingGreat attractions, featuring startups Tranquility, Truleo and Allometric as they aggressively pitch police and prosecutors on using stochastic BS machines to sift through and summarize evidence. What could possibly go wrong?!

(also, what are the odds at least one of them is shoveling all that evidence on to an improperly secured S3 bucket? Better than the lottery, I'd wager!)

https://therecord.media/law-enforcement-ai-platforms-synthesize-evidence-criminal-cases

"we are looking for videos of both real and staged events, to help train the Al what to be on the lookout for" - First thought was "what could possibly go wrong with training theft detection AI on staged videos?" but this is probably a rational response to someone realizing that paying would inevitably lead to staged videos anyway. Not that it makes the whole concept any less creepy or suspect…

JFC, it's not like there's any good case to go #ChatGPTJudge on, but this seems like a particularly poor one "The letter stems from an error-laden temporary restraining order Wingate issued July 20, which paused the enforcement of a state law that bans [DEI] in public schools"

errors "included naming defendants and plaintiffs that weren’t parties to the case, misquoting state law and referencing a case that doesn’t exist"

US Senate chairman asks federal judge in Mississippi to explain possible AI usage - Mississippi Today

A U.S. senator is asking about an error-laden temporary restraining order that U.S. District Judge Henry T. Wingate issued July 20. The order paused the enforcement of a state law that bans diversity, equity and inclusion programs in public schools.

https://arstechnica.com/ai/2025/10/openai-wants-to-make-chatgpt-into-a-universal-app-frontend/?utm_brand=arstechnica&utm_social-type=owned&utm_source=mastodon&utm_medium=social

Today's #AIIsGoingGreat is… actually unsarcastically going pretty great 🤯

Hard to see how anything could possibly go wrong here "the industry is spending over $30 billion a month (approximately $400 billion for 2025) and only receiving a bit more than a billion a month back in revenue"

An AI Addendum

Last month, I chose to strip away all the hubris around AI and ask one simple question, one that oddly no one had really bothered to ask; how much revenue is needed to justify the current level of capex spend and give AI investors a return on their capital?? I clearly hit a nerve in […]

Today's #ChatGPTLawyer (via @404mediaco*) ticks all the boxes:

✅ Files slop motion citing non-existent cases

✅ Denies using AI in slop-filled motion opposing sanctions for original slop

✅ Blames unnamed "staff"

✅ Eventually admits using AI and unconvincingly feigns remorse in sanctions hearing

✅ Gets sanctioned

Dave Karpf's #AIIsGoingGreat take "But I’ll say this: the AI bubble isn’t predominantly giving off Pets.com or Global Crossing vibes anymore. It’s giving Enron vibes."

Excellent deep dive into who goes #ChatGPTLawer by @riana

https://cyberlaw.stanford.edu/blog/2025/10/whos-submitting-ai-tainted-filings-in-court/

Who’s Submitting AI-Tainted Filings in Court?

It seems like every day brings another news story about a lawyer caught unwittingly submitting a court filing that cites nonexistent cases hallucinated by AI. The problem persists despite courts’ standing orders on the use of AI, formal opinions and continuing legal education (CLE) courses on ethical use of AI

#AIIsGoingGreat "In a preview of its 2025 report on the impact of the tech on research, the academic publisher Wiley released preliminary findings on attitudes toward AI. One startling takeaway: the report found that scientists expressed less trust in AI than they did in 2024"

(I suspect that like me, many readers of this thread will not be particularly startled by that)

https://futurism.com/artificial-intelligence/ai-research-scientists-hype

RE: https://mastodon.social/@lawfare/115390271811742445

Pass the bong, @lawfare https://mastodon.social/

@lawfare/115390271811742445

* 45% of all AI answers had at least one significant issue.

* 31% of responses showed serious sourcing problems – missing, misleading, or incorrect attributions.

* 20% contained major accuracy issues, including hallucinated details and outdated information.

Kicker: Separate study found "just over a third of UK adults saying that they trust AI to produce accurate summaries, rising to almost half for people under-35"

https://www.bbc.co.uk/mediacentre/2025/new-ebu-research-ai-assistants-news-content

They note "Comparison between the BBC’s results earlier this year and this study show some improvements but still high levels of errors" but don't address the question of whether the industry has any idea of how to solve the underlying problem

(spoiler: they don't)

https://www.bbc.co.uk/mediacentre/2025/new-ebu-research-ai-assistants-news-content

#AIIsGoingGreat "A US teenager was handcuffed by armed police after an [AI] system mistakenly said he was carrying a gun - when really he was holding a packet of crisps… AI alert was sent to human reviewers who found no threat - but the principal missed this"

Tossup whether this belongs here or in the "cops being abusive shitbags" thread*, but it does highlight how the "sure AI fails but just have a human check" line is mostly CYA for vendors

#AIIsGoingGreat, supplemental: "Google’s controversial new AI Mode has falsely named an innocent Sydney Morning Herald graphic designer as the man who confessed to abducting and murdering three-year-old Cheryl Grimmer more than 50 years ago … appears to have latched onto the designer’s name instead, given he was credited for an illustration " - Perfect illustration of how #LLM "AI" fills in the blanks with statistically plausible BS

Who could have predicted that if you present a statistical text completion machine with a scenario that mirrors a trope frequently found in the training set, it may produce output which follows the trope. SKYNET!!!!

https://www.wired.com/story/chatbots-are-pushing-sanctioned-russian-propaganda/

"Patrick Gelsinger took the reins at Gloo, a technology company made for what he calls the “faith ecosystem” – think Salesforce for churches, plus chatbots and AI assistants for automating pastoral work and ministry support"

Uh… "Lu recommends that leaders start by steering workers toward tasks that AI clearly handles better than humans and where personalization is unnecessary, such as numeric estimation and forecasting tasks" - numeric estimation tasks more or less demanding than estimating the number times "r" appears in strawberry? 🤔

https://www.businessinsider.com/inside-ai-divide-roiling-video-game-giant-electronic-arts-2025-10

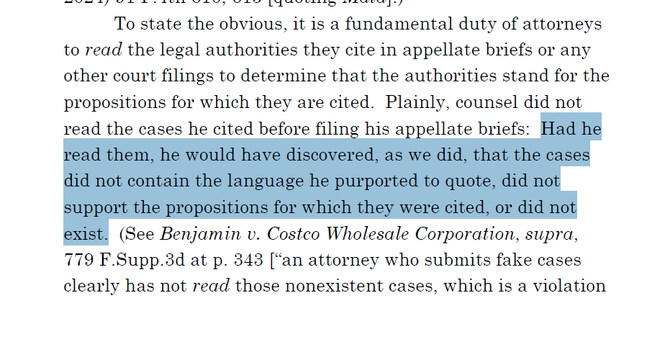

Good rebuttal to the "but humans make mistakes too" or "just treat it like an intern" excuses for LLM failings: "A lawyer reviewing a first-year associate’s work likely expects some errors flowing from inadequate research or an incomplete understanding of the law. They do not suspect straight-up fictitious content"

Deceptive Dynamics of Generative AI: Beyond the “First-Year Associate” Framing - Slaw

Guidance for lawyers on generative AI use consistently urges careful verification of outputs. One popular framing advises treating AI as a “first-year associate”—smart and keen, but inexperienced and needing supervision. In this column, I take the position that, while this framing helpfully encourages caution, it obscures how generative AI can be deceptive in ways that […]

"there is also the lesser-known prospect of [subtler than fake citation] hallucinations: a date altered here, part of a legal test changed there. These more subtle hallucinations are harder to detect and mean that where accuracy is paramount, extreme caution and rigourous verification is warranted when relying on AI outputs. In some situations, the vetting burden may, in fact, outweigh any efficiency gains" 💯

Deceptive Dynamics of Generative AI: Beyond the “First-Year Associate” Framing - Slaw

Guidance for lawyers on generative AI use consistently urges careful verification of outputs. One popular framing advises treating AI as a “first-year associate”—smart and keen, but inexperienced and needing supervision. In this column, I take the position that, while this framing helpfully encourages caution, it obscures how generative AI can be deceptive in ways that […]

"[CFO] Sarah Friar has told some associates the company is aiming for a 2027 listing … But some advisers predict it could come even sooner, around late 2026 … A successful offering would mark a major win for investors such as SoftBank, Thrive Capital and Abu Dhabi's MGX. Microsoft, one of its biggest backers, now owns about 27% of the company after investing $13 billion" - Sure, they're building god, but "IPO before the bottom drops out" is a nice backup plan

#AIIsGoingGreat "As the deepfake gathered views on X, some users asked the platform’s AI chatbot Grok whether it was authentic. In at least two replies seen by BBC Verify, which have now been deleted, Grok wrongly claimed the video was genuine"

(I remain gobsmacked by the number of people who ask a chatbot to verify purported current events. Even if you're an LLM optimist, this seems like a task they are spectacularly unsuited for)

https://www.bbc.com/news/live/c4gjv2xdl5dt?post=asset%3A884ecf7b-139a-4033-a61b-73fc82891a49#post

"These centres will cost $2.5tn to build, according to Barclays, to service an industry that still doesn’t turn a profit. But the maddest bit arguably is how much energy they will require once completed. Using Barclays’ 1.2 “Power Use Effectiveness” ratio, all these data centres — if they are all completed — would need 55.2 gigawatts of electricity to function at full capacity"

https://www.ft.com/content/2b849dbd-1bef-4c26-aa11-2cb86750d41e

Via that FT article "Beyond sheer density, AI workloads introduce a second, equally formidable challenge: volatility. Unlike a traditional data center running thousands of uncorrelated tasks, an AI factory operates as a single, synchronous system … This creates a facility-wide power profile characterized by massive and rapid load swings … The power draw of a rack can swing from an “idle” state of around 30% to 100% utilization and back again in milliseconds"

Hard to see how torching a few trillion dollars on the altar of FOMO could possibly go wrong #AIIsGoingGreat

https://www.theverge.com/ai-artificial-intelligence/812455/ai-industry-earnings-bubble-fomo-hype