I'm begging you. Please tell me what else to do.

I run copy-paste-quality pfsense with a single personal domain.

I just want to access my home computers (self-hosted music) remotely via DNS, from corporate networks that block Comcast.

IDK if you call having a domain name, "renting", but I guess I can't deny that is ICANN's business model

@dnavinci I've had good luck using yggdrasil (https://yggdrasil-network.github.io/) rather than DNS to access media remotely. it does require the client machine to also be on yggdrasil, but that's doable even without root if you can run your own software or apps

DNS is renting because you have to keep paying for domains or you lose them, just like renting anything else. the "buying" terminology is just marketing

Appreciate the recommendation. I think I'm not ready for alpha grade software for my home network.

I haven't even built my own Gentoo :P

@dnavinci @migratory One edge server running Wireguard & nginx. One LAN computer running same, connecting via Wireguard to edge server. DNS entries that resolve publicly to edge IP, resolve on LAN to internal IP.

Configuration and automation ensues; not too intense.
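
For anyone wondering what "resolve publicly to edge IP, resolve on LAN to internal IP" amounts to, here is a rough Python sketch of the split-horizon decision. Everything in it is made up for illustration (the hostname, the LAN range, both addresses); in a real setup the LAN resolver and the public DNS zone would each hold their own record rather than a script picking one.

```python
import ipaddress

# Hypothetical values, for illustration only.
LAN = ipaddress.ip_network("192.168.1.0/24")
RECORDS = {
    "music.example.com": {
        "lan": "192.168.1.10",    # internal address of the LAN computer
        "public": "203.0.113.7",  # address of the edge server (documentation range)
    },
}


def resolve(name: str, client_ip: str) -> str:
    """Return the address a split-horizon setup would give this client."""
    views = RECORDS[name]
    if ipaddress.ip_address(client_ip) in LAN:
        return views["lan"]    # LAN clients go straight to the internal host
    return views["public"]     # everyone else is pointed at the edge server
```

A client at 192.168.1.55 gets the internal address, while a client anywhere else gets the edge address and reaches the service through the edge server's nginx and the Wireguard link.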

Thank you. Thank you!

Yes, after some research I guess I just need any old endpoint under MY control to be the reverse proxy.

Some suggested that Oracle (evil as they are) have a free tier which is relatively ideal.

@migratory

@dnavinci @migratory Sorry if I made it sound trivial, I was trying to quickly regurgitate my approach 😅 But depending on what you host there are some docker-based workflows that simplify some parts e.g. certificates. My Wireguard and nginx setup was mostly hand-built though, and it wasn't too complicated to configure those. There are a few moving parts, but I didn't find it too overwhelming.

I'm in the middle of switching to a Pangolin-based approach. I had previously only allowed clients on the Wireguard network into the services, by only accepting IPs from Wireguard, but to allow for sharing content with users outside my home I think Pangolin will give more flexibility. This mostly affects the edge server configuration; not much has to change internally.

If you do go with a certbot approach to certificates, I'd recommend using DNS validation from the start, as I think it offers more flexibility for the rest of the setup.

@migratory

Segmentation is a feature.

I'd like to minimize my attack surface. My read of yggdrasil seems like it would be fairly exposed.

You've opened my mind to the possibilities. I was looking at l3 switches and thinking about yggdrasil and thinking, "who even needs a router?!"

But... Just not ready to free my mind. Also my spouse and kid aren't either :P

@nickspacek

Thanks for turning me on to pangolin. That will make this really easy!

By DNS validation you mean DCV? Not something I've given much thought. I assume this is easy in pangolin?

I've been waffling between what you said and just tailscale.

My threat model doesn't really include the NSA.

Mostly I want to host my music server, but also give my friends my minecraft.myhostname.com for when we play.

@migratory

@dnavinci @migratory Most (all?) of my current use cases are HTTP-based. Pangolin supports TCP proxying too but I have no experience so I can't speak to it.

By DNS validation I mean this: with ACME/Let's Encrypt, the two common ways to prove that you "own" the domain are the HTTP challenge, where you're asked to serve a specific file with specific contents from a web server, and the DNS challenge, where you're asked to create a TXT record with specific contents. Once the ACME server confirms one of those, it issues you the SSL certificate for the domain! Then you can serve your site over HTTPS.
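
To make the TXT record part concrete, here is a small Python sketch of how the DNS challenge value is derived, going by my reading of the ACME spec (RFC 8555). The function names are mine, and the token and account key thumbprint in the usage note are placeholders; certbot and similar clients compute all of this for you.

```python
import base64
import hashlib


def b64url(data: bytes) -> str:
    """Base64url-encode without trailing '=' padding, as ACME uses."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def dns01_record(domain: str, token: str, account_thumbprint: str):
    """Return (record name, TXT value) for an ACME DNS challenge.

    `token` comes from the ACME server; `account_thumbprint` is the
    base64url thumbprint of your ACME account key.
    """
    # Wildcard names are validated at the base domain.
    if domain.startswith("*."):
        domain = domain[2:]
    key_authorization = f"{token}.{account_thumbprint}"
    digest = hashlib.sha256(key_authorization.encode("ascii")).digest()
    return f"_acme-challenge.{domain}", b64url(digest)
```

Calling `dns01_record("example.com", "some-token", "some-thumbprint")` gives the name `_acme-challenge.example.com` and the value to publish there. Nothing has to be reachable over HTTP for this to work, which is why it suits hosts that sit behind a tunnel.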

That's not important for Minecraft servers though. In that case, Pangolin would probably do the trick (maybe a bit heavy), or Tailscale (still heavy), or Hamachi (is that still around??), or for a long while I just used a reverse SSH tunnel which is dead simple.

@dnavinci @migratory Right, I couldn't remember before but there's an app built on SSH for maintaining the reverse proxy connection called AutoSSH. I think it is in most Linux distro package managers now.

I found this post about setting it up to start automatically with systemd. Could be handy!

https://pesin.space/posts/2020-10-16-autossh-systemd/

It runs inside the LAN and connects over SSH to your jumpbox, which has some convenient DNS entry pointing at it. When it establishes the SSH connection it also opens a port on the jumpbox where packets are tunneled over the SSH connection to a target port inside your LAN, which can be on the server initiating the SSH connection, or on some other host if you want!
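
As a rough sketch of the tunnel described above, this Python helper only builds the plain `ssh` command line that opens the port on the jumpbox. The hostname, user and ports are placeholders, and AutoSSH would wrap a command like this to keep it alive.

```python
import shlex


def reverse_tunnel_command(jumpbox, remote_port, target_host, target_port,
                           user="tunnel"):
    """Build an ssh command that forwards jumpbox:remote_port into the LAN.

    All arguments are placeholders for illustration; `user` is a
    hypothetical account on the jumpbox.
    """
    forward = f"{remote_port}:{target_host}:{target_port}"
    return [
        "ssh",
        "-N",                               # no remote command, tunnel only
        "-o", "ExitOnForwardFailure=yes",   # fail if the port can't be opened
        "-o", "ServerAliveInterval=30",     # notice dead connections
        "-R", forward,                      # remote (reverse) port forward
        f"{user}@{jumpbox}",
    ]


if __name__ == "__main__":
    cmd = reverse_tunnel_command("jump.example.com", 25565, "localhost", 25565)
    print(shlex.join(cmd))
```

One thing to check: by default sshd binds the forwarded port to the jumpbox's loopback address only, so the `GatewayPorts` setting in the jumpbox's sshd_config probably needs changing before friends can reach it from outside.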

Thanks for this part. I had just wandered my planning over to this exact gap, and clicked back here a few hours later ;)

@migratory

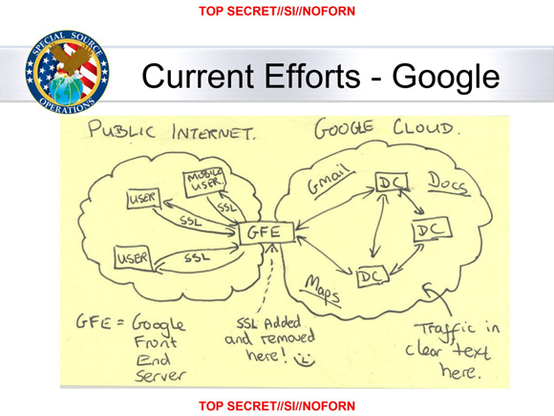

@pojntfx I think Google has since fixed the cleartext-in-the-DC issue but that alone doesn't earn them trust.

I'm old enough to remember when even the front-end of webmail services was cleartext.

@stuartl @pojntfx if you use a modern browser (especially Firefox or Chrome, but I think Safari might as well these days), the traffic is even protected against someone storing the ciphertexts, developing a quantum computer, and breaking the elliptic curves used.

I talk about the effort of making this true internally here, using that very slide 😏.

Session on PQC Protocols and Agility

i was just trying to add more forward secrecy

@stuartl @pojntfx I’m old enough to remember when TCP/IP was invented, and anyone smart enough to have deployed it could be trusted by default. And you tell these young folk nowadays, etc., etc. (stumbles off, mumbling and stroking beard).

I miss those days. The UK Internet Consortium was influential (even though nobody even remembers it). It achieved its goal, and the UK uses the same (more efficient) standards as the rest of the world.

@stuartl @pojntfx well yes, but thanks to the old guard who actually made this shit work, users don’t really need to understand the details anymore. I have only skimmed the surface myself!

I taught TCP/IP classes for approximately 20 years, and throughout that period, the uptake of IPv6 was consistently felt by the industry to be “10 years away”. 🤣

@holdenweb @pojntfx It's been reduced to magic numbers at this point.

"Look, put 255.255.255.0 in that field there and the other one must start with 192.168… don't worry about the rest!"

(ugh!)

Not much use for knowledge of things like 802.1Q in "the cloud".

@stuartl @pojntfx yes, but there’s no use bemoaning the fact that broadening the market for a technology necessarily means making it accessible to the (literally, not pejoratively) ignorant.

Back in 1995, when the Python world was a delight, I knew it would inevitably devolve into the same shit-show I’d already seen in the MS world. You either work with niche tech or welcome the world and all its warts!🤷

@pojntfx that's why this sticker exists :)

SVG: https://github.com/justjanne/stickers/blob/main/designs/ssl%20added%20and%20removed%20here.svg

Great, thanks. Saved, maybe I'll find it useful sometime.

@justjanne That sticker would perfectly fit my workplace's laptop

Am I misreading something?

Article 13 and 14. Passing data off to a third party requires that the data subject be explicitly notified about where the data is going, for what purpose, what the legal basis for the processing is, how long it's stored, how it's protected, etc.

Also it's a transfer outside of the EU, which necessitates additional scrutiny and reporting (Transfer Impact Assessment).

Article 7 requires that requests for consent must be presented in a way that's clearly different from other matters - this means that putting your GDPR language in a ToS or Privacy Policy where it's not likely to be read isn't sufficient.

Cloudflare and its customers, if they don't notify affected individuals, are very clearly in breach of GDPR, if Cloudflare really is tapping into their customers' traffic.

However, even if CF isn't tapping into their customers' traffic, they're still in breach of GDPR. As a US company, Cloudflare is subject to FISA 702 and the CLOUD Act, which give the US government power to secretly request access to data about any CF customer.

Not to mention, being part of the Data Privacy Framework doesn't absolve US companies from ensuring compliance with GDPR. DPF only means that transfers to certain companies don't require a transfer impact assessment - it doesn't reduce any other obligations.

Yeah, of course. That's why I don't use US-based services if I can avoid it. The American government has been very clear that it's hostile to both their own citizens, and even more hostile to foreigners.

You're thinking about the cookies.

That's a different thing entirely - cookies are for tracking, not internal processing of that data.

IP addresses are personal data under GDPR, as they can be used to identify the person who is accessing the service.

Even if a business chose to not collect personal data, if they host their services on AWS or other US-based hosting providers, those providers still get that information.

"Not collecting personal data" is something you have to proactively do and enforce across your entire business, including business partners and vendors.

@sashin @pojntfx The padlock in your browser promising your data is securely protected ends at Google's servers, and is only restored on the other side, exposing your data to Google. The slide Snowden posted is from an NSA presentation where the author drew a smiley face at that point, because it gave the NSA full access to the data flowing through Google's servers.

Cloudflare has the same vulnerability. And the NSA's capabilities have only improved since the slide was released 12 years ago.

@sashin @pojntfx That Cloudflare (and everyone that manages to snoop on them) can read, store, and modify any requests and content that is transmitted.

Basically, the promise of TLS is that everything you do on a website is unreadable by anyone except the web browser and the provider of the website. Cloudflare inserts itself into the transaction and breaks that promise.

@pojntfx Yes, end-to-end encryption is all very well as long as the other end isn’t everyone in a corporation that decided being evil was OK, actually.

But as a technologist I’m firmly of the opinion that knowing this stuff should not be a precondition of safe internet communications. Ethics and technical standards should (but currently don’t) keep us all safe.