I've started working on a #Vulkan renderer for #WorldFabric. None of the demos I've looked at are arranged anything like how I need, and I struggle to get each example to even run, so it's going to be a minute. On the upside, I know exactly how I want my pipeline to work, and it's pretty in line with how Vulkan is designed, so now it's just a matter of fighting with the syntax and compiler until I've abstracted away all the annoying parts.

I managed to get a #Vulkan window to compile and open in my existing game engine project. It just changes color. It's not a lot, but linker errors are the worst, and I'm happy to be on the other side of some of them. Pretty soon I may even have a triangle.

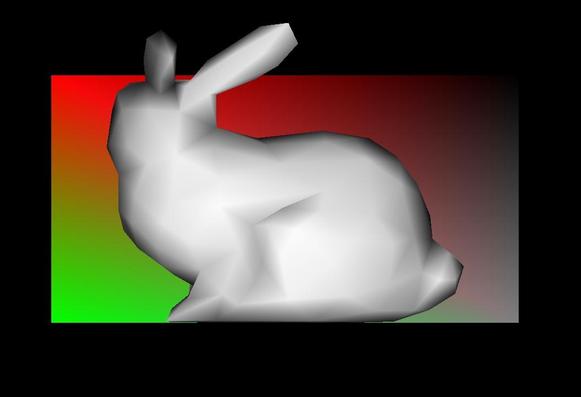

Progress on the #Vulkan renderer. Got my homebrew glTF model loader pushing vertex data into a simple triangle pipeline. Data structures and matrices are a mess of cobbled-together tutorial bits, but now that I've got "something" on screen I can start cleaning up the code. At least I'll be able to tell when things break.
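glTF stores each vertex attribute (position, normal, UV) in its own accessor, so a loader often interleaves them into one vertex struct before uploading a single vertex buffer. A minimal sketch, assuming the loader has already decoded each accessor into a flat float array; `Vertex` and `interleave` are hypothetical names, not the engine's actual types.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical interleaved vertex layout: 3 + 3 + 2 floats = 32 bytes, no padding.
struct Vertex {
    std::array<float, 3> position;
    std::array<float, 3> normal;
    std::array<float, 2> uv;
};
static_assert(sizeof(Vertex) == 32, "Vertex must be tightly packed");

// Interleave separate attribute streams (as decoded from glTF accessors)
// into one array of Vertex structs for a single vertex buffer.
std::vector<Vertex> interleave(const std::vector<float>& positions,  // 3 floats per vertex
                               const std::vector<float>& normals,    // 3 floats per vertex
                               const std::vector<float>& uvs) {      // 2 floats per vertex
    const std::size_t count = positions.size() / 3;
    assert(normals.size() == count * 3 && uvs.size() == count * 2);

    std::vector<Vertex> vertices(count);
    for (std::size_t i = 0; i < count; ++i) {
        vertices[i].position = {positions[3 * i], positions[3 * i + 1], positions[3 * i + 2]};
        vertices[i].normal   = {normals[3 * i], normals[3 * i + 1], normals[3 * i + 2]};
        vertices[i].uv       = {uvs[2 * i], uvs[2 * i + 1]};
    }
    return vertices;
}
```

On the Vulkan side, the matching vertex input attribute offsets would be `offsetof(Vertex, position)`, `offsetof(Vertex, normal)`, and `offsetof(Vertex, uv)` (0, 12, and 24) with a binding stride of `sizeof(Vertex)`.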

More #Vulkan progress. Fixed up the matrices, so I can use glm::lookAt for the camera and handle matrices the way my engine already does. Spent much of the time confused by the depth test because the tutorial code was set to GREATER_OR_EQUAL when I always use LESS_OR_EQUAL. Good example of what I was talking about with making code things "what they are". In terms of code it doesn't really matter, but intuitively, lower depth means closer, so that's how I always set it up. It's also conveniently the convention glm's perspective projection follows.
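With Vulkan's [0, 1] depth range, a right-handed perspective projection (the convention glm uses when `GLM_FORCE_DEPTH_ZERO_TO_ONE` is defined) maps the near plane to 0 and the far plane to 1, which is why LESS_OR_EQUAL reads as "closer wins". A minimal sketch of that mapping and the matching depth test, written without glm so it builds standalone; `projectDepth` and `passesLessOrEqual` are hypothetical helpers.

```cpp
#include <cassert>
#include <cmath>

// Depth after the perspective divide for a right-handed view space (camera looks down -Z),
// using the same terms as a zero-to-one perspective matrix:
//   clip.z = f / (n - f) * zView + f * n / (n - f)
//   clip.w = -zView
float projectDepth(float zView, float n, float f) {
    float clipZ = f / (n - f) * zView + f * n / (n - f);
    float clipW = -zView;
    return clipZ / clipW;
}

// VK_COMPARE_OP_LESS_OR_EQUAL semantics: the incoming fragment passes if its depth is
// <= the value already stored. With this convention the depth buffer is cleared to 1.0.
bool passesLessOrEqual(float incoming, float stored) {
    return incoming <= stored;
}
```

The tutorial's GREATER_OR_EQUAL setup is the "reverse-Z" convention (near maps to 1, clear to 0.0), which is often chosen because it spreads floating-point depth precision more evenly; either works as long as the projection, clear value, and compare op agree.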

I got vkCmdDrawIndexedIndirect working to draw a bunch of instances with one draw call in #Vulkan. I can get the id of the current instance in the shader with gl_InstanceIndex, so I'm thinking I'll make a struct for instance data and then pack those into a big buffer that I can read as an array in the shader. I haven't figured out how I want to abstract descriptor sets yet, so I've got a bunch of hardcoded buffers which I don't like.

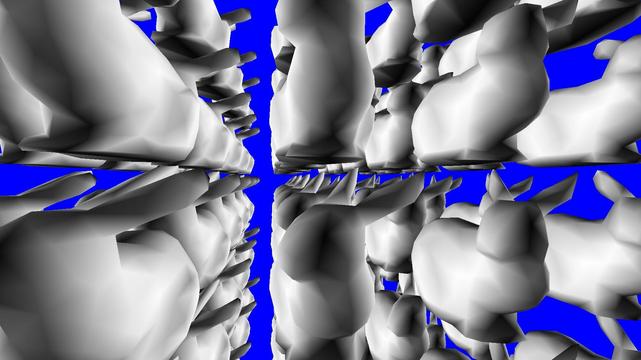

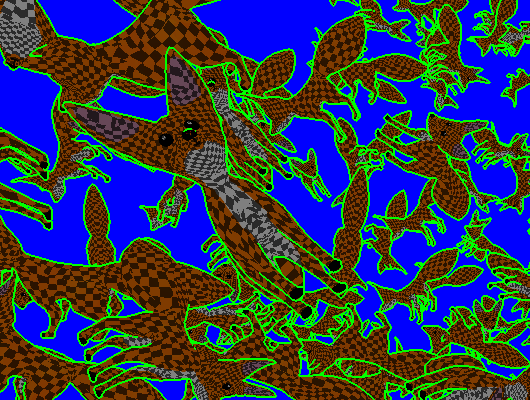
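A minimal sketch of the CPU side of that plan, assuming one big instance buffer shared by several meshes. `DrawIndexedIndirectCommand` below mirrors the field order and types of Vulkan's `VkDrawIndexedIndirectCommand` so the sketch builds without the Vulkan headers; `InstanceData`, `MeshRange`, and `buildDraws` are hypothetical. Giving each mesh its own `firstInstance` works because in Vulkan GLSL `gl_InstanceIndex` already includes the base instance, so the shader can index the instance array with it directly.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Same field order and types as VkDrawIndexedIndirectCommand (20 bytes).
struct DrawIndexedIndirectCommand {
    uint32_t indexCount;
    uint32_t instanceCount;
    uint32_t firstIndex;
    int32_t  vertexOffset;
    uint32_t firstInstance;
};
static_assert(sizeof(DrawIndexedIndirectCommand) == 20, "must match Vulkan's layout");

// Hypothetical per-instance record, read in the shader as instances[gl_InstanceIndex].
// A column-major mat4 plus a vec4 keeps every member 16-byte aligned under std430.
struct InstanceData {
    float model[16];
    float color[4];
};
static_assert(sizeof(InstanceData) == 80, "no hidden padding");

// Hypothetical description of where one mesh lives in the shared index/vertex buffers.
struct MeshRange {
    uint32_t indexCount;
    uint32_t firstIndex;
    int32_t  vertexOffset;
};

// One command per mesh; each mesh's instances occupy a contiguous slice of the
// instance buffer starting at firstInstance.
std::vector<DrawIndexedIndirectCommand> buildDraws(const std::vector<MeshRange>& meshes,
                                                   const std::vector<uint32_t>& instanceCounts) {
    assert(meshes.size() == instanceCounts.size());
    std::vector<DrawIndexedIndirectCommand> draws;
    uint32_t nextInstance = 0;
    for (std::size_t i = 0; i < meshes.size(); ++i) {
        draws.push_back({meshes[i].indexCount, instanceCounts[i], meshes[i].firstIndex,
                         meshes[i].vertexOffset, nextInstance});
        nextInstance += instanceCounts[i];
    }
    return draws;
}
```

The resulting array would go into a buffer created with `VK_BUFFER_USAGE_INDIRECT_BUFFER_BIT` and be passed to `vkCmdDrawIndexedIndirect` with `stride = sizeof(DrawIndexedIndirectCommand)`. A nonzero `firstInstance` requires the `drawIndirectFirstInstance` device feature, and a `drawCount` above 1 requires `multiDrawIndirect`.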

So how many of those rabbit instances can I draw using #Vulkan DrawIndexedIndirect? On my 4070TI Super, about a million is where it starts slowing down. Welcome to the rabbit dimension.

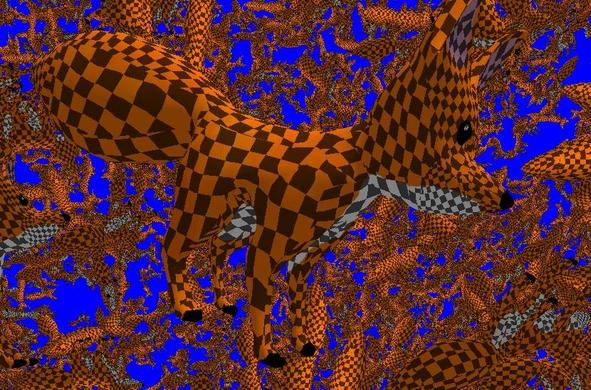

I've got textures in #Vulkan. Well, one texture. I still don't really "get" descriptor sets. I've got to make a pool and then allocate a descriptor set from it to get a handle before I'm allowed to modify a buffer? It's a pain I need to abstract away, but I haven't quite figured out how.

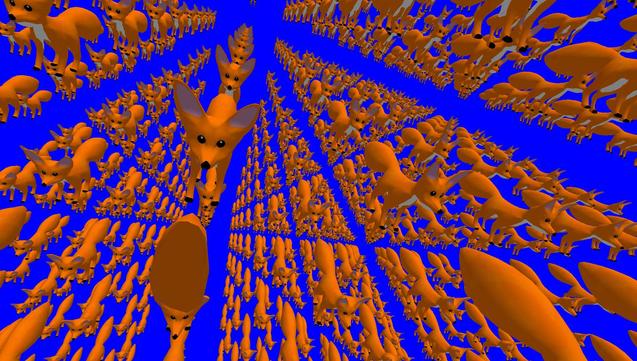

I created a templated buffer push function that lets me push std::vectors of arbitrary structs to the GPU with #Vulkan. Byte alignment is dangerous and requires care, but it's great when it works. I used it to make a buffer for instance-specific data, so now I have proper GPU-level instancing. Do a barrel roll!
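A minimal sketch of a templated upload, assuming the destination is a host-visible, already-mapped allocation (modeled here as a byte vector standing in for the pointer `vkMapMemory` returns). `pushVector` and `Particle` are hypothetical names. The `static_assert`s on `Particle` catch the usual alignment trap: under std430 a `vec3` is 16-byte aligned, so two consecutive `vec3` members sit at offsets 0 and 16 in the shader, while two bare `float[3]` members in C++ would sit at 0 and 12.

```cpp
#include <cassert>
#include <cstddef>
#include <cstring>
#include <type_traits>
#include <vector>

// Copy a std::vector<T> into mapped GPU memory (modeled as a byte vector) at `offset`.
// Returns the number of bytes written.
template <typename T>
std::size_t pushVector(const std::vector<T>& src, std::vector<std::byte>& mapped,
                       std::size_t offset = 0) {
    static_assert(std::is_trivially_copyable_v<T>, "T must be safe to memcpy");
    const std::size_t bytes = src.size() * sizeof(T);
    assert(offset % alignof(T) == 0 && offset + bytes <= mapped.size());
    std::memcpy(mapped.data() + offset, src.data(), bytes);
    return bytes;
}

// Hypothetical record matching the GLSL std430 struct
//   struct Particle { vec3 position; vec3 velocity; };
// Each vec3 is 16-byte aligned there, so velocity starts at byte 16, not 12.
struct Particle {
    alignas(16) float position[3];
    alignas(16) float velocity[3];
};
static_assert(offsetof(Particle, velocity) == 16, "must match the shader's member offset");
static_assert(sizeof(Particle) == 32, "array stride must match std430");
```

Dropping either `alignas` makes the `offsetof` or `sizeof` check fail at compile time, which is much cheaper to debug than garbage data on the GPU.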

I've been working on abstracting my #Vulkan render pipeline setup, and I think I "get" descriptor sets now. They're just binding configurations you can stash on the GPU. Configure a bunch of textures and samplers, save it on the GPU, and you get back a reference to it. That's a descriptor set, and you can pass it back when you want to quickly load that set of bindings. Not much visually to show today, but I can now easily load shaders that read and write multiple images.
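That mental model maps onto a small cache: describe a binding configuration once, get back a handle, and reuse the handle whenever the same configuration comes up again. A minimal sketch, assuming hypothetical `Binding`/`DescriptorCache` types and integer handles standing in for `VkDescriptorSet`; in real Vulkan the "allocate" step would be `vkAllocateDescriptorSets` from a pool, followed by `vkUpdateDescriptorSets` to write the bindings, with `vkCmdBindDescriptorSets` at draw time.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <map>
#include <string>
#include <tuple>
#include <vector>

// One entry in a binding configuration: which slot, and which resource goes there.
struct Binding {
    uint32_t slot;
    std::string resource;  // hypothetical name in a resource registry (texture, buffer, ...)
    bool operator<(const Binding& o) const {
        return std::tie(slot, resource) < std::tie(o.slot, o.resource);
    }
};

using DescriptorHandle = uint32_t;  // stand-in for VkDescriptorSet

class DescriptorCache {
public:
    // Return the handle for this configuration, "allocating" a new one only the first time.
    DescriptorHandle get(const std::vector<Binding>& bindings) {
        auto it = sets_.find(bindings);
        if (it != sets_.end()) return it->second;
        DescriptorHandle handle = nextHandle_++;  // real code: allocate from a pool, then write bindings
        sets_.emplace(bindings, handle);
        return handle;
    }
    std::size_t allocated() const { return sets_.size(); }

private:
    std::map<std::vector<Binding>, DescriptorHandle> sets_;
    DescriptorHandle nextHandle_ = 1;
};
```

Keying on the full binding list means two passes that read the same images share one set, while any change in slot or resource produces a new one.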

Made a lot of progress in #Vulkan today. I can now run a triangle pipeline, have it output positions, normals, and colors to separate images, and then read those back in a compute shader that composites the final image. I made a simple outline post-processor, but this unlocks much cooler deferred rendering and screen-space effects I'll get to eventually.
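One common way to get outlines from a G-buffer (not necessarily the method used here) is to flag pixels whose normal differs sharply from a neighbor's. A CPU sketch of what each compute-shader invocation would do for its pixel, assuming a hypothetical `NormalImage` holding unit normals and an illustrative dot-product threshold.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <vector>

using Normal = std::array<float, 3>;

// Hypothetical stand-in for the normals attachment: row-major, width * height texels.
struct NormalImage {
    std::size_t width, height;
    std::vector<Normal> texels;
    const Normal& at(std::size_t x, std::size_t y) const { return texels[y * width + x]; }
};

float dot(const Normal& a, const Normal& b) { return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]; }

// One "invocation" per (x, y): compare against the right and lower neighbors and mark
// an edge when the normals diverge (dot product below minDot).
std::vector<bool> outlineMask(const NormalImage& img, float minDot = 0.8f) {
    std::vector<bool> mask(img.width * img.height, false);
    for (std::size_t y = 0; y < img.height; ++y) {
        for (std::size_t x = 0; x < img.width; ++x) {
            const Normal& n = img.at(x, y);
            bool edge = false;
            if (x + 1 < img.width && dot(n, img.at(x + 1, y)) < minDot) edge = true;
            if (y + 1 < img.height && dot(n, img.at(x, y + 1)) < minDot) edge = true;
            mask[y * img.width + x] = edge;
        }
    }
    return mask;
}
```

Each invocation only reads neighbors and writes its own texel, so the per-pixel loop body ports to a compute shader without synchronization concerns.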

Abstracted out the concept of a render model in #Vulkan over the weekend. With most of the hard-coded buffer stuff cleaned up, I can now easily load different models and run them through the pipeline together. I'm getting closer to a usable render engine, but there are still a few critical elements missing. #graphicsprogramming

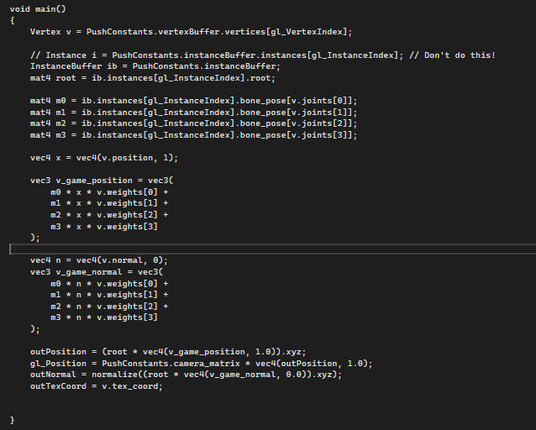

I figured it out and it got 10x faster! If you fetch data above a certain size in a vertex #shader, it thrashes the cache and gets really slow. I was inadvertently fetching ALL of the bone matrices on every vertex because I assigned the instance struct to a named local variable. That's a copy, not a reference, in #GLSL. Pulling only the bones I need for skinning directly out of the buffer fixes it. Obvious now that I see it, but as mysterious as foretold when performance falls off a cliff once the bone count hits 50. #graphicsprogramming #debugging
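In GLSL, a line like `Instance inst = instances[gl_InstanceIndex];` is a value copy of the whole struct, bone array included. The fix, sketched here in C++ because the indexing is the same: read only the four bones a vertex references, straight from a flat bone buffer. The layout (a per-instance `boneOffset`, four joints and weights per vertex, a column-major `Mat4` alias) is a hypothetical assumption for illustration, and linear blend skinning is assumed.

```cpp
#include <array>
#include <cassert>
#include <cstdint>
#include <vector>

using Vec4 = std::array<float, 4>;
using Mat4 = std::array<float, 16>;  // column-major, like GLSL mat4

// Matrix-vector product: element (row, col) lives at m[col * 4 + row].
Vec4 mul(const Mat4& m, const Vec4& v) {
    Vec4 r{};
    for (int row = 0; row < 4; ++row)
        for (int col = 0; col < 4; ++col)
            r[row] += m[col * 4 + row] * v[col];
    return r;
}

// Linear blend skinning that touches only the 4 bones this vertex uses.
// boneBuffer holds every instance's bones back to back; boneOffset is where this
// instance's bones start (hypothetical per-instance field).
Vec4 skin(const std::vector<Mat4>& boneBuffer, uint32_t boneOffset,
          const std::array<uint32_t, 4>& joints, const std::array<float, 4>& weights,
          const Vec4& position) {
    Vec4 result{};
    for (int i = 0; i < 4; ++i) {
        const Mat4& bone = boneBuffer[boneOffset + joints[i]];  // direct fetch, no array copy
        Vec4 p = mul(bone, position);
        for (int k = 0; k < 4; ++k) result[k] += weights[i] * p[k];
    }
    return result;
}
```

Per vertex this reads 4 × 64 = 256 bytes of matrices, versus 50 × 64 = 3,200 bytes if a 50-bone array is copied wholesale.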

When using Vulkan with OpenXR, the Y axis in the matrices is flipped relative to OpenGL (most likely because Vulkan's clip-space Y axis points down, where OpenGL's points up). Not a big deal; I can wrap it in some scale matrices to flip it back. I had done that for the head movement, but not for the eye matrices until just now. I honestly don't know why it worked fine on the Quest 2. I guess if the eyes are vertically aligned with the reported center of the head it wouldn't matter? It would have been really easy to ship with that bug if I only had a Quest 2 to test with.

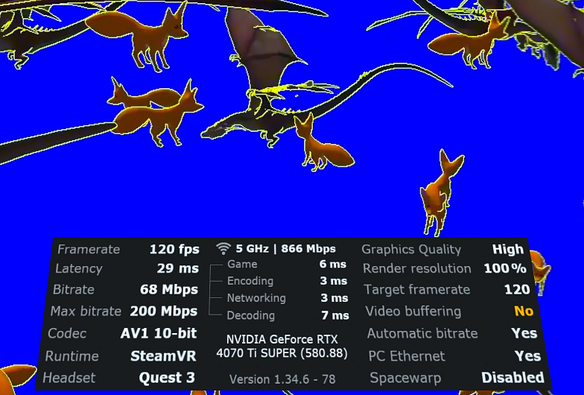
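The flip itself is a single scale matrix, diag(1, −1, 1, 1), and "wrapping" a transform means conjugating it: S · M · S. A minimal sketch with column-major 4×4 matrices (the same layout glm uses), written without glm so it builds standalone; `Mat4`, `mul`, `kFlipY`, and `wrapFlipY` are hypothetical helpers. The lesson from the bug above is that every matrix on the path to the screen needs the same treatment, the eye matrices as well as the head pose.

```cpp
#include <array>
#include <cassert>

using Mat4 = std::array<float, 16>;  // column-major: element (row, col) at [col * 4 + row]

// Standard matrix product r = a * b.
Mat4 mul(const Mat4& a, const Mat4& b) {
    Mat4 r{};
    for (int col = 0; col < 4; ++col)
        for (int row = 0; row < 4; ++row)
            for (int k = 0; k < 4; ++k)
                r[col * 4 + row] += a[k * 4 + row] * b[col * 4 + k];
    return r;
}

// Scale matrix diag(1, -1, 1, 1).
const Mat4 kFlipY{1, 0, 0, 0,  0, -1, 0, 0,  0, 0, 1, 0,  0, 0, 0, 1};

// Express a transform in the Y-flipped convention: S * M * S.
// S is its own inverse, so wrapping twice returns the original matrix.
Mat4 wrapFlipY(const Mat4& m) { return mul(mul(kFlipY, m), kFlipY); }
```

For a pure translation the effect is easy to see: the Y component of the offset changes sign while X and Z are untouched, which is exactly the kind of error that stays invisible when the eye offsets have no vertical component.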

It's very smooth on the Quest 3. I don't feel a big difference between 90fps and 120fps, but I do feel the latency. Pushing my VR streaming latency down from 50 ms to 30 ms really helps the feel, and turning off video buffering helps a lot. Buffering is on by default in Virtual Desktop to reduce stutter, but #WorldFabric is designed for VR: I compute scene changes first, then wait for sync to get the latest headset pose and immediately begin rendering. Faster response to head movement also reduces motion sickness.

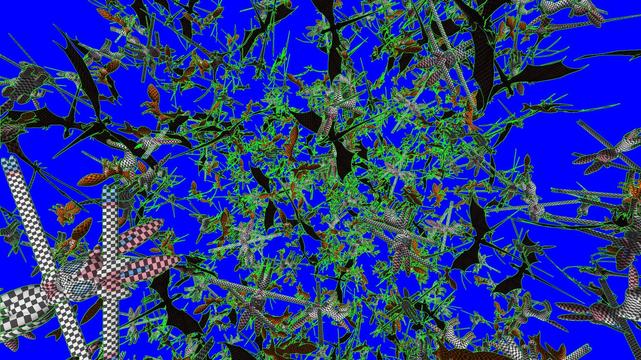
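The ordering matters because any time between reading the pose and starting to render adds directly to how stale the image is. A toy timeline of one frame, assuming a hypothetical `simulateMs` cost for the scene update; in a real OpenXR loop the "sync" would be `xrWaitFrame`/`xrBeginFrame`, the pose would come from `xrLocateViews`, and the frame would be submitted with `xrEndFrame`, but none of that API is modeled here, nor is streaming latency.

```cpp
#include <cassert>
#include <cmath>

// Toy timeline for one frame, in milliseconds measured from the start of the frame.
struct FrameTimeline {
    double poseSampledAt;   // when the headset pose used for rendering was read
    double renderStartsAt;  // when rendering for the frame begins
    double poseAgeAtRender() const { return renderStartsAt - poseSampledAt; }
};

// Pose read first, then the scene update runs, then rendering starts:
// the pose is already simulateMs old when rendering begins.
FrameTimeline poseThenSimulate(double simulateMs) { return {0.0, simulateMs}; }

// Scene update first, then sync and read the latest pose, then render immediately.
FrameTimeline simulateThenPose(double simulateMs) { return {simulateMs, simulateMs}; }

// Time available per frame at a given refresh rate.
double frameBudgetMs(double hz) { return 1000.0 / hz; }
```

For scale, the per-frame budget is about 11.1 ms at 90 Hz and 8.3 ms at 120 Hz, so a few milliseconds of pose age saved by reordering is a meaningful fraction of a frame.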

More #Vulkan progress. I got the particle system from Portal Foxes TD working. They're just translucent ellipsoids, but they're shaded as true 3D, so they look right in VR. Unlike in #PortalFoxesTD, they're properly GPU instanced now, and the quad poses are computed on the GPU as well, so they're very, very cheap. I can make a lot of cool effects with a few thousand of these.
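Computing quad poses on the GPU usually means generating each corner in the vertex shader from the vertex index, with the particle itself fetched via the instance index. A CPU sketch of that per-vertex math, assuming a camera-facing quad built from hypothetical `cameraRight`/`cameraUp` vectors and a per-particle `radius`; this is one common approach rather than necessarily the one used here, and the 3D shading inside the quad (for example a ray-ellipsoid intersection in the fragment shader) is not shown.

```cpp
#include <array>
#include <cassert>
#include <cstdint>

using Vec3 = std::array<float, 3>;

// Corner for a 4-vertex triangle strip: index 0..3 -> (-1,-1), (1,-1), (-1,1), (1,1).
// Bit 0 of the index picks left/right, bit 1 picks bottom/top.
Vec3 billboardCorner(uint32_t vertexIndex, const Vec3& center, float radius,
                     const Vec3& cameraRight, const Vec3& cameraUp) {
    float sx = (vertexIndex & 1u) ? 1.0f : -1.0f;
    float sy = (vertexIndex & 2u) ? 1.0f : -1.0f;
    Vec3 p{};
    for (int i = 0; i < 3; ++i)
        p[i] = center[i] + radius * (sx * cameraRight[i] + sy * cameraUp[i]);
    return p;
}
```

Because the corners come from the vertex index alone, no vertex buffer is needed for the quad; a draw of 4 vertices times N instances covers every particle.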

I merged the Vulkan plugin for #WorldFabric into the main branch. I still need to update the scene and UI plugins to use it, so it's not game-ready, but I think the Vulkan part is done. It can do everything my OpenGL plugin could do and a few new things. I'll make a devlog about it soon. That'll focus more on the abstractions and tradeoffs for the engine than on Vulkan details, so it'll be different content from this thread. Stay tuned.