I whipped up a version of the doof-and-boop patch that automates the alternating connections with a pile of flip flops and I've been grooving out to it for the last two hours 😎

I tried doing a live jam for some friends tonight where I had the drum machine patch going along with a simple synth voice on top of that which I intended to drive with the midi touch screen keyboard I made back in January, but I quickly found that to be unplayable due to latency in the system. I'm not sure exactly where as I haven't attempted to measure yet.

I figure if I move the touch screen keyboard into the synth and cut out the trip through the midi subsystem that might make the situation a little better (again, depending on where the real problem is)

Anyways, it got me thinking, I think processing input events synchronously with rendering might be a mistake, and it would be better if I could process input events on the thread where I'm generating the audio.
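
A minimal sketch of that idea, assuming a thread-safe queue sits between the UI thread and the audio thread. Every name here (`events`, `on_ui_event`, `drain_events`) is hypothetical and not taken from mollytime; it only shows the shape of "UI enqueues, audio thread applies".

```python
import queue

# Hypothetical names throughout; this is not mollytime's actual code.
events = queue.SimpleQueue()  # UI thread puts, audio thread gets


def on_ui_event(key, pressed):
    """Called on the UI thread: enqueue the event and do nothing else."""
    events.put((key, pressed))


def drain_events(held_keys):
    """Called on the audio thread before rendering each buffer."""
    while True:
        try:
            key, pressed = events.get_nowait()
        except queue.Empty:
            return held_keys
        if pressed:
            held_keys.add(key)
        else:
            held_keys.discard(key)
```

The point of the split is that the audio thread sees input at its own wake-up cadence instead of waiting on the render loop.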

I added an instruction called "boop" that implements a momentary switch, and built a small piano out of it, and also built a metronome in the patch. That is very playable, so the latency must have been something to do with having one program implement the midi controller and the other implement the synth. I think I had this same problem with timidity a while back, so maybe it's just linux not being designed for this sort of thing.

with one program being the midi controller and the synthesizer it's pretty playable, but sometimes touch events seem to get dropped or delayed. that is probably my fault though, there's a lot of quick and dirty python code in that path, though I'm not sure why it's only occasional hitches

k, I've got tracy partially hooked up to mollytime (my synthesizer), and with mollytime running stand-alone playing the drum machine patch (low-ish program complexity), the audio thread (which repeatedly evaluates the patch directly when buffering) wakes up every 3 milliseconds, and the repeated patch invocations take about 0.5 milliseconds to run, with most patch evals taking about 3 microseconds each.

almost a third of each patch invocation is asking ALSA if there are any new midi events despite there being no midi devices connected to mollytime, but that's a smidge under a microsecond of time wasted per eval, and it's not yet adding up enough for me to care so whatever

anyways, everything so far is pointing toward the problem being in the UI frontend I wrote, which is great, because that can be solved by simply writing better code probably unless the problem is like a pygame limitation or something

I switched pygame from initializing everything by default like they recommend to just initializing the two modules I actually need (display and font) and the audio thread time dropped, probably because now it's not contending with the unused pulse audio worker thread pygame spun up. The typical patch sample eval is about 1.5 microseconds now, and a full batch is now under a millisecond.
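
A quick sanity check on those timings, assuming one patch eval per output sample and the 48 kHz rate mentioned later in the thread (both are assumptions for this sketch, and the function name is made up):

```python
SAMPLE_RATE_HZ = 48_000   # assumed: the rate mentioned later in the thread
WAKE_INTERVAL_S = 0.003   # audio thread wakes roughly every 3 ms


def batch_time_ms(eval_time_us):
    """Time to render one wake-up's worth of samples, in milliseconds."""
    samples_per_wake = round(SAMPLE_RATE_HZ * WAKE_INTERVAL_S)
    return samples_per_wake * eval_time_us / 1000.0


print(batch_time_ms(3.0))  # before the pygame change
print(batch_time_ms(1.5))  # after the pygame change
```

Under those assumptions a 3 ms wake-up covers 144 samples, so 3 microsecond evals add up to a bit over 0.4 ms per batch and 1.5 microsecond evals to a bit over 0.2 ms, which lines up with the measured "about 0.5 ms" and "under a millisecond".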

tracy is a good tool for profiling C++, but the python and C support feels completely unserious :/

making building with cmake a requirement to use the python bindings and then only providing vague instructions for how to do so is an odd choice.

using cmake is a bridge too far, so I figure I'll just mimic what the bindings do, but it turns out they're a bit over engineered as they're meant to adapt C++ RAII semantics to python decorators and I don't want to pay for the overhead for that when I could just have a begin and end function call to bind instead. that probably will have to be wrapping new and delete like they do though because there is no straight forward C call for this.

The relevant C APIs are provided as a pile of macros that declare static variables that track the context information for the begin and end regions. This seems to be on the theory that you would never ever ever want to wrap these in a function for the sake of letting another language use them in a general purpose way. The inner functions they call are all prefixed with three underscores, which is basically the programmer way of saying "trespassers will be shot without hesitation or question"

also there's this cute note in the docs saying that if you use the C API it'll enable some kind of expensive validation step unless you go out of your way to disable it, which you shouldn't do but here's how 🙄

C++ RAII semantics are so universally applicable to all programs (sarcasm) that even the C++ standard library provides alternatives to scope guard objects if you don't want to use them. come on man

tragic. pygame.display.flip seems to always imply a vsync stall if you aren't using it to create an opengl context, and so solving the input events merging problem is going to be a pain in the ass. it is, however, an ass pain for another night: it is now time to "donkey kong"

EDIT: pygame.display.flip does not imply vsync! I just messed up my throttling logic :D huzzah!

ideally the ordering of those events would be represented in the patch evals but they just happen as they happen. It's plenty responsive with a mouse though. I'll have to try out the touch screen later and see if the problem is basically solved now or not.

ok I can go ape shit with a mouse and it works perfectly and like I mean double kick ape shit, but the dropped events problem still persists with the touch screen ;_;

it's possible that I'm doing something wrong with the handling of touch events and it's something i can fix still, but now i'm wondering if there's a faster way to confirm if "going ape shit" playing virtual instruments is a normal intended use case for touch screen monitors by their manufacturers and the people who wrote the linux infrastructure for them, or if they all were going for more of a "tapping apps" vibe

and by "going ape shit" i just mean gently flutter tapping two fingers rapidly to generate touch events faster than i could with just one finger, such as to trill between two notes or repeatedly play one very quickly. doing that on one mouse button to generate one note repeatedly very fast feels a lot more aggressive

if i do the same on my laptop's touch pad (which linux sees as a mouse) the same thing happens, but if I really go ham on it such that it engages a click with the literal button under the touch pad, then the events all go through just fine. this is why i'm starting to think there's some filtering happening elsewhere

i wonder if there's a normal way to ask linux to let me raw dog the touch screen event stream without any gesture stuff or other filtering, or if this sort of thing isn't allowed for security and brand integrity reasons

someone brought up a really good point, which is that depending on how the touch screen works, it may be fundamentally ambiguous whether or not rapidly alternating adjacent taps can be interpreted as one touch event wiggling vs two separate touch events

easy to verify if that is the case, but damn, that might put the kibosh on one of my diabolical plans

ok great news, that seems to not be the case here. I made little touch indicators draw colors for what they correspond to, and rapid adjacent taps don't share a color.

bad news; when a touch or sometimes a long string of rapid touches is seemingly dropped without explanation, nothing for them shows up in evtest either D: does that mean my touch screen is just not registering them?

side note: if you try to use gnome's F11 shortcut to take a screen recording, it doesn't give you the option to pick which screen, and it just defaults to recording the main screen. i assume this too is security

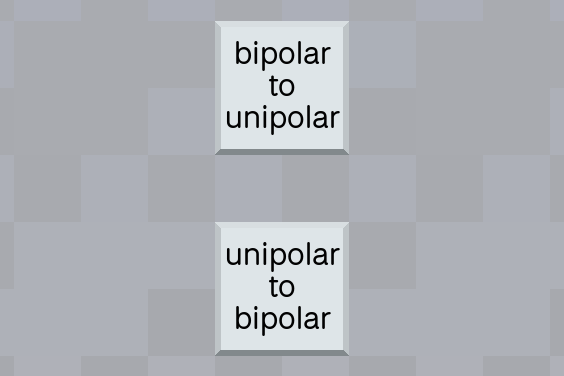

I want to add a pair of instructions for switching between (0, 1) range and (-1, 1) range. so

u = s * .5 + .5

and

s = u * 2 - 1

what are good short names for these operations?

EDIT: can be up to three words, less than 7 letters each preferably closer to 4 each

ok thanks to the magic of variable width font technology, "unipolar" squeaks in under the limit despite being 8 letters
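
For reference, the two conversions as plain Python functions. The function names are placeholders for illustration, not the names the tiles ended up with.

```python
def to_unipolar(s):
    """Map a bipolar signal in [-1, 1] to the unipolar range [0, 1]."""
    return s * 0.5 + 0.5


def to_bipolar(u):
    """Map a unipolar signal in [0, 1] to the bipolar range [-1, 1]."""
    return u * 2.0 - 1.0
```

The two are inverses of each other, so `to_bipolar(to_unipolar(x))` gives back `x` (up to floating point rounding).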

I've got my undulating noise wall mollytime patch piped into a rings clone running in vcvrack and ho boy that is a nice combo

I gotta figure out how to build some good filter effects

why the hell am i outputting 48 khz

i'm pretty sure i can't even hear 10 khz

i'm using doubles for samples which feels excessive except that std::sinf sounds moldy so that has a reason at least, but i do not know why i picked 48 khz for the sampling rate i probably just copied the number from somewhere but seriously why is 48 khz even a thing what is this audiophile nonsense

look at me im just gonna fill up all my ram with gold plated cables

well, ok I'd have to buffer roughly two days of audio to run out of ram but still it just feels obscene

i'm going to have words with this nyquist guy >:(

i love how a bunch of people were like it's because of nyquist duh and then the two smart people were like these two specific numbers are because of video tape and film

EDIT: honorable mention to the 3rd smart person whose text wall came in after I posted this

I'm going to end up with something vaguely resembling a type system in this language because I'm looking to add reading and writing to buffers so I can do stuff like tape loops and sampling, and having different connection types keeps the operator from accidentally using a sine wave as a file handle.

I've got a sneaking suspicion this is also going to end up tracking metadata about how often code should execute, which is kinda a cool idea.

oOoOoh your favorite language's type system doesn't have a notion of time? how dull and pedestrian

oh, so I was thinking about the sampling rate stuff because I want to make tape loops and such, and I figured ok three new tiles: blank tape, tape read, tape write. the blank tape tile provides a handle for the memory buffer to be used with the other two tiles along with the buffer itself.
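
A minimal sketch of how those three tiles could relate, assuming the tape is a fixed-length sample buffer that wraps around like a loop. `BlankTape`, `tape_write`, and `tape_read` are made-up names for illustration, not mollytime's implementation.

```python
class BlankTape:
    """Fixed-length sample buffer; stands in for the 'blank tape' tile."""

    def __init__(self, seconds, sample_rate=48_000):
        # One float per sample, zero-filled (i.e. silent).
        self.samples = [0.0] * int(seconds * sample_rate)


def tape_write(tape, position, value):
    """Store one sample; positions wrap around, so the tape is a loop."""
    tape.samples[position % len(tape.samples)] = value


def tape_read(tape, position):
    """Fetch one sample, using the same wrap-around rule as tape_write."""
    return tape.samples[position % len(tape.samples)]
```

Passing the tape object to both functions plays the role of the handle: read and write only line up if they're wired to the same blank tape.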

I thought it would be cute to just provide tiles for different kinds of blank media instead of making you specify the exact buffer size, but I've since abandoned this idea.

bytes per second is 48000 * sizeof(double)

wikipedia says a cassette typically has either 30 minutes or an hour worth of tape, so

48000 * sizeof(double) * 60 * 30

is a bit over half a GiB (about 0.64), which is way too much to allocate by default for a tile you can casually throw down without thinking about.

I thought ok, well, maybe something smaller. Floppy? 1.44 MiB gets you a few seconds of audio lol.
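
Running the numbers from the last few posts, assuming a single channel and an 8-byte `double` (true on typical platforms). The function names are just for this sketch.

```python
SAMPLE_RATE_HZ = 48_000
BYTES_PER_SAMPLE = 8  # sizeof(double) on typical platforms; one channel assumed


def tape_bytes(seconds):
    """Bytes needed to hold `seconds` of audio."""
    return SAMPLE_RATE_HZ * BYTES_PER_SAMPLE * seconds


def seconds_that_fit(num_bytes):
    """Seconds of audio that fit in `num_bytes`."""
    return num_bytes / (SAMPLE_RATE_HZ * BYTES_PER_SAMPLE)


cassette = tape_bytes(30 * 60)             # 691200000 bytes
cassette_gib = cassette / 2**30            # roughly 0.64 GiB
floppy_s = seconds_that_fit(1.44 * 2**20)  # a little under 4 seconds

print(cassette, cassette_gib, floppy_s)
```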

I have since abandoned this idea, you're just going to have to specify how long the blank tape is.