I had this really great ambient patch going where it was these washes of noise convolved with a short clip from the chrono cross chronopolis bgm, and I recorded 3 minutes of it but audacity just totally mangled it. There's this part that sounded like a storm rumbling in the distance but it was just the patch clipping, and that seems to be the danger zone for recording and playback I guess.

so you're just going to have to pretend you're hovering gently in a vast warm expanse of sky and ocean with no obvious notion of down, as a storm roils off in the distance, during an endless sunset. the moment is fleeting, yet lasts forever

saving and loading is probably going to be the next highest priority feature now. you can do a lot with simple patches, but it would be nice to be able to save the more interesting ones

I've got saving and loading patches working 😎 that was easy

I've been very careful so far to make it impossible to construct an invalid program, but I'm eventually going to add a reciprocal instruction (1/x), and was pondering what to do about the (1/0) case and I had a great idea which is instead of emitting infinity or crashing, what if it just started playing a sound sample as an easter egg. I'd have to add another register to the instruction to track the progress through the sample though.

I haven't picked out a good sample yet for the (1/0) case. A farting sound is too obvious and puerile. The most hilarious idea would be to start playing a Beatles song on the theory that if you messed up the copyright autotrolls would kill your stream, but I don't want the RIAA coming after my ass.

I might just have the rcp component explode if you feed it a zero.

ok it's less sensational than stuffing an easter egg in here, but I realized I can simply have it refuse to tell you the answer if you try to divide by zero

I'm thinking of adding a tile type that could be used to construct sequences. It would take an input for its reset value, a clock input, and an input for its update value. It outputs the reset value until the clock goes from low to high, at which point it copies the current update value to its output. This would let you build stuff like repeating note sequences with two tiles per note + a shared clock source, and you could use flipflops to switch out sections.
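
A minimal sketch of how that tile could behave, evaluated once per sample. The class name, the 0.5 threshold for "high", and the choice to hold the copied value until the next rising edge are all assumptions for illustration, not how mollytime actually implements it.

```python
class SequenceTile:
    """Hypothetical latch tile: emits the reset value until the clock rises."""

    def __init__(self):
        self.latched = None      # None means no rising edge has been seen yet
        self.prev_clock = 0.0    # clock input from the previous eval

    def step(self, reset_value, clock, update_value, threshold=0.5):
        # A rising edge is a low-to-high crossing of the (assumed) threshold.
        rising = self.prev_clock < threshold <= clock
        self.prev_clock = clock
        if rising:
            # Copy whatever the update input holds at the moment of the edge.
            self.latched = update_value
        return reset_value if self.latched is None else self.latched
```

Calling `step` with a low clock keeps returning the reset value; the first call with a high clock returns the update value, and later changes to the update input are ignored until the clock goes low and then high again.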

I made a crappy oscilloscope for debugging. Every frame it draws a new line for the magnitude of the sample corresponding to that frame. This does not show the wave form accurately because it's just whatever the main thread happens to get when it polls for the latest sample. This is mostly meant to help check the magnitude to verify that it matches expectations. A red line indicates clipping happened.

#mollytime And here's what an eccentric patch looks like in scope view. I'm using the RNG node as if it were an oscillator, but I didn't try to correct the range, so the samples are all positive. Also it clips like crazy. Anyways here's 3 minutes and 29 seconds of hard static to relax and study to.

#mollytime personally, I highly recommend the 3 minutes and 29 seconds of hard static

I reworked the oscilloscope so that it now shows the range of samples in the time frame between refreshes. Here's a short video of me playing around with inaudible low frequency oscillators. Also, I added a "scope" tile which you can use to snoop on specific tile outputs in place of the final output. I'm planning on eventually having it show the output over the regular background when connected so you don't have to switch modes to see it.
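
Roughly, the "range of samples between refreshes" idea boils down to a min/max over whatever arrived since the last frame. This is only a sketch: the function name is made up, and treating anything outside ±1.0 as clipping is an assumption about the sample format.

```python
def scope_column(samples, limit=1.0):
    """Summarize the samples since the last refresh as (low, high, clipped)."""
    if not samples:
        return None  # nothing arrived since the last refresh, draw nothing
    low = min(samples)
    high = max(samples)
    # Assumed convention: full scale is +/- limit, anything beyond it clipped.
    clipped = low < -limit or high > limit
    return low, high, clipped
```

Each refresh would draw one vertical line from `low` to `high`, in red when `clipped` is true.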

The scope line is sometimes a bit chonky because it's time synced, and recording causes it to lag a bit. It generally looks a bit better than in the video normally.

You can also make it worse by taking successive screenshots :3

mym tells me this is ghost house music

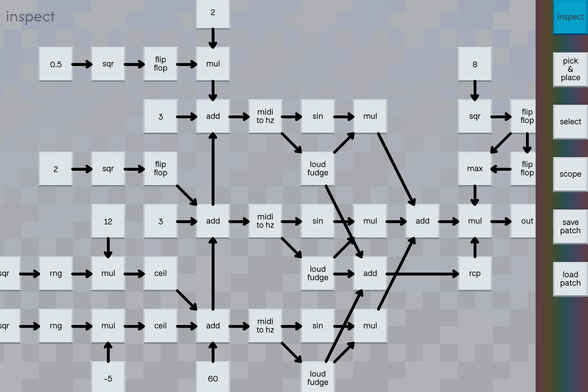

#mollytime and here's most of that patch

one of the things I think is neat about programming is the way fundamental design decisions of the programming languages you use inevitably express themselves in the software you write. for javascript it's NaN poisoning bugs. for c++, it's "bonus data". unreal blueprints have excessive horizontal scrolling on a good day. blender geometry nodes evolve into beautiful deep sea creatures. as I've experimented with mollytime, I've noticed it has a wonderful tendency to summon friendly demons

I have all these big plans for using procgen to do wire management ( https://mastodon.gamedev.place/@aeva/114617952229916105 ) that I still intend on implementing eventually, but I'm finding their absence unexpectedly charming at the moment

I put it to the test by translating my test patch from my python frontend to a hypothetical equivalent node based representation without regard for wire placement and then stepped through my wire placement rules by hand. I'm very pleased with the results, it's a lot clearer to me what it does than the python version is at a glance.

anyone up for some doof and boop music

#mollytime a good test to see if your speakers can emit 55 hz or not

I whipped up a version of the doof-and-boop patch that automates the alternating connections with a pile of flip flops and I've been grooving out to it for the last two hours 😎

I tried doing a live jam for some friends tonight where I had the drum machine patch going along with a simple synth voice on top of that which I intended to drive with the midi touch screen keyboard I made back in January, but I quickly found that to be unplayable due to latency in the system. I'm not sure exactly where as I haven't attempted to measure yet.

I figure if I move the touch screen keyboard into the synth and cut out the trip through the midi subsystem that might make the situation a little better (again, depending on where the real problem is)

Anyways, it got me thinking, I think processing input events synchronously with rendering might be a mistake, and it would be better if I could process input events on the thread where I'm generating the audio.
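
One way that could look, as a sketch only: the UI thread posts input events into a thread-safe queue, and the audio thread drains it before evaluating each batch. The function names and the event tuples are hypothetical, and this is not how mollytime currently handles input.

```python
import queue

# Shared between the UI thread (producer) and the audio thread (consumer).
pending_events = queue.SimpleQueue()

def post_event(event):
    """Called from the UI thread whenever an input event arrives."""
    pending_events.put(event)

def drain_events():
    """Called from the audio thread before it evaluates the next batch."""
    events = []
    while True:
        try:
            events.append(pending_events.get_nowait())
        except queue.Empty:
            return events  # events come back in the order they were posted
```

The audio thread never blocks here, since `get_nowait` raises `queue.Empty` instead of waiting when there is nothing to read.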

I added an instruction called "boop" that implements a momentary switch, and built a small piano out of it, and also built a metronome in the patch. That is very playable, so the latency must have been something to do with having one program implement the midi controller and the other implement the synth. I think I had this same problem with timidity a while back, so maybe it's just linux not being designed for this sort of thing.

with one program being the midi controller and the synthesizer it's pretty playable, but sometimes touch events seem to get dropped or delayed. that is probably my fault though, there's a lot of quick and dirty python code in that path, though I'm not sure why it's only occasional hitches

k, I've got tracy partially hooked up to mollytime (my synthesizer), and with mollytime running stand-alone playing the drum machine patch (low-ish program complexity), the audio thread (which repeatedly evaluates the patch directly when buffering) wakes up every 3 milliseconds, and the repeated patch invocations take about 0.5 milliseconds to run, with most patch evals taking about 3 microseconds each.
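
Back-of-the-envelope arithmetic on those numbers, treating them as round figures (the variable names are just for illustration):

```python
wake_period_s = 3e-3    # audio thread wakes up every 3 milliseconds
batch_time_s = 0.5e-3   # repeated patch invocations per wake-up
eval_time_s = 3e-6      # typical single patch eval

# Roughly how many evals fit in one batch at the typical eval time.
evals_per_wake = batch_time_s / eval_time_s

# Fraction of each wake period spent evaluating the patch.
busy_fraction = batch_time_s / wake_period_s
```

That works out to somewhere around 167 evals per wake-up, with the audio thread busy for about a sixth of each period, so there is a fair amount of headroom at this patch complexity.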

almost a third of each patch invocation is asking ALSA if there's any new midi events despite there being no midi devices connected to mollytime, but that's a smidge under a microsecond of time wasted per eval, and it's not yet adding up enough for me to care so whatever

anyways, everything so far is pointing toward the problem being in the UI frontend I wrote, which is great, because that can be solved by simply writing better code probably unless the problem is like a pygame limitation or something

I switched pygame from initializing everything by default like they recommend to just initializing the two modules I actually need (display and font) and the audio thread time dropped, probably because now it's not contending with the unused pulse audio worker thread pygame spun up. The typical patch sample eval is about 1.5 microseconds now, and a full batch is now under a millisecond.

tracy is a good tool for profiling C++, but the python and C support feels completely unserious :/

making building with cmake a requirement to use the python bindings and then only providing vague instructions for how to do so is an odd choice.

using cmake is a bridge too far, so I figure I'll just mimic what the bindings do, but it turns out they're a bit over engineered as they're meant to adapt C++ RAII semantics to python decorators and I don't want to pay for the overhead for that when I could just have a begin and end function call to bind instead. that probably will have to be wrapping new and delete like they do though because there is no straightforward C call for this.

The relevant C APIs are provided as a pile of macros that declare static variables that track the context information for the begin and end regions. This seems to be on the theory that you would never ever ever want to wrap these in a function for the sake of letting another language use them in a general purpose way. The inner functions they call are all prefixed with three underscores, which is basically the programmer way of saying "trespassers will be shot without hesitation or question"

also there's this cute note in the docs saying that if you use the C API it'll enable some kind of expensive validation step unless you go out of your way to disable it, which you shouldn't do but here's how 🙄

C++ RAII semantics are so universally applicable to all programs (sarcasm) that even the C++ standard library provides alternatives to scope guard objects if you don't want to use them. come on man

tragic. pygame.display.flip seems to always imply a vsync stall if you aren't using it to create an opengl context, and so solving the input events merging problem is going to be a pain in the ass. it is, however, an ass pain for another night: it is now time to "donkey kong"

EDIT: pygame.display.flip does not imply vsync! I just messed up my throttling logic :D huzzah!

ideally the ordering of those events would be represented in the patch evals but they just happen as they happen. It's plenty responsive with a mouse though. I'll have to try out the touch screen later and see if the problem is basically solved now or not.

ok I can go ape shit with a mouse and it works perfectly and like I mean double kick ape shit, but the dropped events problem still persists with the touch screen ;_;

it's possible that I'm doing something wrong with the handling of touch events and it's something i can fix still, but now i'm wondering if there's a faster way to confirm if "going ape shit" playing virtual instruments is a normal intended use case for touch screen monitors by their manufacturers and the people who wrote the linux infrastructure for them, or if they all were going for more of a "tapping apps" vibe

and by "going ape shit" i just mean gently flutter tapping two fingers rapidly to generate touch events faster than i could with just one finger, such as to trill between two notes or repeatedly play one very quickly. doing that on one mouse button to generate one note repeatedly very fast feels a lot more aggressive