The natural thing to implement next would be save and load, but pygame doesn't provide anything for conjuring the system file io dialogue windows (SDL3 has it, but pygame is SDL2 under the hood iirc). I'll worry about that another time I guess.

I'm probably going to go with an xml format so it's easy to extend. I want it to be easy to make custom controls in other applications, e.g. game engines, so this feels like the obvious choice. Also I'm one of the five people who likes xml.

i'll probably end up adding envelope generators and midi before i implement save and load since it's not really worth using without those but it really depends on which way the wind is blowing when i next work on this

I'm really excited because all of the hard stuff is done now. I mean none of it was hard to implement or design, it's just that I made a bunch of architectural decisions up front and five weeks later the result is a thing that 1) works, 2) has pretty low implementation complexity across the entire program, and 3) has obvious paths to implementation for all of the missing features and areas I want to improve upon.

and so further effort put into developing this will have an extremely favorable ratio of time spent actually making the program more useful vs wallowing in tech debt

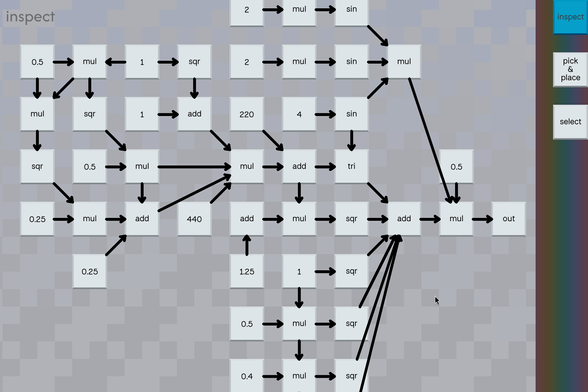

I made a short sequence of boops entirely out of oscillators and arithmetic. There's no sequencer or envelope generator.

#mollytime

and this is the patch, or at least here is most of the patch. it probably looks complicated but I did not put a lot of thought into it

from my experimentation so far since I got all this working end-to-end, I think modality worked as a useful convenience for standing up a visual programming language quickly, but it adds too much friction to this one. In particular when making patches I want to be able to alternate between placing/moving stuff around and connecting stuff, so those two modes need to be merged for sure.

I'm not really making good use of multitouch because I've been developing this using a mouse. I'd like to have it so I can touch two things to hear what they sound like connected or disconnected; and then double tap to connect or disconnect that connection when a quick connection is possible. Mouse mode will need to work differently.

Another verb I would like to implement is the ability to disconnect a wire and connect a new wire in the same frame. The current flow for disconnecting a wire and connecting a new wire is too long even with a touch screen, but I think anything where it is not one action would be.

Being able to suspend/resume updating the patch that is playing while making edits is also a thing I want to implement for similar reasons, but I think being able to splice parts quickly is useful in different ways.

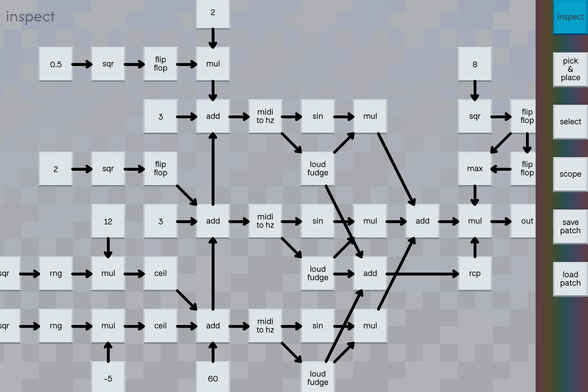

I added a flip/flop instruction. It's mostly a toy, but it's helped me clarify how I want to handle envelopes.

here's what that patch sounds like

I'm sorry everyone but I have to confess to a sin. See that chain of flip flops up there two posts ago? That's a number. That's a number where every bit is a double precision float 😎
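
For anyone wondering how a chain of flip flops ends up being a number, here's a minimal sketch. It assumes each flip flop toggles between 0.0 and 1.0 whenever its input rises past 0.5, and that each one's output feeds the next one's input. The real flip/flop instruction may behave differently; `FlipFlop`, `chain_value`, and `count_pulses` are made-up names for illustration.

```python
class FlipFlop:
    """Hypothetical toggle tile: flips between 0.0 and 1.0 on a rising input edge."""

    def __init__(self):
        self.state = 0.0    # the "bit", stored as a double precision float
        self.prev_in = 0.0

    def step(self, x):
        if self.prev_in < 0.5 <= x:   # input went from low to high
            self.state = 1.0 - self.state
        self.prev_in = x
        return self.state


def chain_value(chain, clock):
    """Push one clock sample through the chain and read the bits back as an int."""
    signal = clock
    for ff in chain:
        signal = ff.step(signal)   # each output drives the next flip flop
    return sum(int(ff.state) << i for i, ff in enumerate(chain))


def count_pulses(n_bits, n_pulses):
    """Pulse the clock high then low n_pulses times, recording the value each time."""
    chain = [FlipFlop() for _ in range(n_bits)]
    values = []
    for _ in range(n_pulses):
        chain_value(chain, 1.0)
        values.append(chain_value(chain, 0.0))
    return values
```

With rising-edge toggles the chain counts down rather than up: `count_pulses(3, 8)` walks from 7 to 0. Toggling on the falling edge instead would make it count up.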

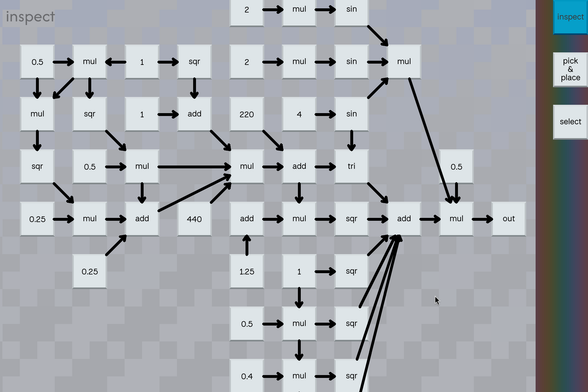

I came up with a pretty good approximation of a dial tone on accident while implementing a mixing node.

#mollytime

and here's the patch for that sound

Also now that I have tile types with multiple inputs and/or multiple outputs, I went and cleaned up manual connect mode to make it a little more clear what's going on.

today I added an envelope generator and some basic midi functionality. and then I made this absolute dog shit patch :3

#mollytime

it'll be a bit of work to do, but I want to eventually add a convolution verb to my synthesizer so I don't have to run it as a separate program. I'll probably also end up rewriting it to be an FFT because it would be nice to be able to have longer samples and maybe run several convolutions simultaneously. That might be "get a better computer" territory though.
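
To make the FFT idea concrete, here's a rough pure-Python sketch of convolution by multiplying spectra. It is not the existing convolution program, just the textbook technique: zero-pad both inputs to a power of two, transform, multiply pointwise, transform back. `fft` and `fft_convolve` are illustrative names, and both inputs are assumed to be non-empty.

```python
import cmath


def fft(x, inverse=False):
    """Recursive radix-2 FFT (unnormalized); len(x) must be a power of two."""
    n = len(x)
    if n == 1:
        return list(x)
    even = fft(x[0::2], inverse)
    odd = fft(x[1::2], inverse)
    sign = 1 if inverse else -1
    out = [0j] * n
    for k in range(n // 2):
        twiddle = cmath.exp(sign * 2j * cmath.pi * k / n) * odd[k]
        out[k] = even[k] + twiddle
        out[k + n // 2] = even[k] - twiddle
    return out


def fft_convolve(signal, impulse):
    """Linear convolution via pointwise multiplication in the frequency domain."""
    out_len = len(signal) + len(impulse) - 1
    size = 1
    while size < out_len:   # pad so the circular result does not wrap around
        size *= 2
    a = fft(list(signal) + [0.0] * (size - len(signal)))
    b = fft(list(impulse) + [0.0] * (size - len(impulse)))
    product = [p * q for p, q in zip(a, b)]
    # The inverse transform above is unnormalized, so divide by the size here.
    return [v.real / size for v in fft(product, inverse=True)][:out_len]
```

The win over the direct method is that the cost grows roughly with n log n instead of n times the impulse length, which is what makes longer samples plausible. A streaming synth would likely process fixed-size blocks (e.g. overlap-add) rather than whole signals like this.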

I had this really great ambient patch going where it was these washes of noise convolved with a short clip from the chrono cross chronopolis bgm, and I recorded 3 minutes of it but audacity just totally mangled it. There's this part that sounded like a storm rumbling in the distance but it was just the patch clipping, and that seems to be the danger zone for recording and playback I guess.

so you're just going to have to pretend you're hovering gently in a vast warm expanse of sky and ocean with no obvious notion of down, as a storm roils off in the distance, during an endless sunset. the moment is fleeting, yet lasts forever

saving and loading is probably going to be the next highest priority feature now. you can do a lot with simple patches, but it would be nice to be able to save the more interesting ones

I've got saving and loading patches working 😎 that was easy

I've been very careful so far to make it impossible to construct an invalid program, but I'm eventually going to add a reciprocal instruction (1/x), and was pondering what to do about the (1/0) case and I had a great idea which is instead of emitting infinity or crashing, what if it just started playing a sound sample as an easter egg. I'd have to add another register to the instruction to track the progress through the sample though.

I haven't picked out a good sample yet for the (1/0) case. A farting sound is too obvious and puerile. The most hilarious idea would be to start playing a Beatles song on the theory that if you messed up the copyright autotrolls would kill your stream, but I don't want the RIAA coming after my ass.

I might just have the rcp component explode if you feed it a zero.

ok it's less sensational than stuffing an easter egg in here, but I realized I can simply have it refuse to tell you the answer if you try to divide by zero

I'm thinking of adding a tile type that could be used to construct sequences. It would take an input for its reset value, a clock input, and an input for its update value. It outputs the reset value until the clock goes from low to high, at which point it copies the current update value to its output. This would let you build stuff like repeating note sequences with two tiles per note + a shared clock source, and you could use flipflops to switch out sections.
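
Here's a minimal sketch of that tile's behavior as described, assuming the clock counts as "high" at 0.5 or above and that the tile keeps re-copying the update value on every later rising edge. How (or whether) it ever goes back to the reset value isn't decided above, so this sketch leaves that out. `LatchTile` is a made-up name.

```python
class LatchTile:
    """Hypothetical sequence tile: holds a value latched on rising clock edges."""

    def __init__(self):
        self.latched = None     # None means no rising edge has been seen yet
        self.prev_clock = 0.0

    def step(self, reset_value, clock, update_value):
        if self.prev_clock < 0.5 <= clock:   # clock went from low to high
            self.latched = update_value      # copy the current update value
        self.prev_clock = clock
        # Until the first rising edge, pass the reset value straight through.
        return reset_value if self.latched is None else self.latched
```

Feeding it a slow clock and a changing update input shows the hold behavior:

```python
tile = LatchTile()
inputs = [(0.0, 10.0), (0.0, 20.0), (1.0, 30.0), (1.0, 40.0), (0.0, 50.0), (1.0, 60.0)]
outputs = [tile.step(0.0, clock, update) for clock, update in inputs]
# outputs == [0.0, 0.0, 30.0, 30.0, 30.0, 60.0]
```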

I made a crappy oscilloscope for debugging. Every frame it draws a new line for the magnitude of the sample corresponding to that frame. This does not show the wave form accurately because it's just whatever the main thread happens to get when it polls for the latest sample. This is mostly meant to help check the magnitude to verify that it matches expectations. A red line indicates clipping happened.

#mollytime

And here's what an eccentric patch looks like in scope view. I'm using the RNG node as if it were an oscillator, but I didn't try to correct the range, so the samples are all positive. Also it clips like crazy. Anyways here's 3 minutes and 29 seconds of hard static to relax and study to.

#mollytime

personally, I highly recommend the 3 minutes and 29 seconds of hard static

I reworked the oscilloscope so that it now shows the range of samples in the time frame between refreshes. Here's a short video of me playing around with inaudible low frequency oscillators. Also, I added a "scope" tile which you can use to snoop on specific tile outputs in place of the final output. I'm planning on eventually having it show the output over the regular background when connected so you don't have to switch modes to see it.
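
The "range of samples between refreshes" idea boils down to something like the sketch below: instead of drawing one polled sample per frame, take everything generated since the last refresh and draw a line from the lowest to the highest sample. The clipping threshold of 1.0 and the name `scope_column` are assumptions for illustration, not the actual implementation.

```python
def scope_column(samples, clip_level=1.0):
    """Summarize the samples since the last refresh as (low, high, clipped)."""
    if not samples:
        return None   # nothing new arrived, so there is nothing to draw
    low, high = min(samples), max(samples)
    # Flag the column (e.g. draw it red) if anything left the valid range.
    clipped = low < -clip_level or high > clip_level
    return low, high, clipped
```

So `scope_column([0.1, -0.4, 0.9])` gives `(-0.4, 0.9, False)`, while a block containing 1.3 comes back flagged as clipped.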

The scope line is sometimes a bit chonky because it's time synced, and recording causes it to lag a bit. It normally looks a bit better than in the video.

You can also make it worse by taking successive screenshots :3

mym tells me this is ghost house music

#mollytime

and here's most of that patch

one of the things I think is neat about programming is the way fundamental design decisions of the programming languages you use inevitably express themselves in the software you write. for javascript it's NaN poisoning bugs. for c++, it's "bonus data". unreal blueprints have excessive horizontal scrolling on a good day. blender geometry nodes evolve into beautiful deep sea creatures. as I've experimented with mollytime, I've noticed it has a wonderful tendency to summon friendly demons

I have all these big plans for using procgen to do wire management (https://mastodon.gamedev.place/@aeva/114617952229916105) that I still intend on implementing eventually, but I'm finding their absence unexpectedly charming at the moment


I put it to the test by translating my test patch from my python frontend to a hypothetical equivalent node based representation without regard for wire placement and then stepped through my wire placement rules by hand. I'm very pleased with the results, it's a lot clearer to me what it does than the python version is at a glance.

anyone up for some doof and boop music

#mollytime

a good test to see if your speakers can emit 55 hz or not

I whipped up a version of the doof-and-boop patch that automates the alternating connections with a pile of flip flops and I've been grooving out to it for the last two hours 😎

I tried doing a live jam for some friends tonight where I had the drum machine patch going along with a simple synth voice on top of that which I intended to drive with the midi touch screen keyboard I made back in January, but I quickly found that to be unplayable due to latency in the system. I'm not sure exactly where as I haven't attempted to measure yet.

I figure if I move the touch screen keyboard into the synth and cut out the trip through the midi subsystem that might make the situation a little better (again, depending on where the real problem is)

Anyways, it got me thinking, I think processing input events synchronously with rendering might be a mistake, and it would be better if I could process input events on the thread where I'm generating the audio.